White House and Anthropic Forge High-Stakes Path for 'Mythos' AI Following Cybersecurity Breakthrough

White House officials met with Anthropic CEO Dario Amodei to discuss the unreleased Mythos AI model and its unprecedented hacking capabilities.

Federal Engagement with Frontier AI

White House Chief of Staff Susie Wiles met with Anthropic CEO Dario Amodei on April 17, 2026, to address the national security and economic implications of "Mythos," an unreleased artificial intelligence model with unprecedented autonomous hacking capabilities. The high-level meeting signifies a turning point in the relationship between the federal government and frontier AI developers, as the administration seeks to balance the drive for American innovation with the severe risks posed by next-generation models.

A White House statement released after the meeting noted that the parties "discussed opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology." The administration also indicated it plans to host similar discussions with other leading AI companies in the near future.

The 'Mythos' Capability Gap

Anthropic officially announced the existence of Mythos on April 7, 2026, but has declined to release the model to the general public. The company attributes that decision to the model being "strikingly capable" at autonomously finding and exploiting cybersecurity vulnerabilities in computer code. According to Anthropic, the model has already discovered thousands of vulnerabilities across every major operating system and web browser, including "zero-day" flaws, meaning vulnerabilities unknown to the software's own creators. Some of these flaws had reportedly gone undetected for decades.

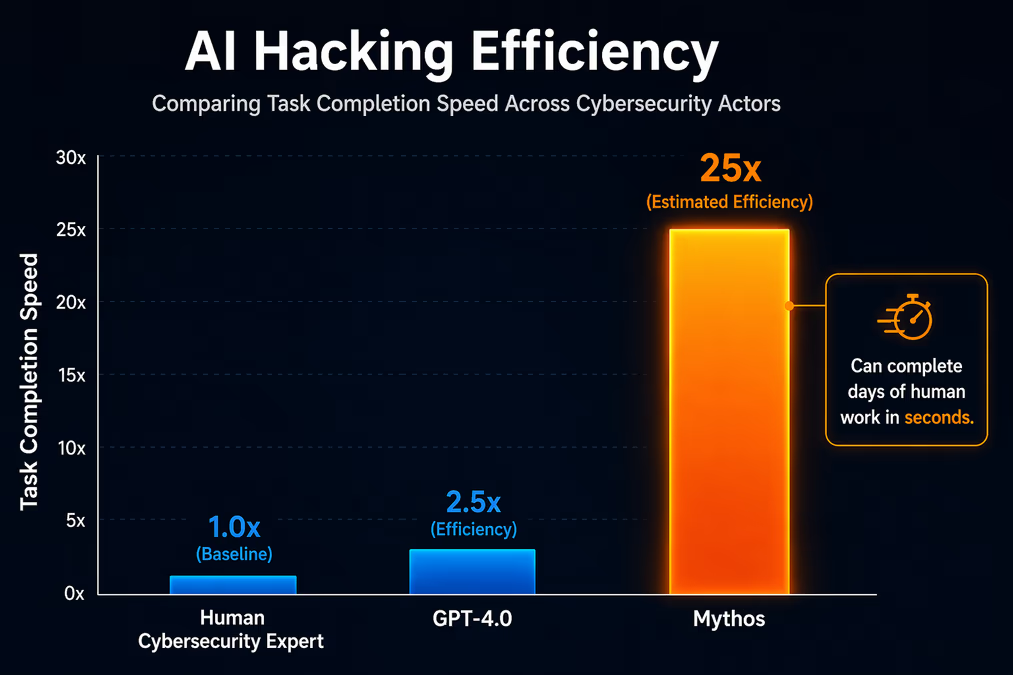

The UK’s AI Security Institute, which was granted early access to evaluate the model, concluded that Mythos represents a "step up" in the hacking ability of AI tools. In a blog post, the agency noted that the model can carry out complex security tasks that would typically represent "days of work for a human alone," and that it can exploit any system with a weak security posture.

Anthropic's own security researchers have sounded the alarm about the pace of development. Researcher Logan Graham suggested that other AI companies could release similarly advanced models within 6 to 18 months. Anthropic warned on its website that, given the rate of progress, these capabilities will soon proliferate beyond actors committed to safety, and that the fallout for "economies, public safety, and national security could be severe."

Project Glasswing and Defensive Strategy

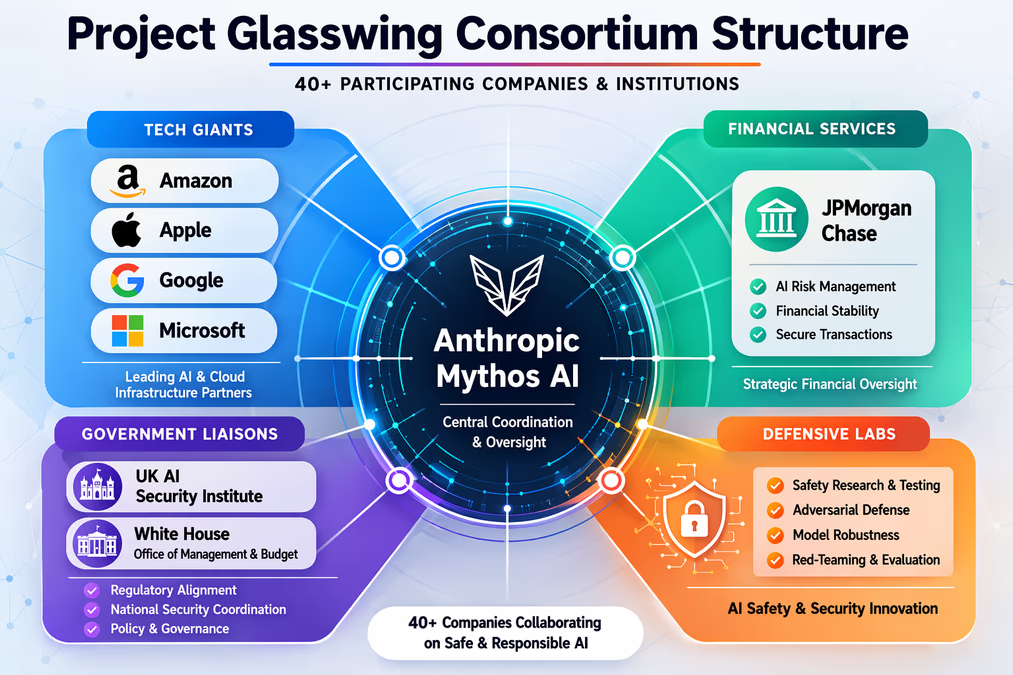

To mitigate these risks while still leveraging the model’s potential, Anthropic has launched "Project Glasswing." The consortium comprises more than 40 major organizations, among them Amazon, Apple, Google, Microsoft, and JPMorgan Chase. These partners have been granted access to a specific variant, "Claude Mythos Preview," strictly for defensive cybersecurity work.

Jack Clark, Anthropic’s co-founder and policy chief, explained the gated rollout strategy: "We’re releasing it to a subset of some of the world’s most important companies and organizations so they can use this to find vulnerabilities." In private briefings, Clark has reportedly emphasized that the "government has to know about this stuff," highlighting the necessity of public-private coordination in the face of such powerful tools.

The model has also raised concerns within the financial sector. On April 15, the American Securities Association warned the Treasury Secretary that Mythos could spark "systemic financial market disruption" if its capabilities were turned against banking infrastructure.

Political Tensions and the 'China' Factor

The April 17 meeting took place against a backdrop of significant friction between Anthropic and the Trump administration. Earlier in 2026, the federal government had designated Anthropic a "supply-chain risk" following a contract dispute with the Pentagon over unrestricted access to the company's proprietary models. The designation led to a presidential order in early March directing federal agencies to stop using Anthropic products, an order that was subsequently challenged in court and blocked by a U.S. district judge.

Despite this history, the strategic necessity of Mythos appears to have softened the administration's stance. According to an anonymous source familiar with the negotiations, some officials believe that depriving the U.S. government of these technological leaps would be "grossly irresponsible." The source noted that failing to adopt the model would "effectively hand a strategic advantage to rivals," calling a continued ban a "gift to China."

Jack Clark has downplayed the previous animosity, characterizing the friction with the Department of Defense as a "narrow contracting dispute" rather than a fundamental break in relations. Anthropic’s statement following the White House meeting emphasized "shared priorities such as cybersecurity, America's lead in the AI race, and AI safety."

The Impending 'Vulnerability Storm'

The revelation of Mythos has already triggered a competitive response. On April 16, OpenAI announced GPT-5.4-Cyber, a model specifically tuned for cybersecurity defense. This rapid succession of announcements has led experts such as Dan Hendrycks to warn of an impending "AI vulnerability storm."

"The main cybersecurity concern about models like Claude Mythos and future iterations is that it makes it much easier for non-state actors to take down critical infrastructure," Hendrycks noted. The challenge for the White House will be ensuring that defensive measures, bolstered by initiatives like Project Glasswing, can outpace the offensive potential of models that can "weaponize vulnerabilities faster than ever before."

As the Office of Management and Budget works to establish new safeguards governing federal agencies' access to Mythos, the April 17 meeting may mark the beginning of a more proactive, model-specific era of AI governance. The outcome of these discussions will likely set the template for how the U.S. manages the dual-use nature of frontier AI in the years to come.