Weya AI Releases Open-Source Speech Enhancement Model; Google Previews Gemini 3.1 Flash TTS

Weya AI launches open-source speech model Hush while Google previews Gemini 3.1 Flash TTS, enhancing real-time audio clarity and emotional delivery.

On April 9, 2026, Weya AI officially released 'Hush,' an open-source speech enhancement model designed to tackle the 'cocktail party problem' in real-time communication. Less than a week later, on April 15, Google expanded its generative AI portfolio by introducing a public preview of Gemini 3.1 Flash Text-to-Speech (TTS), a model that prioritizes emotional expressivity and granular user control. Together, these releases signal a significant leap forward in the industry's ability to both understand and generate human speech in increasingly complex, real-world environments.

Weya AI’s Hush: Silence in 8 Megabytes

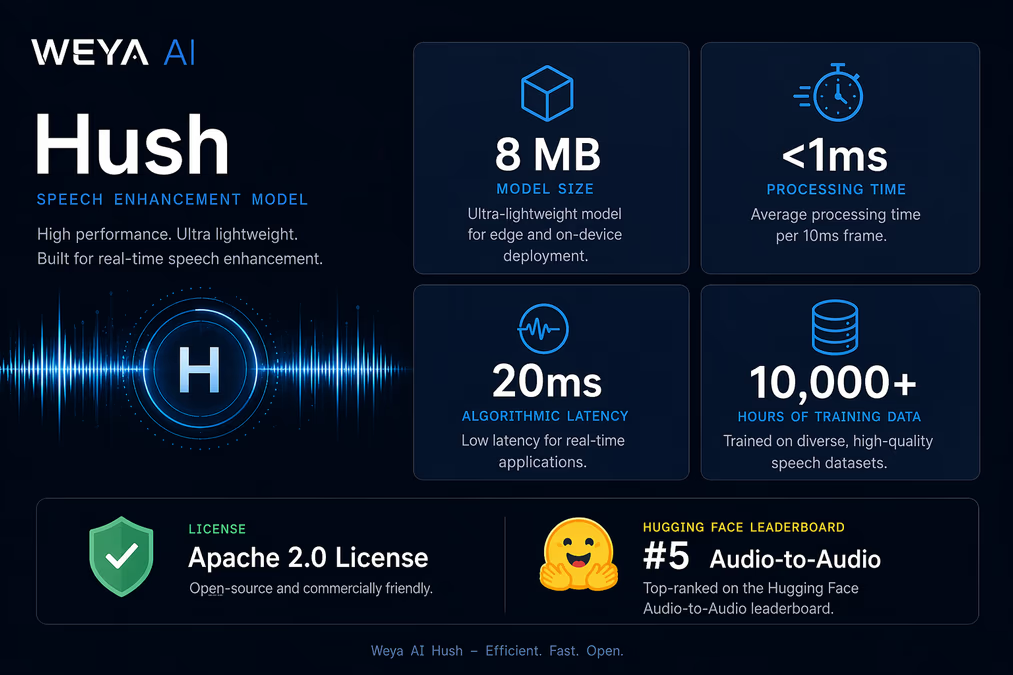

Hush represents a technical milestone for edge-compatible AI. Developed by the Noida-based startup Weya AI, the model is remarkably lightweight at just 8 MB. Unlike many contemporary deep learning models that require high-end GPUs to function in real-time, Hush is optimized to run efficiently on a standard CPU. It processes audio with an algorithmic latency of approximately 20 ms, handling individual 10 ms frames in under 1 ms.

The primary objective of Hush is the isolation of a primary speaker from background noise and competing human voices. This is a critical challenge for Weya AI’s core business in the Banking, Financial Services, and Insurance (BFSI) sector, where automated agents must process customer calls often originating from noisy streets or busy offices. The model was trained on 10,000 hours of mixed data, with 60% of that dataset specifically featuring competing human voices at a 12–24 dB Signal-to-Interference Ratio (SIR).

Atul Singh, CTO at Weya AI, noted that Hush addresses a fundamental gap in current voice technology. "Hush solves one of the most overlooked failure points in production voice AI," Singh stated, referring to how traditional models frequently misinterpret speech when multiple people are talking in the background. By making the model available under the Apache 2.0 license on Hugging Face and GitHub—where it debuted at #5 on the Audio-to-Audio leaderboard—Weya AI is positioning itself as a key contributor to the open-source voice stack.

Google Gemini 3.1 Flash TTS: Directing the AI Voice

While Weya AI focuses on cleaning up input, Google is refining the quality of synthetic output. Gemini 3.1 Flash TTS, now available on Google AI Studio and Vertex AI, moves beyond the flat, robotic delivery of early TTS systems. The model supports over 70 languages and provides 30 prebuilt voices, but its most distinct feature is a library of over 200 expressive audio tags.

These tags allow developers to 'direct' the AI’s delivery by inserting prompts such as [determination], [enthusiasm], [whispers], or [laughs] directly into the text. This allows for precise control over pacing and emotional resonance, making the AI suitable for high-stakes narration or character work in gaming and education. The model also supports native multi-speaker synthesis, allowing two distinct voices to be generated within a single API call.

Khulan Davaajav of Google emphasized the model's flexibility during the announcement. She explained that Gemini 3.1 Flash TTS delivers precise controllability and expressivity, empowering developers and enterprises to build advanced AI-speech applications. The model introduces a high level of controllability by allowing users to steer the delivery using the extensive tag system.

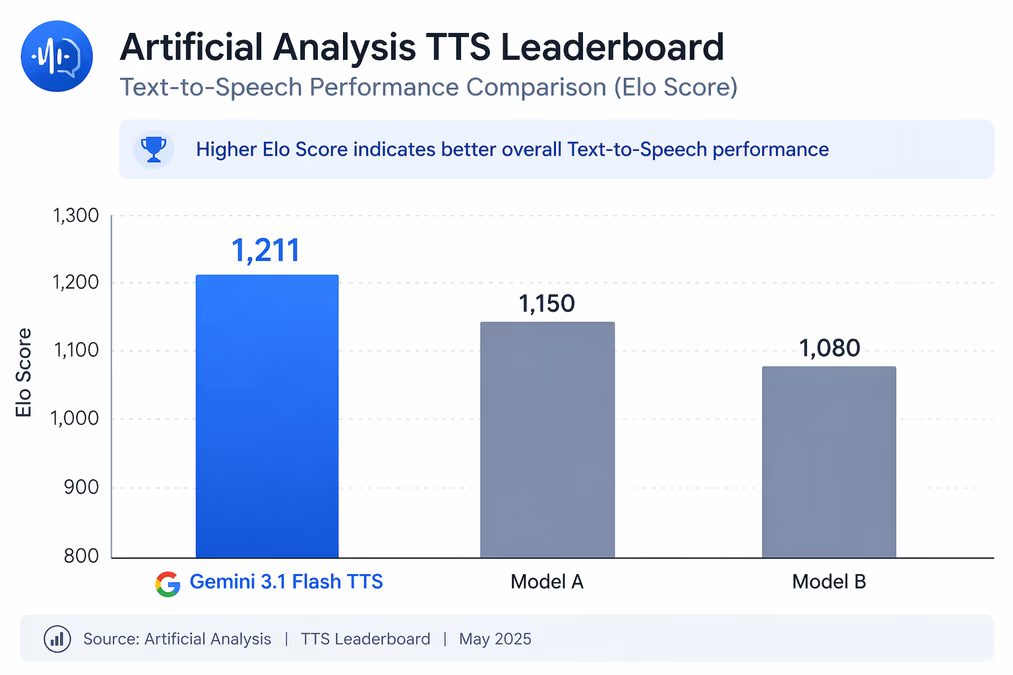

To address safety and authenticity concerns, Google has integrated SynthID watermarking into the audio output. This invisible digital watermark helps identify content as AI-generated without degrading the listening experience. The model has already demonstrated high performance, achieving an Elo score of 1,211 on the Artificial Analysis TTS leaderboard.

The Shift Toward Controllability and Accessibility

The dual arrival of Hush and Gemini 3.1 Flash TTS highlights a broader trend: the move from 'general' AI capabilities to specialized, controllable tools. Weya AI’s decision to open-source Hush reflects a growing movement where open-weight models challenge proprietary systems in accessibility. While competitors like Speechmatics have developed 'Speaker Lock' and companies like Hiya offer background noise defenses, Weya's CPU-first approach democratizes high-end speech enhancement for developers without massive hardware budgets.

Google’s release, meanwhile, suggests that the next frontier for synthetic speech is not just sounding human, but sounding contextually appropriate. By providing low-cost, high-speed 'Flash' tier pricing alongside deep emotional control, Google is targeting the mass integration of AI voices into Google Workspace and third-party apps via Google Vids.

As these technologies mature, the barrier between human and synthetic interaction continues to thin. The ability to isolate a single voice in a crowd and then replicate that voice with nuanced emotion marks a pivotal moment for call centers, content creators, and the future of ambient computing.