Vercel Security Breach Traced to Compromised Third-Party AI Platform

Vercel confirms a security breach originating from a third-party AI tool compromise, exposing non-sensitive environment variables for some users.

Vulnerability in the AI Supply Chain

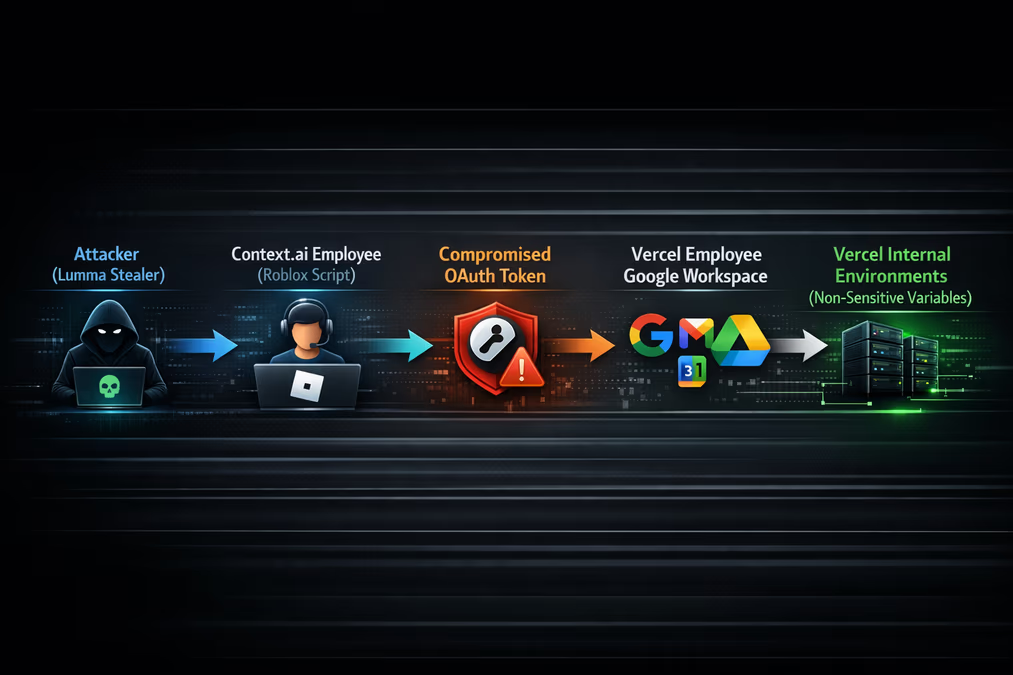

Vercel, the company behind the popular Next.js framework, has disclosed a security incident in which attackers gained unauthorized access to its internal systems through a compromise of Context.ai, a third-party AI tool. The breach highlights a growing trend of 'supply chain escalation,' where vulnerabilities in niche AI services can provide a gateway into some of the tech industry’s most critical infrastructure.

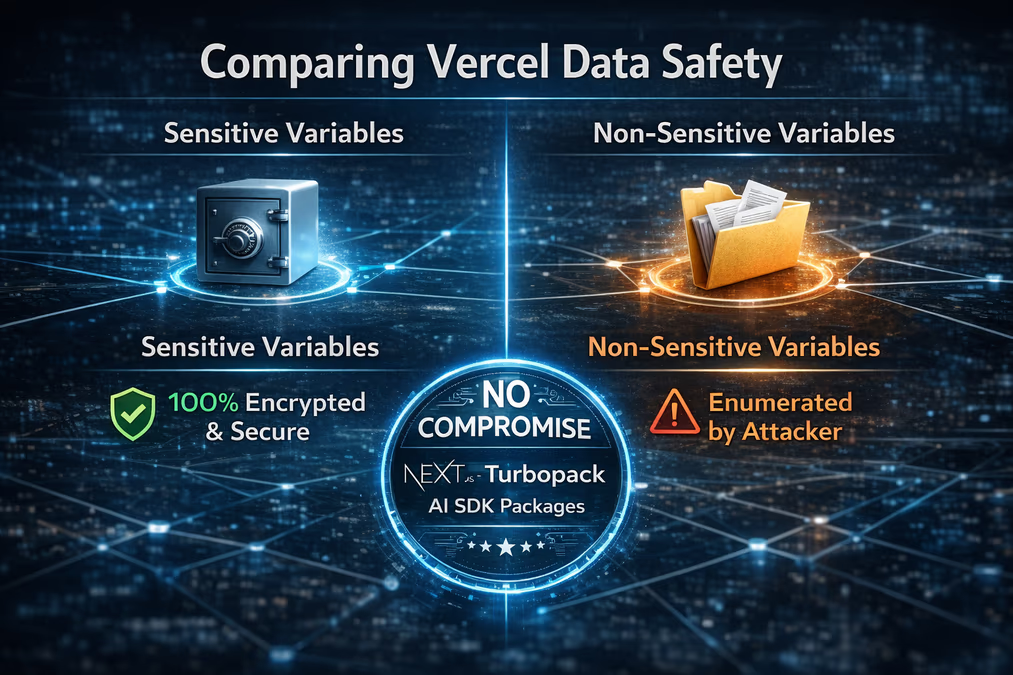

The attacker gained initial access by taking over a Vercel employee's Google Workspace account through a compromised OAuth application belonging to Context.ai. According to Vercel’s security bulletin, the incident originated with the compromise of the third-party tool, and the resulting account takeover gave the threat actor access to some Vercel environments. While the attacker was able to enumerate and access certain environment variables, Vercel has confirmed that variables marked as 'sensitive' remained protected by encryption.

Impact on Customers and Infrastructure

During the incident, a limited subset of Vercel customers had their non-sensitive environment variables exposed. Vercel has stated that it directly notified these customers and advised them to rotate their credentials as a precautionary measure. Guillermo Rauch, CEO of Vercel, addressed the situation by emphasizing the platform’s defense-in-depth mechanisms. "Vercel stores all customer environment variables fully encrypted at rest," Rauch explained. "We do have a capability however to designate environment variables as 'non-sensitive'. Unfortunately, the attacker got further access through their enumeration."
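For teams acting on the rotation advice, a first step is working out which variables were not marked as sensitive. The following is a minimal sketch, assuming you have already exported an inventory of your environment variables as a list of records. The record shape (`key` and a boolean `sensitive` field) and the sample names are hypothetical and do not reflect Vercel's actual API response format.

```python
def rotation_candidates(env_vars):
    """Return the names of variables NOT marked sensitive, sorted alphabetically.

    Assumption: each record is a dict with a "key" name and an optional
    boolean "sensitive" flag. A missing flag is treated as non-sensitive,
    which errs on the side of rotating more rather than less.
    """
    return sorted(v["key"] for v in env_vars if not v.get("sensitive", False))


# Hypothetical inventory for illustration only.
sample_env = [
    {"key": "DATABASE_URL", "sensitive": True},
    {"key": "API_BASE_URL", "sensitive": False},
    {"key": "ANALYTICS_ID"},  # no flag recorded, so treated as non-sensitive
]

for name in rotation_candidates(sample_env):
    print(f"Review and rotate if it holds a credential: {name}")
```

The output is only a review list. Whether a given value is actually a credential that needs rotating still requires a human decision.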

Critically for the broader developer ecosystem, Vercel confirmed that no npm packages published by the company, including Next.js, Turbopack, and the Vercel AI SDK, were compromised. The company is working with Google-owned Mandiant and other cybersecurity experts on a full forensic investigation and remains in contact with law enforcement.

The Anatomy of the Attack

Vercel describes the threat actor as 'highly sophisticated,' citing the attacker's rapid operational velocity and deep understanding of Vercel’s internal systems. While Vercel has not officially named the group, unverified reports suggest that the threat actor 'ShinyHunters' has claimed responsibility for the breach. These reports, appearing on BreachForums, suggest the stolen data is being offered for sale for $2 million, though Vercel has not confirmed the validity of these claims.

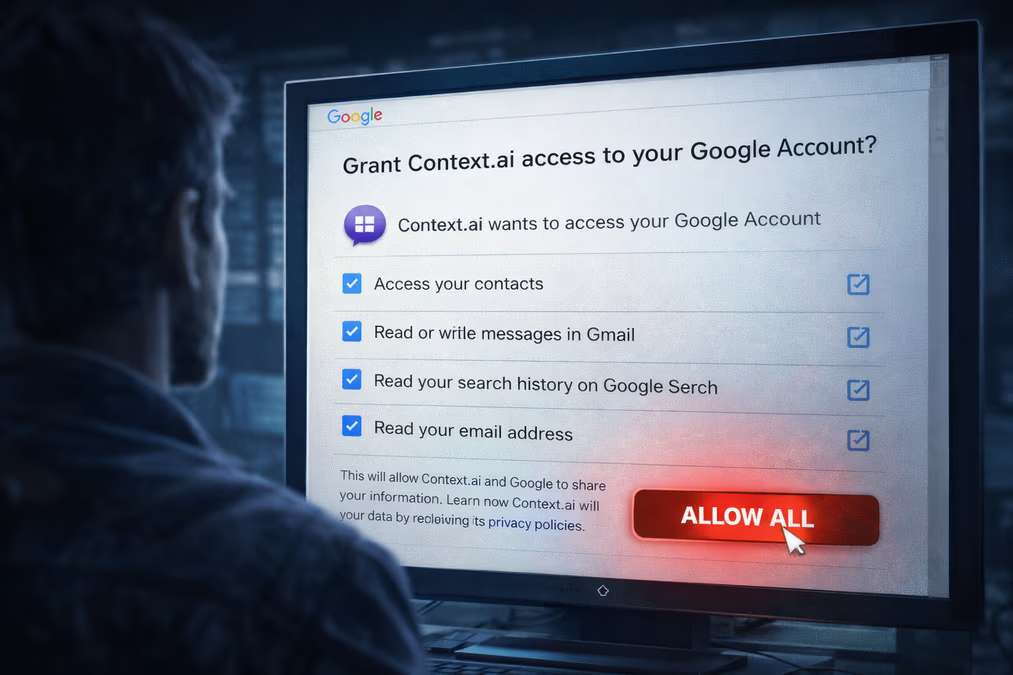

The root of the issue appears to lie in the permissions granted to the Context.ai 'AI Office Suite.' Reports from cybersecurity firm Hudson Rock suggest that the chain of events began in February 2026, when a Context.ai employee was allegedly infected by Lumma Stealer malware after downloading Roblox game exploit scripts. This initial compromise potentially allowed attackers to seize credentials for 'support@context.ai,' eventually pivoting into the OAuth tokens of users who had granted extensive permissions to the tool.

David Lindner, CISO of Contrast Security, noted the simplicity and danger of this attack vector. He pointed out that there was no zero-day exploit involved, but rather an "unsanctioned AI tool, an overpermissioned OAuth grant, and a gaming cheat download." Lindner warned that similar activities are likely occurring on employee machines across various organizations right now.

The Risks of OAuth in the AI Era

This incident serves as a stark reminder of the security challenges posed by the rapid adoption of AI tools. Many of these platforms require extensive permissions to function effectively as autonomous agents or knowledge-base assistants. Jeff Pollard, VP and Principal Analyst at Forrester, suggests that as AI tools spread, OAuth will remain a primary target for attackers. This is not necessarily due to flaws in AI itself, but because these tools require broad access to be valuable to the user.

In response to the incident, Vercel published an Indicator of Compromise (IOC) for a specific Google Workspace OAuth application (ID: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com). Google Workspace administrators are strongly advised to check for this ID within their environments and revoke permissions if found.
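As an illustration of that check, the sketch below scans a list of OAuth grant records for the published client ID. It assumes the grants have already been exported from the Workspace admin tooling into dictionaries. The field names `clientId`, `userKey`, and `displayText` and the sample records are assumptions made for this example, so adjust them to match your actual export.

```python
from typing import Iterable

# Client ID published by Vercel as the indicator of compromise.
IOC_CLIENT_ID = "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"


def find_ioc_grants(grants: Iterable[dict], ioc_client_id: str = IOC_CLIENT_ID) -> list:
    """Return the grant records whose client ID matches the IOC.

    Comparison ignores case and surrounding whitespace so that minor
    formatting differences in an export do not hide a match.
    """
    target = ioc_client_id.strip().lower()
    return [
        g for g in grants
        if str(g.get("clientId", "")).strip().lower() == target
    ]


# Hypothetical exported grants for illustration only.
sample_grants = [
    {"userKey": "alice@example.com", "clientId": IOC_CLIENT_ID,
     "displayText": "Example AI tool"},
    {"userKey": "bob@example.com",
     "clientId": "000000000000-example.apps.googleusercontent.com",
     "displayText": "Calendar sync"},
]

for grant in find_ioc_grants(sample_grants):
    print(f"Revoke and investigate: {grant['userKey']} -> {grant['displayText']}")
```

A match means the application was authorized by that user at some point. It does not by itself show that the account was abused, so any hit should lead to revocation followed by a review of the account's activity.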

Forward-Looking Implications

As organizations continue to integrate third-party AI agents into their workflows, the Vercel breach underscores the need for more rigorous auditing of OAuth grants and shadow IT. Reliance on 'sensitive' versus 'non-sensitive' labels for environment variables may also shift, as companies realize that even seemingly benign data can be leveraged by a sophisticated actor for lateral movement.
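One way to picture such an audit is a pass over existing grants that flags any application holding very broad scopes. The sketch below is a rough illustration, not a complete policy. The list of "broad" scopes is a small illustrative selection of Google OAuth scopes, and the record fields (`userKey`, `displayText`, `scopes`) and sample data are assumptions for the example.

```python
# Illustrative selection of scopes that grant wide access; a real audit
# would maintain its own list based on organizational policy.
BROAD_SCOPES = frozenset({
    "https://mail.google.com/",                              # full Gmail access
    "https://www.googleapis.com/auth/drive",                 # full Drive access
    "https://www.googleapis.com/auth/admin.directory.user",  # manage directory users
})


def flag_broad_grants(grants, broad_scopes=BROAD_SCOPES):
    """Return (user, app, risky_scopes) tuples for grants holding any broad scope."""
    flagged = []
    for g in grants:
        risky = sorted(set(g.get("scopes", [])) & set(broad_scopes))
        if risky:
            flagged.append(
                (g.get("userKey", "unknown"), g.get("displayText", "unknown"), risky)
            )
    return flagged


# Hypothetical grant records for illustration only.
sample_audit = [
    {"userKey": "alice@example.com", "displayText": "AI assistant",
     "scopes": ["https://www.googleapis.com/auth/drive", "https://mail.google.com/"]},
    {"userKey": "bob@example.com", "displayText": "Calendar sync",
     "scopes": ["https://www.googleapis.com/auth/calendar.readonly"]},
]

for user, app, scopes in flag_broad_grants(sample_audit):
    print(f"{user} granted {app} broad access: {', '.join(scopes)}")
```

Flagged grants are candidates for review rather than automatic revocation, since some sanctioned tools legitimately need wide access.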

Moving forward, the industry is likely to see a push for more granular permission models for AI integrations and a heightened focus on the personal digital habits of employees with high-level access. The Vercel incident demonstrates that in a world of interconnected SaaS platforms, the security of a major infrastructure provider is only as strong as the most permissive third-party tool used by its workforce.