Ubuntu Charts a Local-First AI Path with Open-Weight Models and Inference Snaps

Canonical plans to begin integrating privacy-focused, local AI features into Ubuntu in 2026, prioritizing open-weight models and user control through Snaps.

A Principled Approach to the AI-Enhanced Desktop

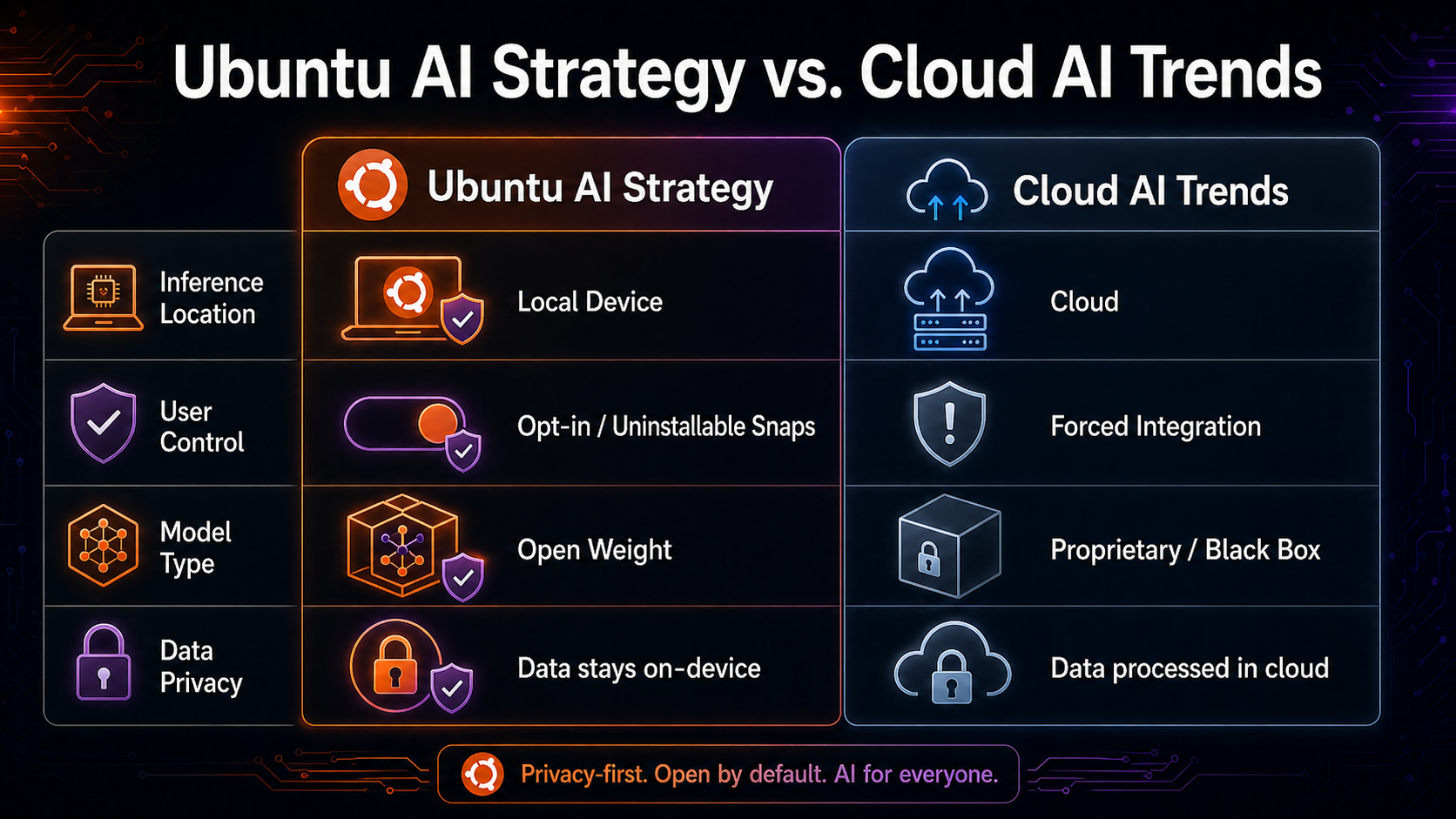

Canonical is preparing to weave artificial intelligence directly into Ubuntu, but with a strategy that departs sharply from the cloud-centric, often intrusive paths taken by other tech giants. Starting in 2026, the widely used Linux distribution will gradually roll out AI features designed around local inference, open-weight models, and strict user sovereignty.

At the heart of this initiative is a commitment to ensuring that AI serves the user rather than the platform provider. Jon Seager, Canonical’s VP of Engineering, explained that the company is ramping up its use of AI tools in a focused and principled manner. According to Seager, the strategy favors open-weight models with license terms that align with open-source values, combined with open-source harnesses to ensure transparency and security.

Implicit vs. Explicit AI: Two Tiers of Integration

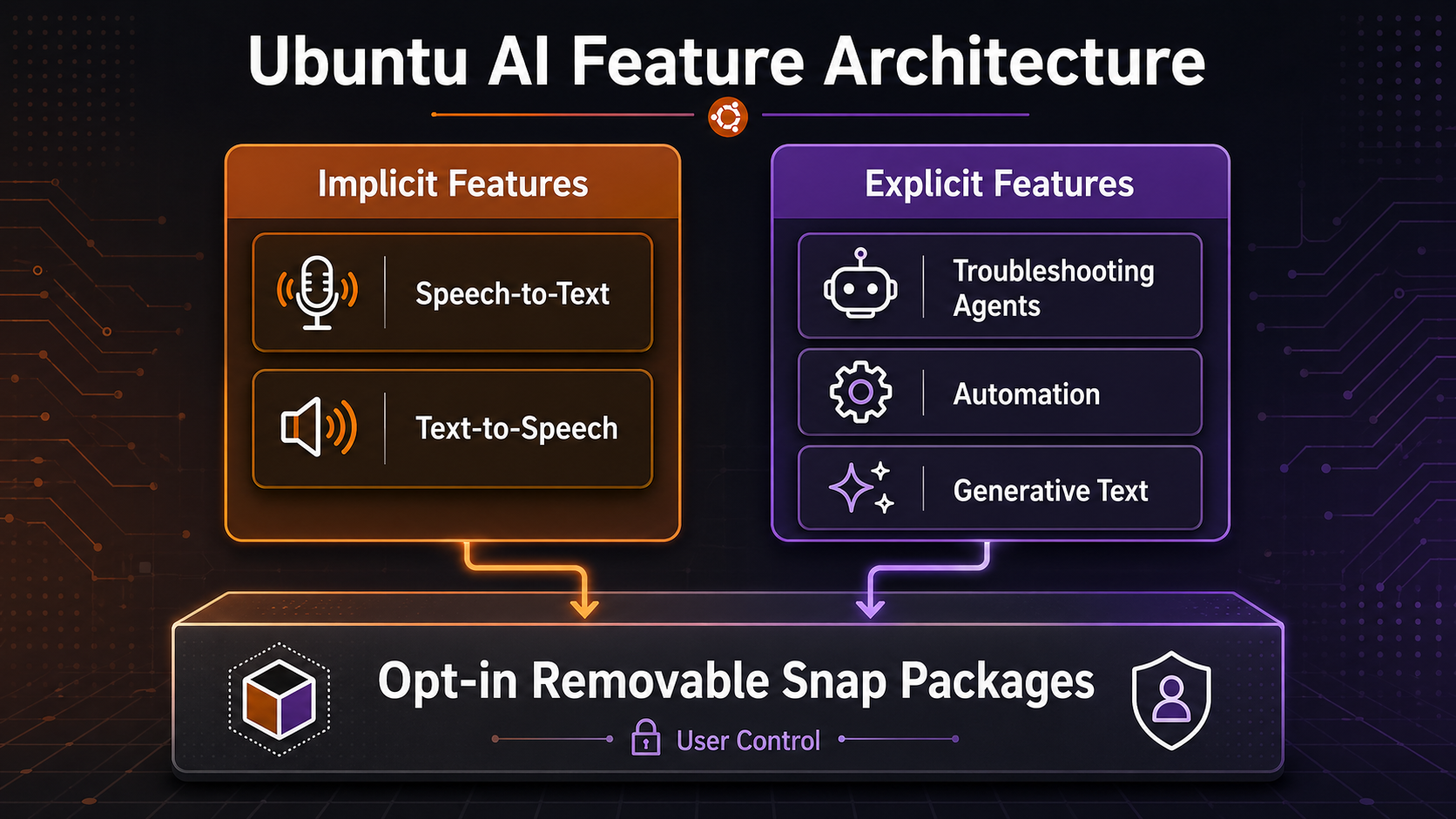

Ubuntu’s AI roadmap sorts new features into two categories: 'implicit' and 'explicit.' Implicit features modernize and enhance existing OS functionality without fundamentally changing how a user interacts with the system. The primary focus here is accessibility. Seager noted that he views these not merely as 'AI features' but as critical accessibility improvements, such as speech-to-text and text-to-speech, that Large Language Models (LLMs) can dramatically enhance with minimal drawbacks.

In contrast, 'explicit' features represent AI-native capabilities. These include agentic workflows designed to assist with complex troubleshooting, automation of repetitive tasks, and generative text tools. The long-term vision is to transform Ubuntu into a "context-aware operating system" that can act as a helpful agent for both developers and general users, making Linux more accessible to newcomers without sacrificing the power that advanced users demand.

The Power of 'Inference Snaps' and Local Execution

To address the performance and privacy concerns inherent in AI, Canonical is leaning heavily on its containerized software packaging system, Snaps. The company is developing 'inference snaps'—optimized packages designed to simplify running high-performance AI models locally. By delivering these models as removable snaps, Ubuntu ensures that users have total control; any AI feature can be completely disabled by simply uninstalling the associated package.
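The install-and-remove model described above can be sketched with the standard `snap` command-line tool. This is a minimal illustration rather than Canonical's own tooling: the snap name `example-inference-snap` is hypothetical, the choice of the `beta` channel is an assumption, and the helper functions are invented for this example. Only the `snap install`, `snap remove`, and `snap list` subcommands themselves are real.

```python
import shutil
import subprocess

# Hypothetical snap name, used only for illustration. The real package
# names are whatever Canonical publishes in the Snap Store.
EXAMPLE_SNAP = "example-inference-snap"


def install_command(snap_name, channel="beta"):
    """Build the command that would install an inference snap.

    The channel is an assumption; adjust it to wherever the snap is published.
    """
    return ["sudo", "snap", "install", snap_name, f"--channel={channel}"]


def remove_command(snap_name):
    """Build the command that would remove the snap and the feature with it."""
    return ["sudo", "snap", "remove", snap_name]


def is_installed(snap_name):
    """Return True if `snap list <name>` reports the snap as installed."""
    if shutil.which("snap") is None:
        # snapd is not available on this machine.
        return False
    result = subprocess.run(
        ["snap", "list", snap_name],
        capture_output=True,
        text=True,
    )
    return result.returncode == 0


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch is safe to try.
    print(" ".join(install_command(EXAMPLE_SNAP)))
    print(" ".join(remove_command(EXAMPLE_SNAP)))
    print("installed:", is_installed(EXAMPLE_SNAP))
```

The script only prints the commands it builds. Running the printed `snap remove` line by hand is the step that would disable the associated feature under the model the article describes.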

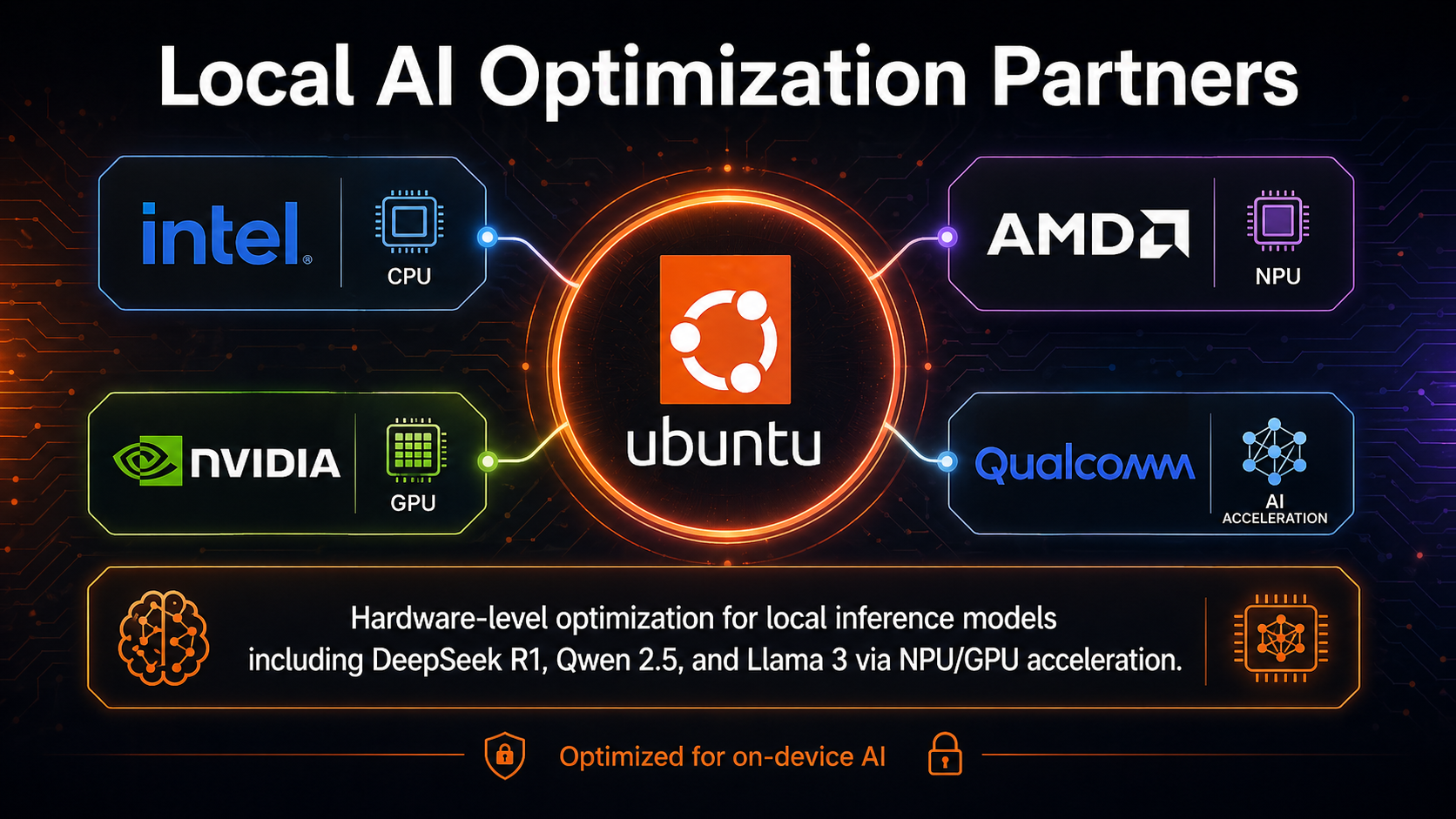

Seager emphasized that the goal of these inference snaps is to provide simplified local access to models that have been specifically tuned for the user's hardware. Initial support is already targeting silicon-optimized models such as DeepSeek R1 and Qwen 2.5 VL. To ensure this works seamlessly across various systems, Canonical has secured partnerships with major silicon manufacturers, including Intel, AMD, NVIDIA, and Qualcomm. These collaborations aim to offload AI tasks to specialized hardware like NPUs and GPUs, reducing the performance penalty often associated with local LLM execution.

Privacy and User Control in an Era of AI Overreach

Canonical’s strategy arrives at a time when users are increasingly wary of AI integration in operating systems. Microsoft’s aggressive push of its Copilot system, and specifically the controversy surrounding the 'Recall' feature, has sparked widespread debate over data privacy and the lack of user opt-outs.

Ubuntu’s approach stands in direct contrast to this trend. By making AI features opt-in and ensuring they run locally rather than in the cloud, Canonical aims to maintain the trust of its core user base, which includes a significant share of the world’s data scientists and machine learning engineers. While Seager insists that Ubuntu is not becoming an AI product itself, he believes the operating system can become stronger through thoughtful, non-intrusive AI integration.

Timeline and Community Engagement

The rollout of these features is already in motion. In October 2025, Canonical announced the beta availability of silicon-optimized inference snaps for DeepSeek and Qwen. A more comprehensive roadmap was detailed by Seager in April 2026 on the Ubuntu community hub, followed quickly by clarifications to address community skepticism regarding privacy and 'forced' AI features.

Looking ahead, the first hands-on previews of these AI-powered features are expected to arrive in Ubuntu 26.10. Between now and then, Canonical’s internal teams are being encouraged to experiment with AI tools to understand their practical value, though the company is pointedly avoiding metrics based on AI-generated code or token usage. This measured, engineer-led approach suggests that while Ubuntu is embracing the AI future, it is doing so without abandoning the open-source foundations that built its reputation.