The Hidden Thirst of Intelligence: Measuring AI’s Growing Environmental Footprint

AI growth drives record energy and water consumption, with data centers projected to consume up to 1,068 billion liters of water annually by 2028.

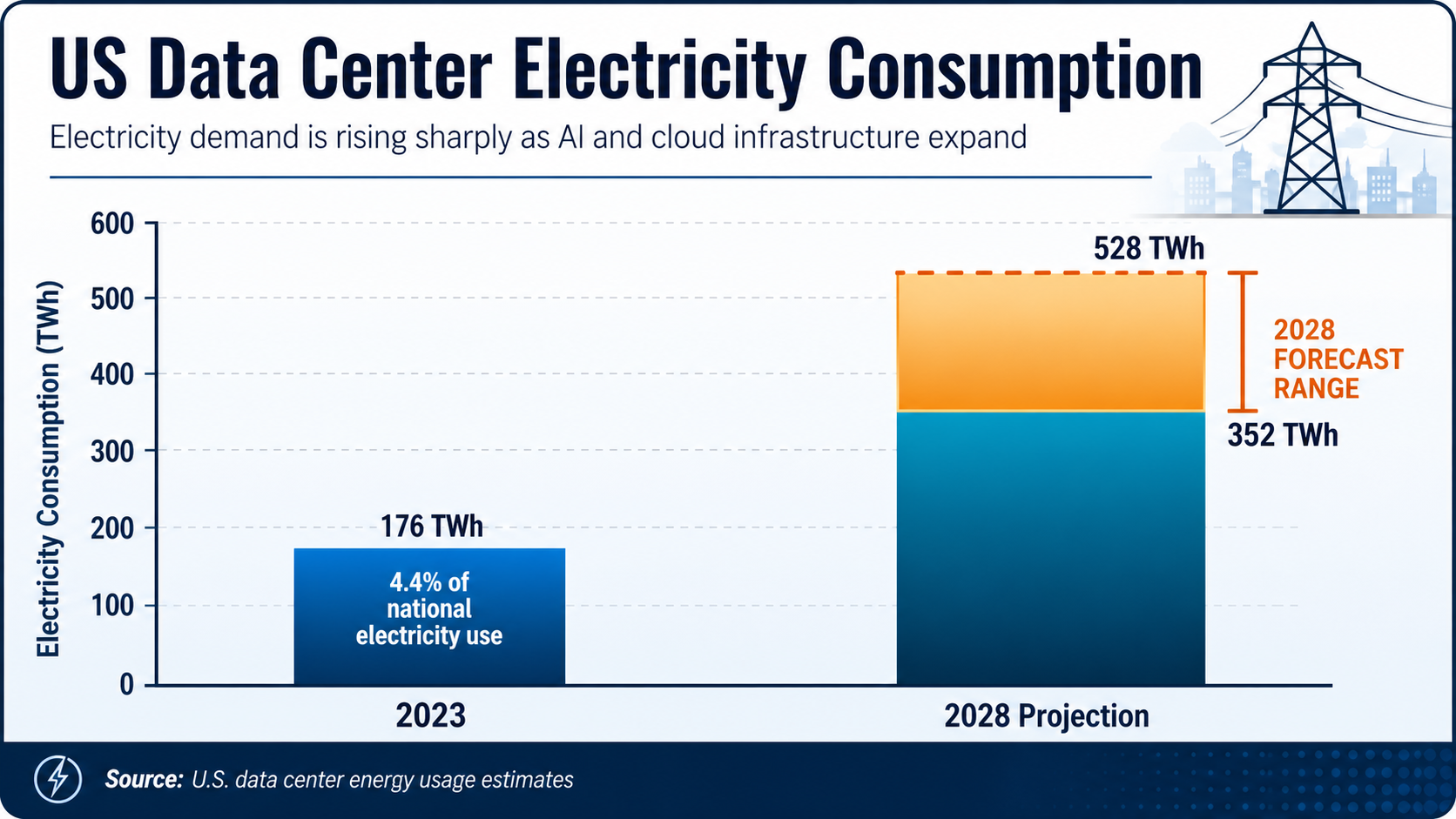

The digital infrastructure powering artificial intelligence has reached a resource-intensive threshold that is straining national power grids and local water supplies. In 2023, data centers across the United States consumed 176 terawatt-hours (TWh) of electricity—representing 4.4% of total national usage. This demand is not slowing; current projections suggest these figures will double or even triple by 2028 as the adoption of generative AI continues to accelerate.
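The arithmetic behind these figures can be made explicit. The short Python sketch below uses only the two numbers quoted above (176 TWh and 4.4%) to back out the implied national total and the range that a doubling or tripling would produce. The function names, and the assumption that the multipliers apply directly to the 2023 baseline, are illustrative and are not taken from the underlying projections.

```python
# Figures quoted in the article for U.S. data centers in 2023.
DATA_CENTER_TWH_2023 = 176.0
SHARE_OF_NATIONAL_USE = 0.044  # 4.4%


def implied_national_total(data_center_twh: float, share: float) -> float:
    """Back out total national consumption from a part and its share."""
    return data_center_twh / share


def projected_range(baseline_twh: float, low: float = 2.0, high: float = 3.0):
    """Scale the baseline by the 'double or triple' multipliers.

    Assumption: the multipliers apply to the 2023 baseline as-is.
    """
    return baseline_twh * low, baseline_twh * high


if __name__ == "__main__":
    total = implied_national_total(DATA_CENTER_TWH_2023, SHARE_OF_NATIONAL_USE)
    low, high = projected_range(DATA_CENTER_TWH_2023)
    print(f"Implied U.S. total in 2023: {total:,.0f} TWh")
    print(f"Data center demand by 2028: {low:,.0f} to {high:,.0f} TWh")
```

On these assumptions, the quoted figures imply a national total of about 4,000 TWh in 2023 and data center demand of roughly 352 to 528 TWh by 2028.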

While the industry often focuses on the computational breakthroughs of large language models (LLMs), the physical cost of these achievements is staggering. Training a single model like GPT-3 is estimated to have required over 1,200 MWh of electricity and approximately 700,000 liters of clean freshwater for cooling. The environmental toll does not end once training is complete, however. Inference, the act of running AI queries for users, is emerging as the primary contributor to the sector's environmental costs, potentially accounting for up to 90% of a model's total lifecycle energy use.

The Thirst of the Machine

Water has become a critical, yet often overlooked, component of the AI boom. Data centers require vast amounts of water to cool high-density server racks that would otherwise overheat. In 2023, Google reported using over 5 billion gallons (roughly 19 billion liters) of water across its global data center operations. Microsoft, another major player in the space, is projected to reach 28 billion liters of annual water consumption by 2030, a threefold increase compared to its 2020 levels.

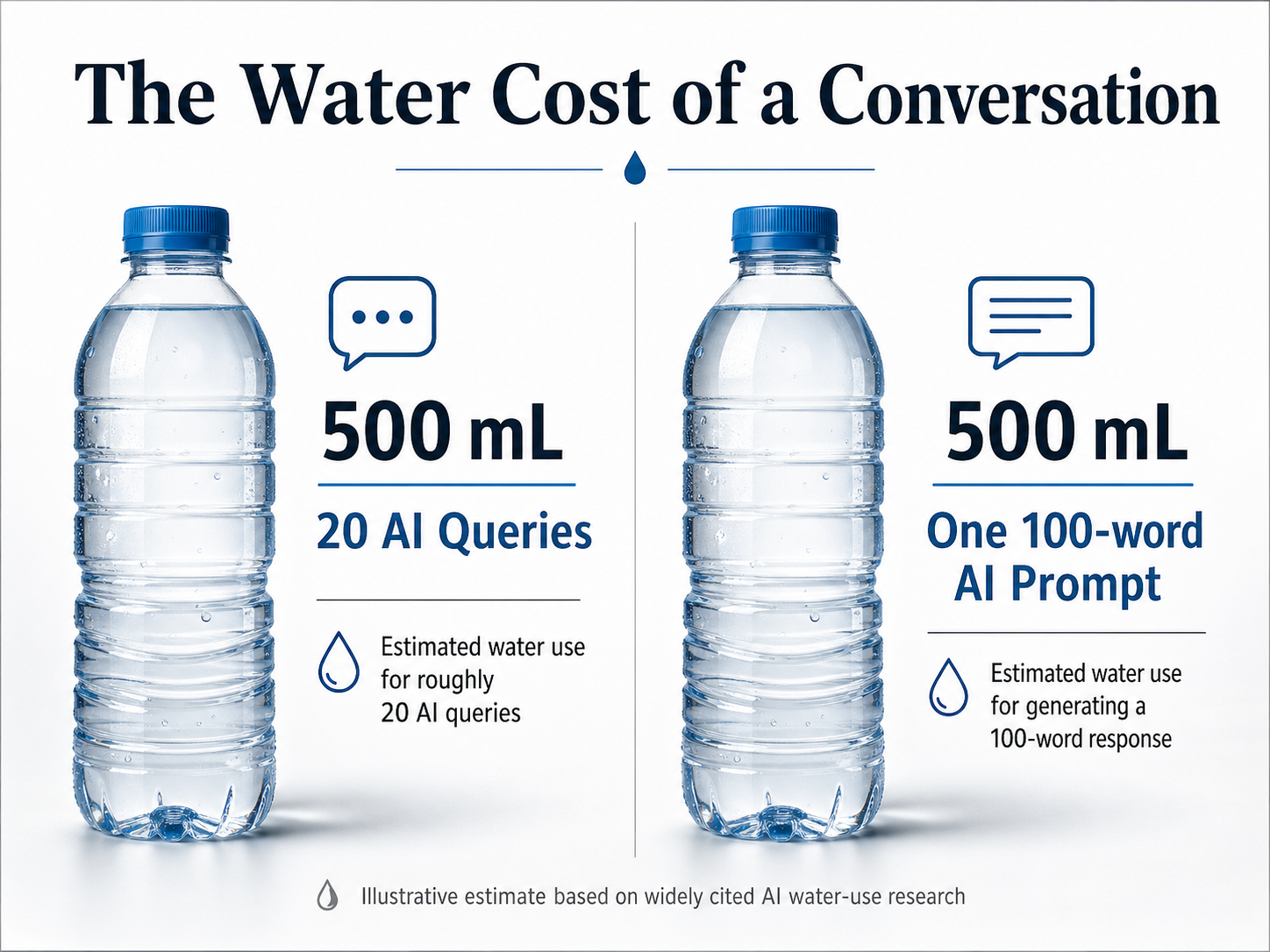

For the end user, this consumption translates into tangible amounts of a precious resource. A study by the University of California, Riverside estimated that a typical 20-query chat session with an AI effectively "consumes" a 500 ml bottle of freshwater, or about 25 ml per query. By a separate estimate, a single 100-word prompt uses roughly 519 milliliters, a far higher per-interaction figure. As Colohan noted in a recent industry report, wherever companies place a data center, "it is like a giant soda straw sucking water out of that basin."

Carbon Emissions and the Grid

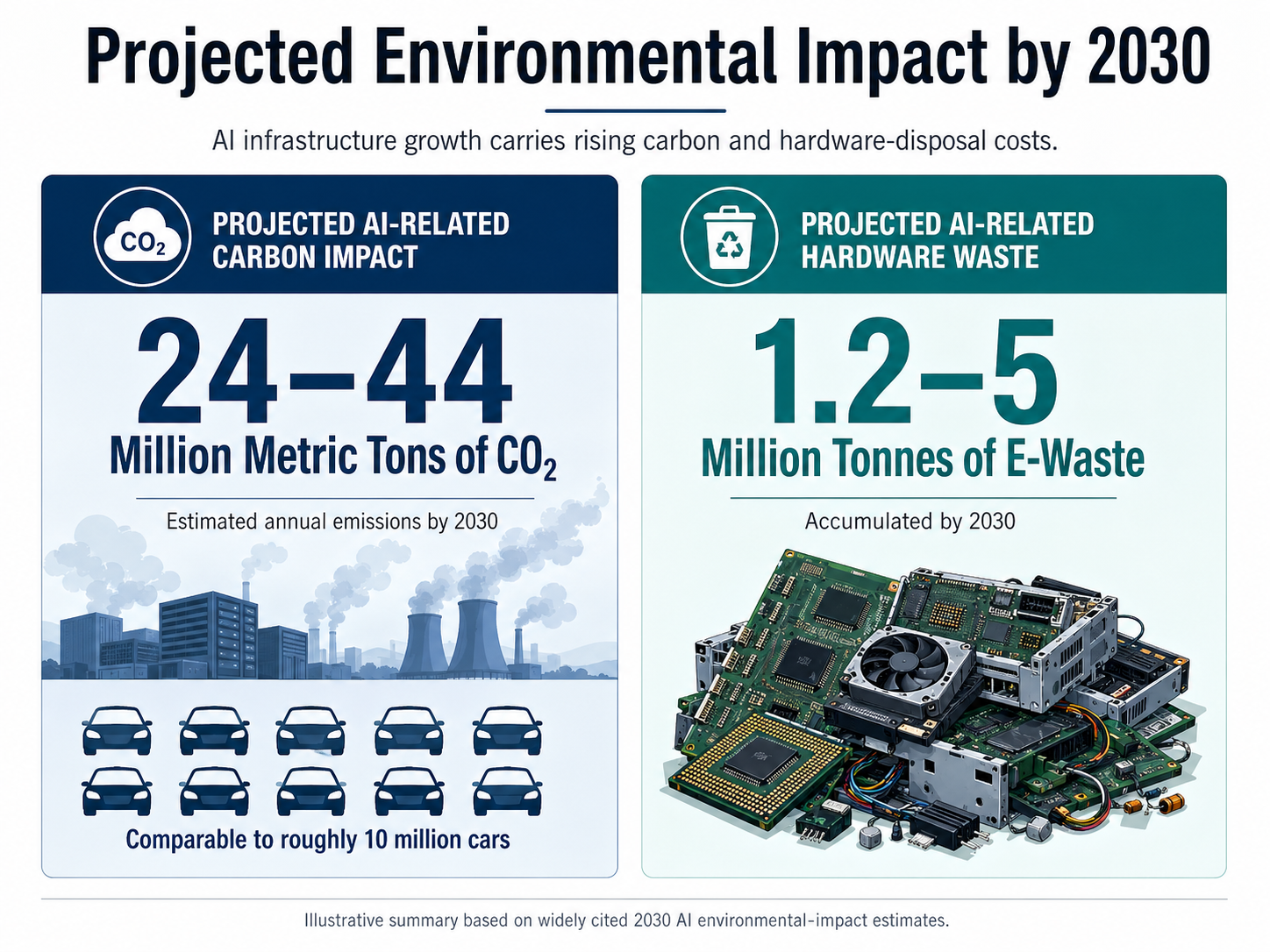

The environmental impact extends beyond water to the atmosphere. By 2030, the current rate of AI growth in the U.S. could add between 24 and 44 million metric tons of carbon dioxide to the atmosphere annually. This is equivalent to adding 5 to 10 million cars to American roadways. The challenge is exacerbated by the location of these facilities. "AI data centers are currently located in areas with grids predominantly powered by fossil fuels, meaning coal- and gas-fired power plants," says Mike Weinstein, Director of Sustainability at Southern New Hampshire University.
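The car comparison can be checked against the emissions range it is paired with. The sketch below divides each end of the quoted CO2 range by the matching end of the quoted car range to see what per-vehicle figure the equivalence implies. Pairing the low values together and the high values together is an assumption about how the comparison was built, and the function name is illustrative.

```python
# Ranges quoted in the article (annual, by 2030).
ADDED_CO2_TONNES = (24e6, 44e6)   # metric tons of CO2 per year
EQUIVALENT_CARS = (5e6, 10e6)     # number of cars


def tonnes_per_car(added_co2_tonnes: float, equivalent_cars: float) -> float:
    """Annual CO2 per car implied by an emissions-to-cars equivalence."""
    return added_co2_tonnes / equivalent_cars


if __name__ == "__main__":
    # Assumption: low end pairs with low end, high end with high end.
    for co2, cars in zip(ADDED_CO2_TONNES, EQUIVALENT_CARS):
        per_car = tonnes_per_car(co2, cars)
        print(f"{co2 / 1e6:.0f} Mt over {cars / 1e6:.0f}M cars "
              f"= {per_car:.1f} t CO2 per car per year")
```

Both ends of the range work out to between 4 and 5 metric tons of CO2 per car per year, so the two sets of figures are consistent with each other.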

There is also the rising tide of electronic waste. Generative AI hardware cycles are notoriously short, and experts estimate the sector could generate between 1.2 and 5 million tonnes of e-waste by 2030. This would represent up to 12% of all projected global e-waste within the next decade.

Accountability and the Efficiency Paradox

Despite the urgency, transparency remains a hurdle. Many tech giants are hesitant to release granular data regarding the resource consumption of specific models. Furthermore, unverified reports from April 2026 suggest that up to 74% of industry claims regarding AI's potential to solve climate issues remain unproven, with few instances of generative AI delivering verifiable emission cuts.

This lack of clarity is compounded by the Jevons paradox: as AI tasks become more efficient and therefore cheaper to run, total usage can grow fast enough to cancel out, or even exceed, the resource savings. Fengqi You, a professor in Energy Systems Engineering at Cornell, explains that while AI is changing every sector, "its rapid growth comes with a real footprint in energy, water and carbon."
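The mechanism is easy to see with a toy calculation. In the sketch below, total consumption is treated as energy per task multiplied by the number of tasks, so the change in total is simply usage growth divided by the efficiency gain. The scenario numbers are hypothetical and chosen only to illustrate the effect; they are not measurements or forecasts.

```python
def relative_total(efficiency_gain: float, usage_growth: float) -> float:
    """Ratio of new total consumption to old total consumption.

    efficiency_gain: factor by which per-task energy falls (2.0 = half the energy).
    usage_growth:    factor by which the number of tasks rises (3.0 = three times as many).
    Simplifying assumption: total = energy per task * number of tasks.
    """
    return usage_growth / efficiency_gain


if __name__ == "__main__":
    # Hypothetical scenarios, all with a 2x efficiency gain.
    for usage_growth in (1.5, 2.0, 3.0):
        ratio = relative_total(efficiency_gain=2.0, usage_growth=usage_growth)
        print(f"usage x{usage_growth}: total consumption x{ratio:.2f}")
```

With a twofold efficiency gain, total consumption falls only if usage grows by less than a factor of two. At twofold growth the savings are exactly cancelled, and at threefold growth the total rises by half despite each task being cheaper.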

Toward a Sustainable Architecture

There are signs that the industry is beginning to pivot toward more sustainable practices. Microsoft has experimented with data center designs targeting zero water consumption for cooling, and Amazon Web Services (AWS) reported achieving 100% renewable electricity matching in 2023. Recent research from UNESCO and UCL suggests that minor adjustments in LLM design could reduce energy consumption by as much as 90% without sacrificing performance.

As the industry matures, the pressure from policymakers and the public to adopt mandatory reporting standards will likely intensify. Golestan Radwan, Chief Digital Officer of the United Nations Environment Programme, emphasizes the stakes: "We need to make sure the net effect of AI on the planet is positive before we deploy the technology at scale." The future of AI may depend not just on its intelligence, but on its ability to exist within the ecological limits of the planet.