Law Firm Admits AI Hallucinations in Major Bankruptcy Case Filing

Sullivan & Cromwell admits to over 40 AI hallucinations in a bankruptcy filing, highlighting the gap between AI policy and legal practice.

The elite Wall Street law firm Sullivan & Cromwell (S&C) has issued a formal apology to the U.S. Bankruptcy Court for the Southern District of New York after identifying more than 40 'hallucinated' citations and fabricated sources in a recent emergency filing. The incident, occurring in the high-profile bankruptcy case of Prince Global Holdings Limited, serves as a stark reminder of the risks inherent in the rapid integration of large language models (LLMs) into the legal profession.

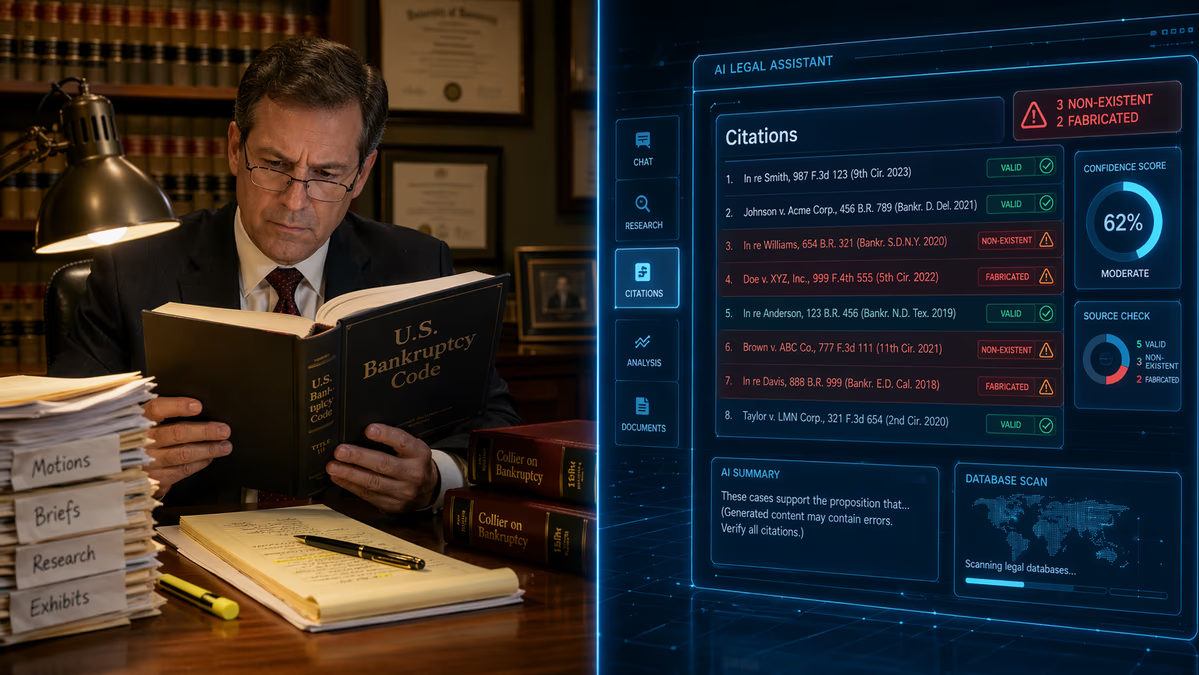

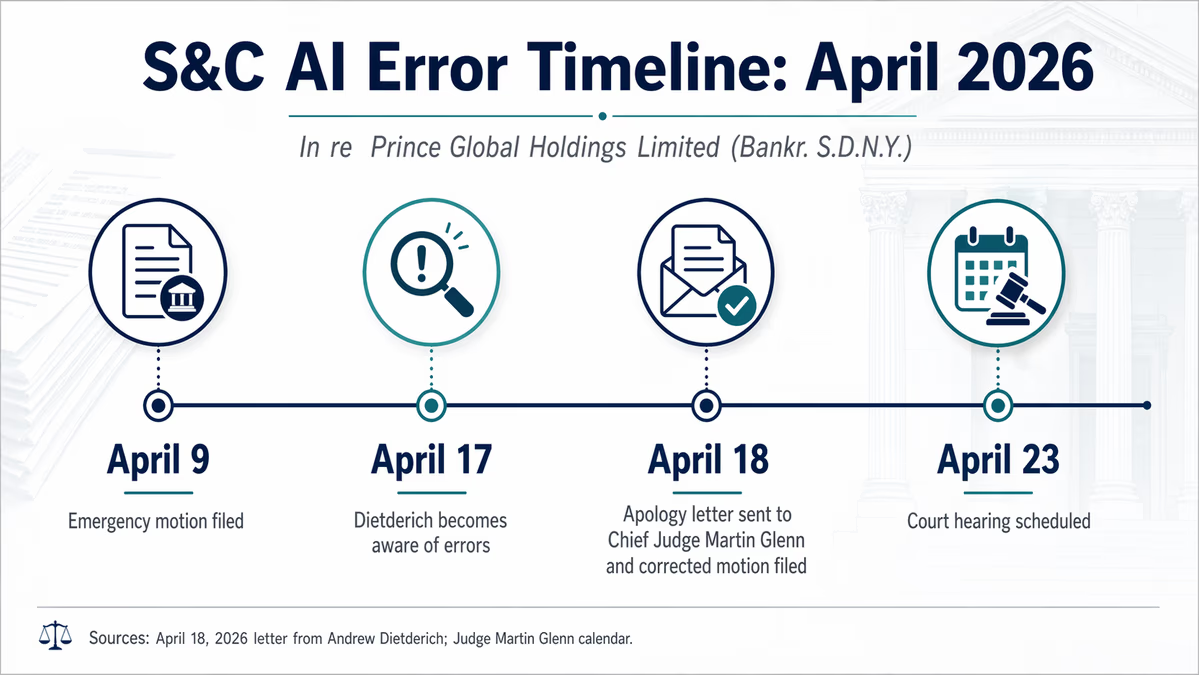

On April 9, 2026, S&C filed an emergency motion that was later discovered to contain approximately 40 to 42 inaccuracies. These errors ranged from inaccurate case citations to misquoted sections of the U.S. Bankruptcy Code. Most critically, the filing included references to legal sources that simply do not exist—a phenomenon commonly referred to in the AI industry as a 'hallucination.'

A Breakdown in Review Protocols

The inaccuracies were not caught by S&C’s internal review but were instead flagged by opposing counsel at Boies Schiller Flexner LLP (BSF). Following the discovery, Andrew G. Dietderich, co-head of S&C's global restructuring group, sent a formal apology letter to Chief Judge Martin Glenn on April 18, 2026.

In the letter, Dietderich was candid about the firm's failure to adhere to its own standards. "The Firm maintains comprehensive policies and training requirements governing the use of AI tools in legal work. These safeguards are designed to prevent exactly this situation," Dietderich stated. He admitted that, in this instance, those policies were not followed during the preparation and submission of the motion, and that the firm's review of the filing failed to catch the problems. "Regrettably, this review process did not identify the inaccurate citations generated by AI, nor did it identify other errors that appear to have resulted in whole or in part from manual error."

Sullivan & Cromwell, which represents the liquidators appointed by British Virgin Islands authorities in proceedings against the Prince Group, subsequently filed a corrected version of the motion. Dietderich also contacted BSF to thank the firm for identifying the errors and to apologize directly to the opposing legal team.

The Irony of the OpenAI Connection

The failure is particularly notable given Sullivan & Cromwell’s stature in the AI industry. The firm serves as a key advisor to OpenAI on AI ethics and the safe deployment of artificial intelligence technologies. This high-profile lapse underscores a growing gap between writing AI governance policies and applying them in the fast-paced environment of elite corporate law.

While lawyers are permitted to use AI tools for research and drafting, they remain bound by Federal Rule of Civil Procedure 11 and, in bankruptcy court, by its counterpart, Federal Rule of Bankruptcy Procedure 9011. These rules require attorneys to conduct an inquiry reasonable under the circumstances to ensure that any paper filed with the court has factual support and is warranted by existing law or by a nonfrivolous argument for changing it. S&C is currently conducting an internal review to determine how the breakdown occurred and to evaluate potential enhancements to its training and review protocols.

A Global Trend of AI Inaccuracy

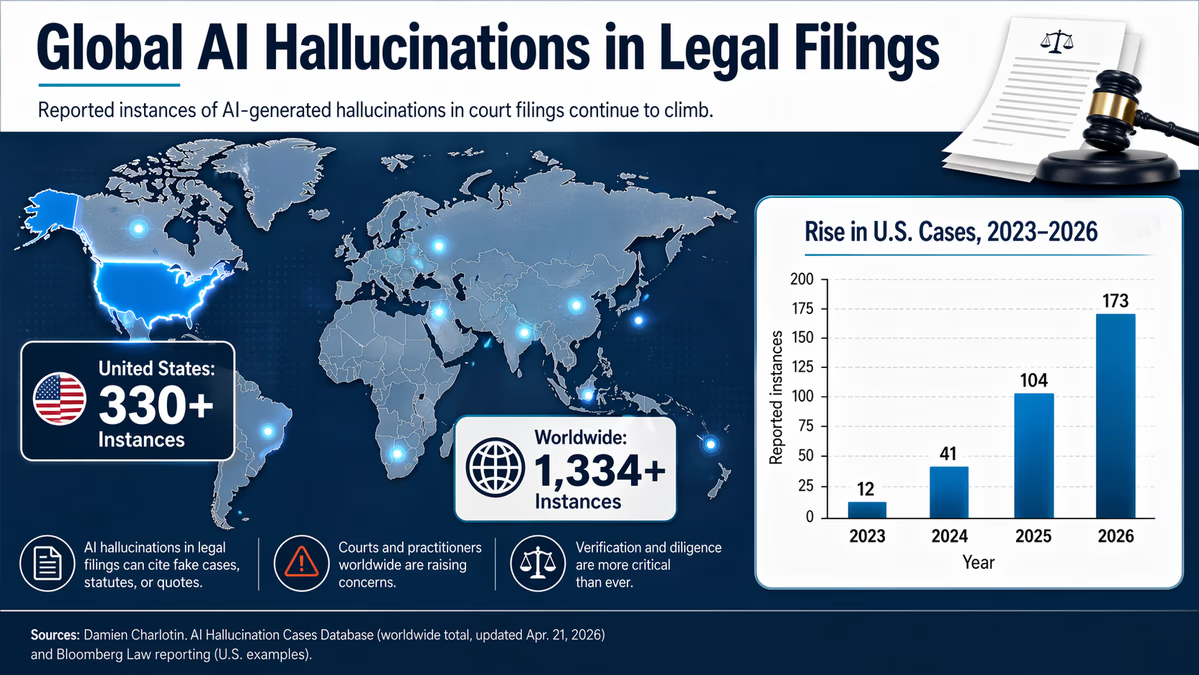

This is far from an isolated incident. A database tracking AI-related legal errors has recorded more than 330 instances of inaccuracies in U.S. courts and over 1,334 worldwide. The legal world first took serious notice of this issue in 2023, when attorney Steven A. Schwartz was sanctioned for filing a legal brief that cited six fake cases generated by ChatGPT. More recently, in March 2026, OpenAI was sued by Nippon Life Insurance Co. of America for allegedly 'practicing law without a license' after its platform reportedly provided faulty legal advice.

The S&C case, however, represents one of the most significant failures involving a top-tier global firm. The irony of BSF flagging the error is also noteworthy, as Boies Schiller Flexner has itself been involved in past AI-related error incidents, demonstrating that no firm is entirely immune to the pitfalls of the technology.

Looking Toward the Future of Legal Tech

The Sullivan & Cromwell incident is expected to trigger sharper scrutiny from both the bench and peers. The recurring nature of these hallucinations raises fundamental questions for AI developers regarding the factual grounding and retrieval capabilities of their models. It suggests that, despite advances in retrieval-augmented generation (RAG) and other verification techniques, the human-in-the-loop requirement remains the most critical, and currently the most vulnerable, component of the process.

For legal professionals, the takeaway is clear: having a policy is insufficient without rigorous enforcement. As courts continue to schedule hearings to address these digital fabrications—such as the one scheduled for April 23 in the Prince Global case—the pressure on firms to prove their AI competence will only intensify. The cost of a hallucination is no longer just a corrected filing; it is a direct hit to the hard-earned reputation of the world's most prestigious legal institutions.