Stanford 2026 AI Index: US-China Performance Parity Reached Amid Surging Costs and Declining Transparency

Stanford's 2026 AI Index reveals US-China performance parity, a $581B investment surge, and critical concerns over transparency and environmental costs.

A New Era of Geopolitical Parity

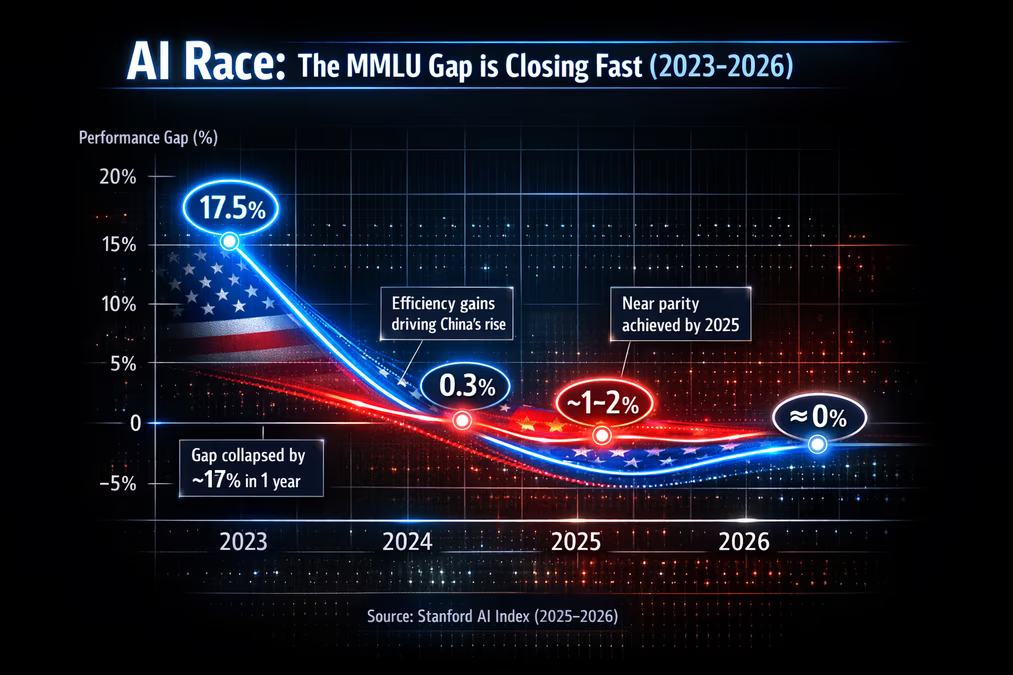

Stanford University’s Ninth Annual AI Index Report, released this April, documents a world where the technological lead once held by the United States over China has effectively vanished on key benchmarks. According to data compiled through early 2026, the performance gap on the Massive Multitask Language Understanding (MMLU) benchmark narrowed from 17.5 percentage points at the end of 2023 to a razor-thin 0.3 points by the close of 2024. As of March 2026, the leading U.S. model holds a marginal 2.7% performance advantage over its top Chinese counterpart.

This convergence suggests a profound shift in the global AI landscape. The U.S. continues to dominate in capital allocation, investing $285.9 billion in private AI ventures in 2025 compared to China’s $12.4 billion, yet Chinese labs have reached near-equal benchmark performance with a fraction of that funding. The report notes that in February 2025, China’s DeepSeek-R1 model briefly matched the top U.S. model in performance, signaling that the era of American technical exceptionalism may be giving way to a multipolar reality.

The Half-Trillion Dollar Bet

Global corporate AI investment reached $581.7 billion in 2025, a 130% increase from the previous year. This capital influx has pushed generative AI adoption to 53% of the population within just three years, a faster pace than either the personal computer or the internet achieved. In the United States alone, the estimated consumer value of these tools reached $172 billion annually by early 2026.

However, this growth is increasingly concentrated in the private sector. Over 90% of notable AI models now originate from private companies rather than academic institutions. This shift has led to a documented "transparency crisis." The average score on the Foundation Model Transparency Index plummeted from 58 in 2024 to just 40 in 2025. Furthermore, 80 out of 95 notable models released in the past year were published without their training code, leaving researchers and regulators in the dark regarding the datasets and methodologies powering the world’s most influential systems.

The Environmental and Human Cost

As AI models reach human-level performance on PhD-level science questions and multimodal reasoning, the physical resources required to sustain them have become a central concern. The report estimates that training xAI’s Grok 4 model emitted 72,816 tons of CO2 equivalent. Perhaps more alarming is the water consumption: annual inference for GPT-4o may now exceed the drinking water needs of 12 million people. To support this scale, global AI data center power capacity has surged to 29.6 GW.

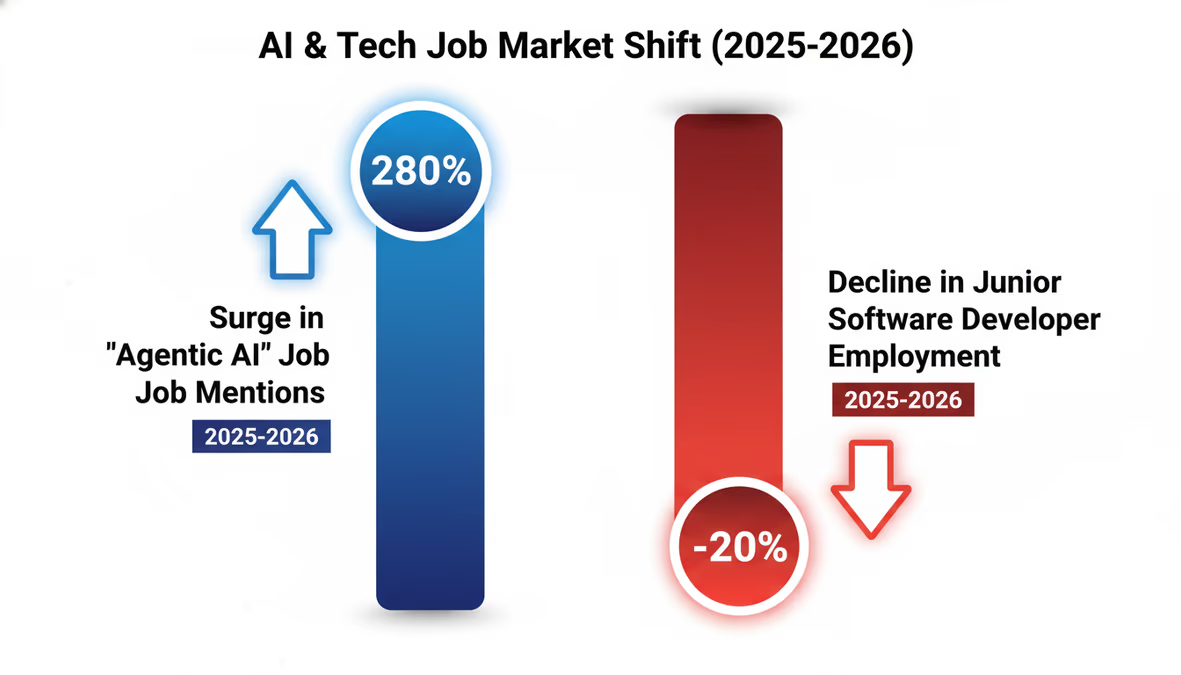

These environmental pressures are mirrored by disruptions in the labor market. Employment for software developers aged 22-25 has decreased by nearly 20% since 2024, suggesting that entry-level coding roles are being heavily impacted by automation. Conversely, the demand for high-level AI expertise is exploding. AI skills are now mentioned in 2.5% of all U.S. job postings, and mentions of "Agentic AI" skills—referring to autonomous AI agents—surged by over 280% in a single year. These agents are becoming increasingly viable; their success rate in handling complex, real-world tasks jumped from 20% in 2025 to 77.3% by early 2026.

A Crisis of Trust and Talent

The report highlights a growing disconnect between technological advancement and public sentiment. While global optimism regarding AI benefits sits at 59%, nervousness has climbed to 52%. Trust in governance is especially low in the United States: only 31% of U.S. citizens trust their government to regulate AI effectively, compared to 53% in the European Union.

Compounding these domestic challenges is a significant talent drain. The number of AI scholars relocating to the United States has fallen by 89% since 2017, including an 80% drop in the last year alone. This suggests that the U.S. may be losing its status as the primary destination for the world’s brightest AI minds. At the same time, its structural dependency on a single chip manufacturer, TSMC, introduces systemic risks to the entire ecosystem.

Looking Ahead: The Age of the Agent

As 2026 progresses, the Stanford HAI report suggests that the industry's focus is shifting from static chatbots to "Agentic AI": systems capable of independent action and complex problem-solving. With 70% of organizations already using generative AI in at least one business function, the integration of these autonomous agents into the global economy appears inevitable.

However, the report serves as a stark warning: the speed of AI development is currently outstripping our ability to monitor, regulate, and sustain it. Whether society can bridge the gap between these accelerating capabilities and our lagging governance frameworks will likely be the defining challenge of the next decade.