RecursiveMAS: Transforming Multi-Agent Collaboration via Latent-Space Computation

Researchers introduce RecursiveMAS, a latent-space framework that cuts multi-agent token usage by up to 75.6% while improving accuracy and inference speed.

Researchers from the University of Illinois Urbana-Champaign (UIUC) and Stanford University have introduced a multi-agent framework that shifts AI collaboration from text-based dialogue to recursive computation in a unified latent space. Published on arXiv on April 28, 2026, the paper, titled 'Recursive Multi-Agent Systems' (RecursiveMAS), addresses the inefficiency and high cost of traditional multi-agent systems (MAS) that rely on token-heavy, natural language communication.

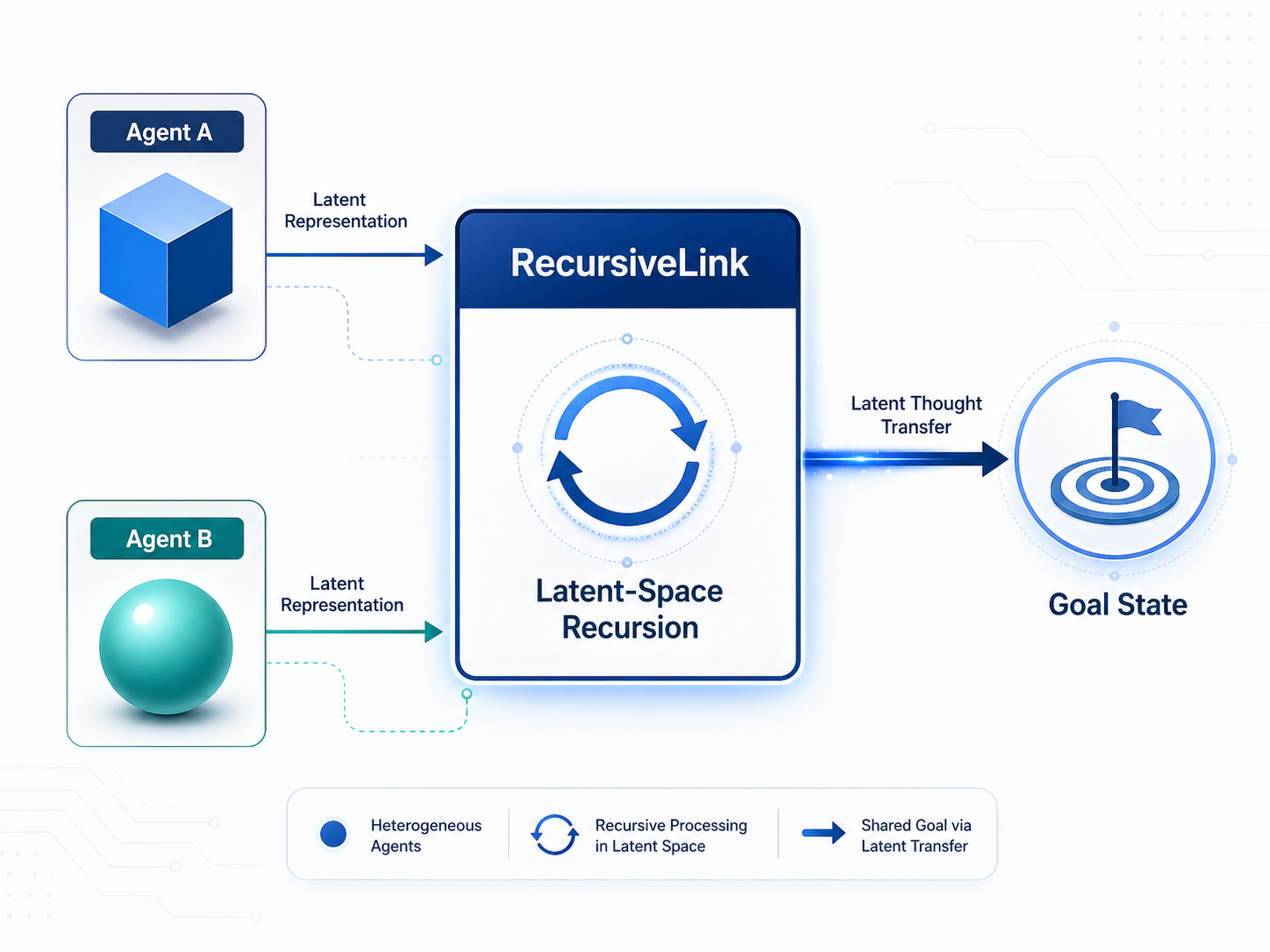

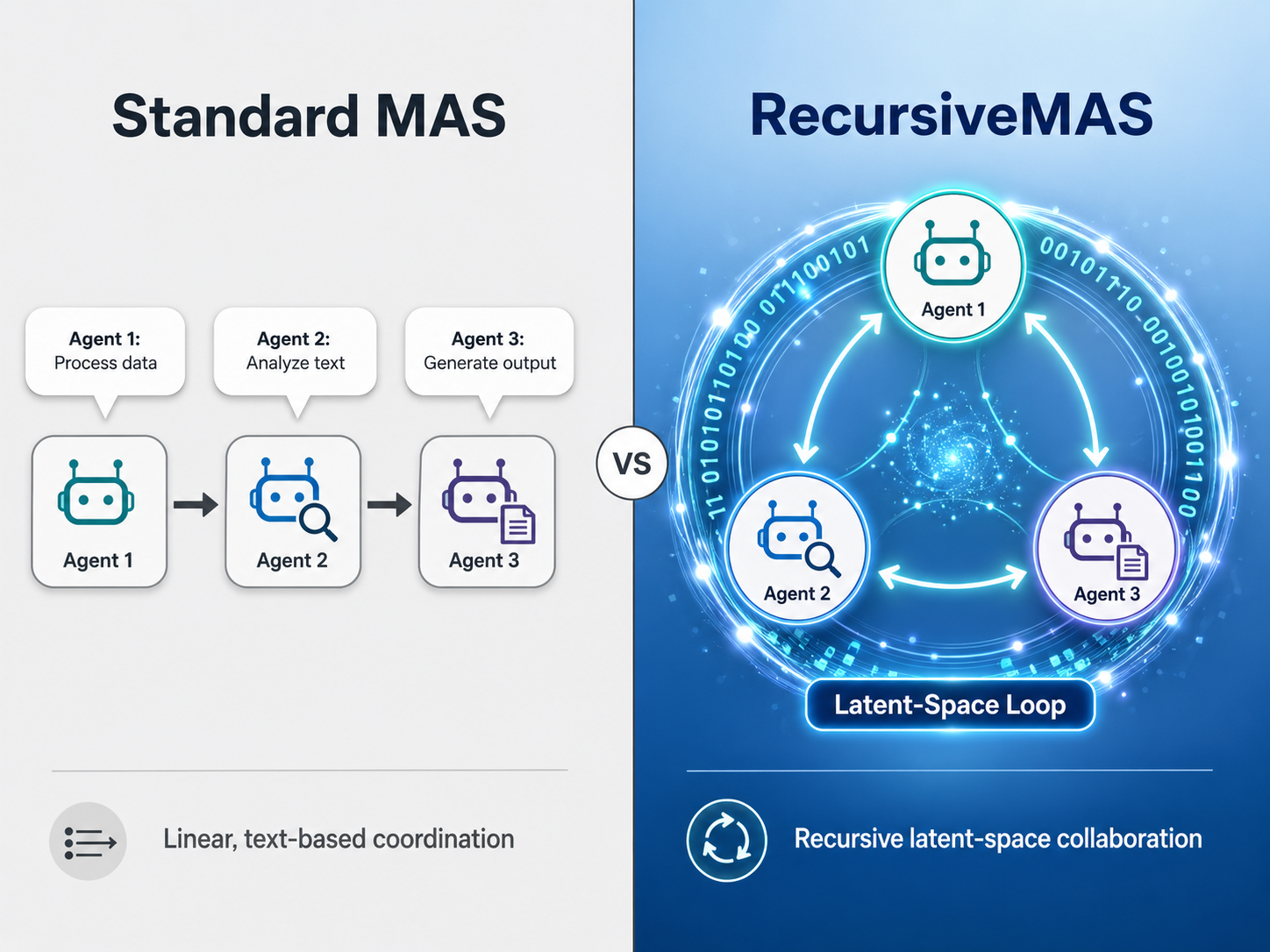

RecursiveMAS departs from the prevailing approach, in which individual agents pass text back and forth to reach a conclusion. Instead, the new architecture treats the entire collection of agents as a single recursive computation loop. Using a lightweight 'RecursiveLink' module, the system enables heterogeneous agents (models with different architectures or roles) to generate and transfer 'latent thoughts' directly. Agents thus share context and intent through high-dimensional vectors rather than discrete words, an approach intended to deepen the reasoning capabilities of the collective.
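To make the idea of passing vectors instead of text more concrete, here is a minimal sketch in plain Python. It is not the paper's RecursiveLink: `make_link`, `apply_link`, `toy_agent_step`, and `run_recursion` are hypothetical names, the "link" is assumed to be a fixed random linear map, and the "agents" are toy update rules. It only illustrates how two agents with different hidden sizes could exchange latent vectors inside one loop.

```python
import math
import random


def make_link(d_in, d_out, seed):
    """Hypothetical stand-in for a link module: a fixed random linear map d_in -> d_out."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(d_in)
    return [[rng.gauss(0.0, scale) for _ in range(d_in)] for _ in range(d_out)]


def apply_link(weights, vec):
    """Project a latent vector into the receiving agent's hidden size."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weights]


def toy_agent_step(state, incoming):
    """Toy agent update (an assumption): blend own state with the incoming latent, then squash."""
    return [math.tanh(0.5 * s + 0.5 * m) for s, m in zip(state, incoming)]


def run_recursion(rounds, d_a=4, d_b=6):
    """Run two toy agents with different hidden sizes in one recursive loop."""
    link_ab = make_link(d_a, d_b, seed=1)  # agent A -> agent B
    link_ba = make_link(d_b, d_a, seed=2)  # agent B -> agent A
    state_a = [0.1] * d_a
    state_b = [0.0] * d_b
    for _ in range(rounds):
        # No text is produced between agents; only vectors cross the links.
        state_b = toy_agent_step(state_b, apply_link(link_ab, state_a))
        state_a = toy_agent_step(state_a, apply_link(link_ba, state_b))
    return state_a, state_b


state_a, state_b = run_recursion(rounds=3)
print(len(state_a), len(state_b))  # prints: 4 6
```

Each agent keeps its own hidden size throughout, and the links are the only place where one representation is converted into the other.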

Solving the Efficiency Crisis in Agentic AI

The research arrives at a time when 'agentic AI' is becoming the industry's primary focus. Tom Coshow, Senior Director Analyst at Gartner, defines these systems as those that can plan autonomously and take actions to meet goals. Coshow notes that agents are smarter and more proactive, capable of recognizing patterns in user behavior and offering suggestions across applications. However, as these systems become more complex, the cost and latency of multi-agent interactions have historically scaled poorly.

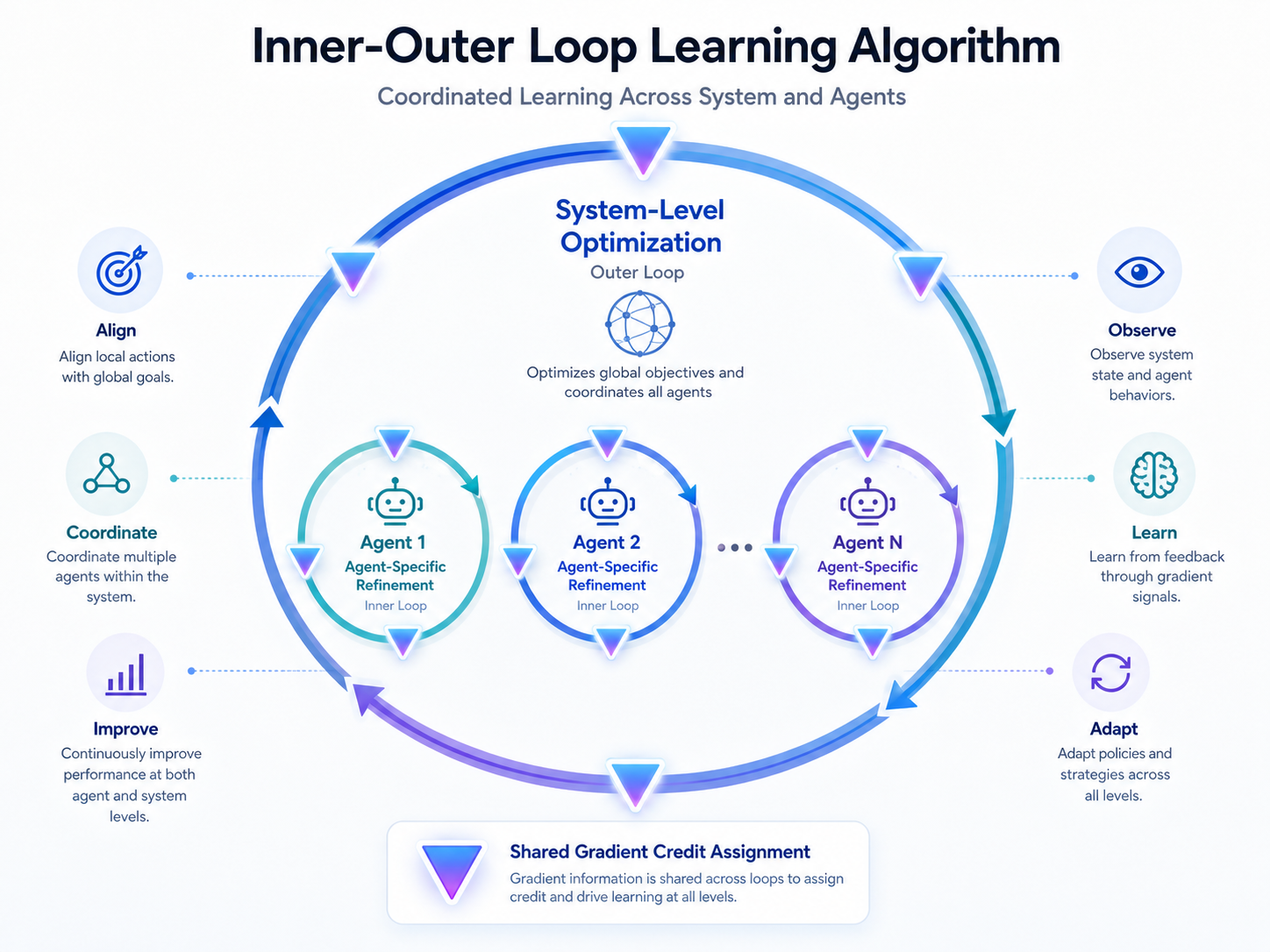

RecursiveMAS tackles these bottlenecks through a specialized inner-outer loop learning algorithm. This algorithm enables whole-system co-optimization by assigning credit across multiple recursion rounds using shared gradients. According to the research team, led by Xiyuan Yang and James Zou, this ensures that the system maintains stable gradients during training, preventing the performance degradation often seen in deeply recursive models.
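The article does not spell out the inner-outer loop algorithm, so the following is only a toy analogy, not the authors' method. It uses ordinary backpropagation through an unrolled recursion with one shared scalar parameter `w`: the inner loop is the recursion itself, the outer loop is gradient descent, and the gradient for `w` is the sum of each round's contribution. All names and constants (`forward`, `loss_and_grad`, `train`, the learning rate, the target) are assumptions chosen for illustration.

```python
import math


def forward(w, x, rounds):
    """Inner loop: unroll h_{t+1} = tanh(w * h_t + x) from h_0 = 0; return all states."""
    states = [0.0]
    for _ in range(rounds):
        states.append(math.tanh(w * states[-1] + x))
    return states


def loss_and_grad(w, x, target, rounds):
    """Squared-error loss on the final state and its gradient w.r.t. the shared w."""
    states = forward(w, x, rounds)
    loss = 0.5 * (states[-1] - target) ** 2
    grad_w = 0.0
    d_state = states[-1] - target  # dLoss/dh_T
    for t in range(rounds - 1, -1, -1):
        d_pre = d_state * (1.0 - states[t + 1] ** 2)  # back through tanh
        grad_w += d_pre * states[t]                   # this round's share of the credit
        d_state = d_pre * w                           # pass the gradient to the earlier round
    return loss, grad_w


def train(x=0.5, target=0.8, rounds=3, steps=200, lr=0.5):
    """Outer loop: plain gradient descent on the shared parameter w."""
    w = 0.1
    history = []
    for _ in range(steps):
        loss, grad_w = loss_and_grad(w, x, target, rounds)
        history.append(loss)
        w -= lr * grad_w
    return w, history


w, history = train()
print(f"first loss {history[0]:.4f}, last loss {history[-1]:.6f}, w = {w:.3f}")
```

Because the same `w` is reused in every round, a single update reflects how that parameter affected the result at each recursion depth, which is the intuition behind sharing gradients across rounds.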

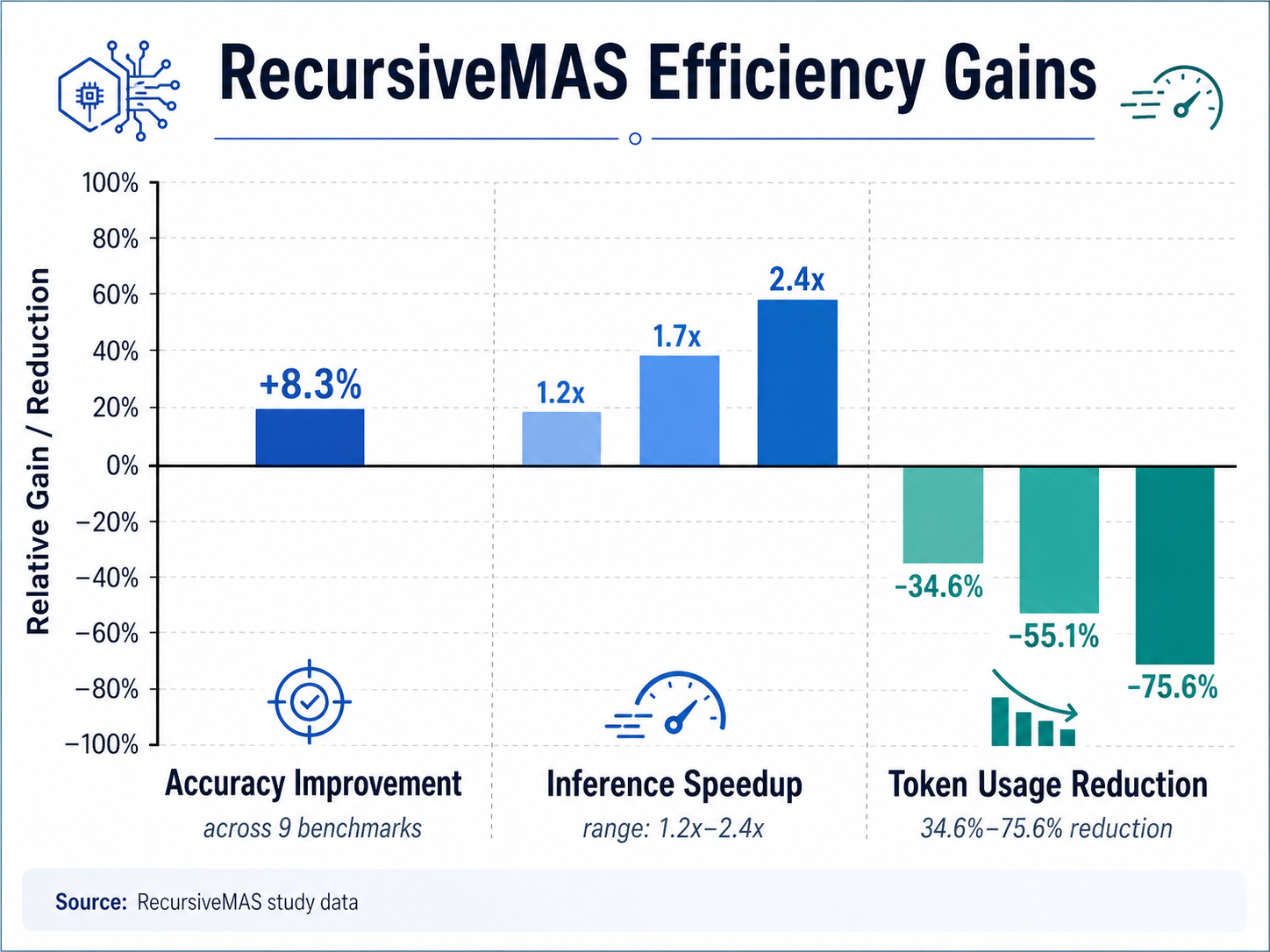

Empirical evaluations across nine benchmarks spanning mathematics, medicine, science, search, and code generation showed that RecursiveMAS improves accuracy by 8.3% on average. The framework also achieved a 1.2x to 2.4x end-to-end inference speedup. For enterprises concerned with operational costs, the most striking finding was a 34.6% to 75.6% reduction in token usage compared to advanced single-agent and multi-agent baselines such as AutoGen and MoA.
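To put the reported ranges in perspective, the short calculation below applies them to a made-up workload. The baseline of 10,000 tokens and 60 seconds per task is purely hypothetical and does not come from the paper; only the percentages and speedup factors are taken from the results above.

```python
def tokens_after_reduction(baseline_tokens, reduction_pct):
    """Tokens remaining after a percentage reduction."""
    return baseline_tokens * (1.0 - reduction_pct / 100.0)


def latency_after_speedup(baseline_seconds, speedup):
    """Latency after an N-times end-to-end speedup."""
    return baseline_seconds / speedup


BASELINE_TOKENS = 10_000   # hypothetical per-task token budget
BASELINE_SECONDS = 60.0    # hypothetical per-task latency

for pct in (34.6, 75.6):   # reported range of token reduction
    remaining = tokens_after_reduction(BASELINE_TOKENS, pct)
    print(f"{pct}% reduction: about {remaining:.0f} tokens per task")

for factor in (1.2, 2.4):  # reported range of speedup
    seconds = latency_after_speedup(BASELINE_SECONDS, factor)
    print(f"{factor}x speedup: about {seconds:.1f} s per task")
```

Under these assumed baselines, a task would use roughly 6,540 to 2,440 tokens instead of 10,000 and finish in roughly 50 to 25 seconds instead of 60.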

The Shift Toward Latent Reasoning

The publication builds on a sequence of advancements in recursive AI. While 2025 saw the rise of 'looped' language models like LoopLM, which refined computations within a single model, RecursiveMAS extends this logic to the system level. This transition addresses the 'dispersed decision-making' problem, where context is often lost or diluted when passed between agents in a standard text-based chain.

Industry interest in such architectures appears to be high. According to unverified reports, major tech players are currently testing similar latent-transfer protocols to move beyond the constraints of text-only APIs. The goal is to create more cohesive AI collectives that can tackle real-world problems, such as real-time medical diagnostics or complex software engineering, without the lag and reliability issues that currently plague multi-step reasoning tasks.

Impact and Industry Adoption

For the broader AI ecosystem, the implications are profound. By reducing the reliance on natural language for internal agent communication, developers can build more scalable solutions that are less prone to the 'hallucination drift' that occurs during long-form text exchanges between agents. The ability to generalize to various collaboration patterns suggests that RecursiveMAS could become a foundational architecture for future autonomous systems.

Emmanuel Apetsi, CEO of SISU AI, views this evolution as a civilizational shift. He describes the future as agentic, urging the industry to embrace the rise of AI agents to catapult humanity into an era of unprecedented cognitive augmentation.

With the framework's code and data made publicly available by the research team, the developer community can now begin integrating recursive latent links into existing workflows. The move toward latent-space recursion may well be the catalyst that transforms AI from a collection of helpful tools into a truly seamless, autonomous cognitive layer. Challenges remain, particularly in ensuring reliability in critical applications and managing the complexity of gradient-based credit assignment in massive systems, but RecursiveMAS offers a mathematically grounded path forward.