OpenAI Releases Specialized GPT-5.4-Cyber Model, Expands Access for Verified Defenders

OpenAI launches GPT-5.4-Cyber and expands its Trusted Access for Cyber program, providing verified defenders with advanced binary reverse engineering tools.

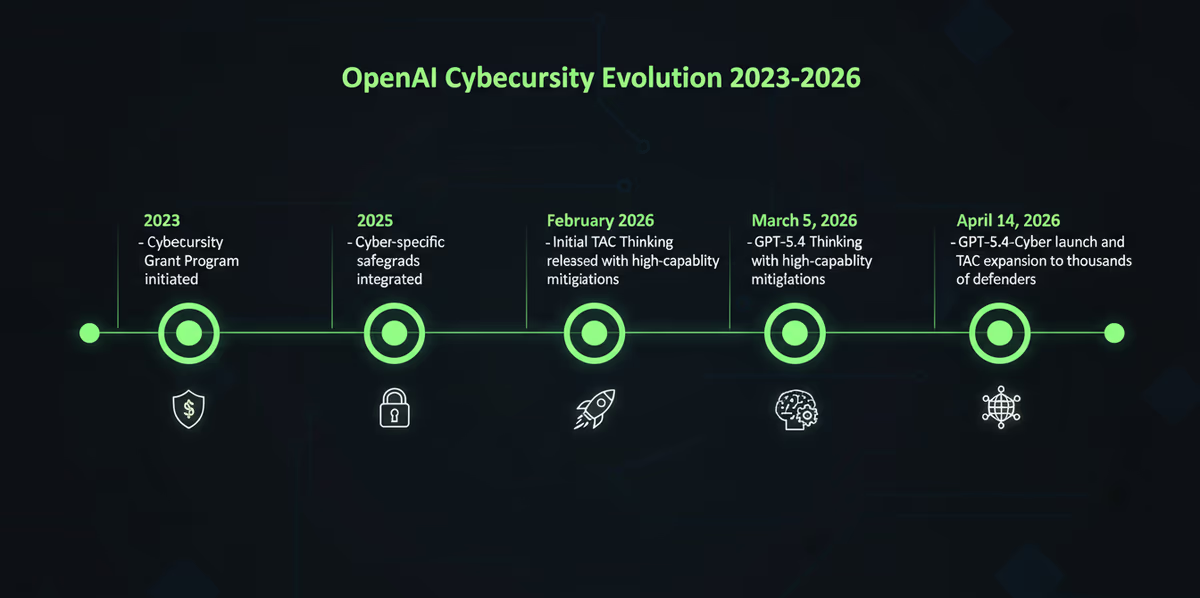

On April 14, 2026, OpenAI introduced GPT-5.4-Cyber, a specialized variant of its flagship large language model built for defensive cybersecurity operations. The release marks a pivot in the company’s deployment strategy, from general-purpose safeguards toward a verification-based approach that gives vetted security professionals access to the model’s most powerful analytical capabilities.

GPT-5.4-Cyber is fine-tuned for high-intensity defensive tasks, including vulnerability analysis and binary reverse engineering. Unlike standard iterations of GPT-5.4, this version is designed to analyze compiled software to assess malware potential and security robustness without requiring access to the original source code. To facilitate these advanced workflows, OpenAI has intentionally lowered the refusal boundary for legitimate cybersecurity activities, allowing the model to engage with code and scenarios that would typically trigger safety filters in consumer-facing models.

Scaling the Defensive Front

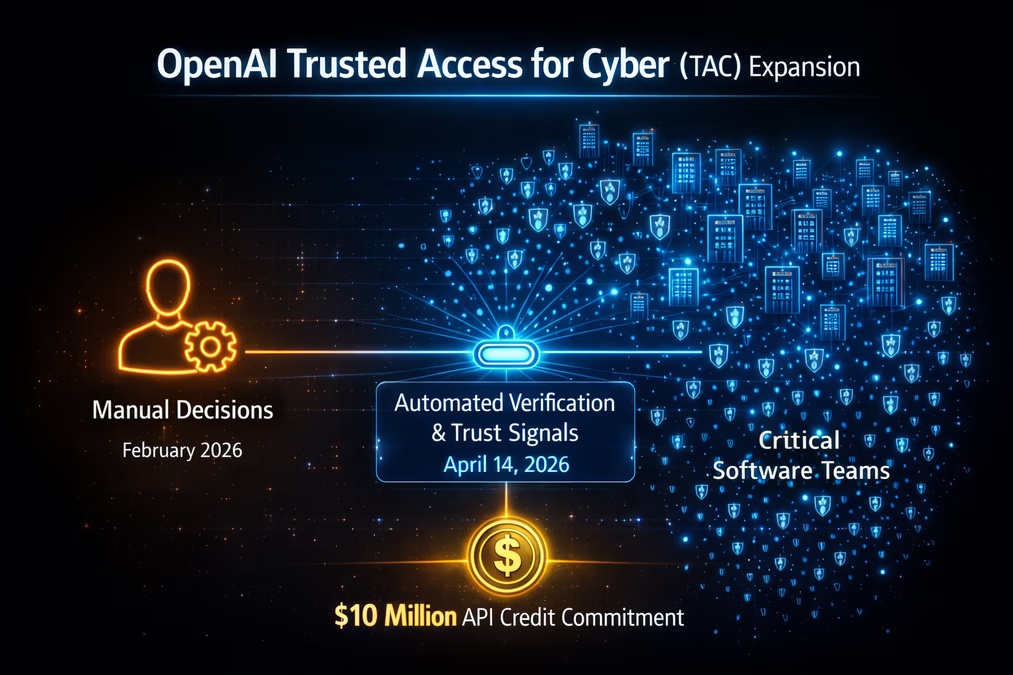

Accompanying the model launch is a significant expansion of OpenAI’s Trusted Access for Cyber (TAC) program. First launched in February 2026, the TAC program has been restructured into a tiered system to accommodate a broader range of users. OpenAI aims to scale access to thousands of verified individual defenders and hundreds of enterprise teams responsible for protecting critical digital infrastructure.

Verification is handled through a new portal at chatgpt.com/cyber, where individual users can confirm their identity. Enterprise clients are encouraged to coordinate through existing OpenAI representatives. This move toward automated and signal-based verification represents a shift in philosophy for the AI giant.

"To enable responsible use at scale, we need systems that can validate trustworthy users and use cases in more automated and more objective ways," OpenAI stated regarding the expansion. "This allows us to expand access based on evidence and real signals of trust, rather than relying on manual decisions. We don't think it's practical or appropriate to centrally decide who gets to defend themselves."

To further catalyze the adoption of AI in defense, OpenAI has committed $10 million in API credits to support cybersecurity initiatives. This investment follows years of foundational work, including the 2023 Cybersecurity Grant Program and the integration of cyber-specific safety training in models ranging from GPT-5.2 to the recently released GPT-5.4 Thinking.

The Competitive Landscape of AI Defense

The launch of GPT-5.4-Cyber comes exactly one week after rival lab Anthropic announced its own defensive initiative, "Project Glasswing," and the associated Claude Mythos model. While both companies are racing to provide security tools, their strategies differ significantly. Anthropic has maintained a more restricted release model, whereas OpenAI is betting on a democratized, tiered access strategy to empower a wider pool of verified defenders.

This divergence highlights the ongoing debate over the "dual-use" nature of AI. The same capabilities that allow GPT-5.4-Cyber to reverse-engineer malware in order to build a defense could, in theory, help attackers find and exploit vulnerabilities. OpenAI’s Preparedness Framework, which classifies GPT-5.3-Codex and GPT-5.4 as having "high" cyber capability, is the primary mechanism for managing these risks. GPT-5.4 Thinking, released in March, was the first general-purpose model to implement mitigations specifically for these high-level capabilities.

OpenAI acknowledges that the threat landscape is already shifting. "As model capabilities increase, defenses need to scale alongside them," the company noted. "We've seen steady improvements in agentic coding, which have direct implications for cybersecurity and we've adapted our approach in step."

Implications for the Future of Security

The introduction of specialized models like GPT-5.4-Cyber suggests a future where AI is not just an assistant for writing code, but a primary actor in maintaining software integrity. By enabling security professionals to analyze compiled binaries for vulnerabilities without source code, OpenAI is providing a tool that could significantly shorten the time between the discovery of a threat and the deployment of a patch.

However, the success of this initiative relies heavily on the robustness of the TAC verification system. As AI models become more capable of identifying flaws, the risk of these tools being co-opted for offensive purposes remains a central concern for policymakers and industry leaders alike. Furthermore, as AI becomes integrated into the defensive stack, the models themselves represent a new attack surface, necessitating a new generation of security frameworks to protect the AI that protects the network.

For now, OpenAI’s message is clear: in an era of AI-driven threats, the only viable path forward is to arm the defenders with even more powerful AI.