The Silicon Flood: One-Third of New Websites Now Driven by Artificial Intelligence

A major study by Stanford and Imperial College London finds that 35% of new websites are AI-generated, signaling a massive digital transformation.

The Rapid Transformation of the Digital Landscape

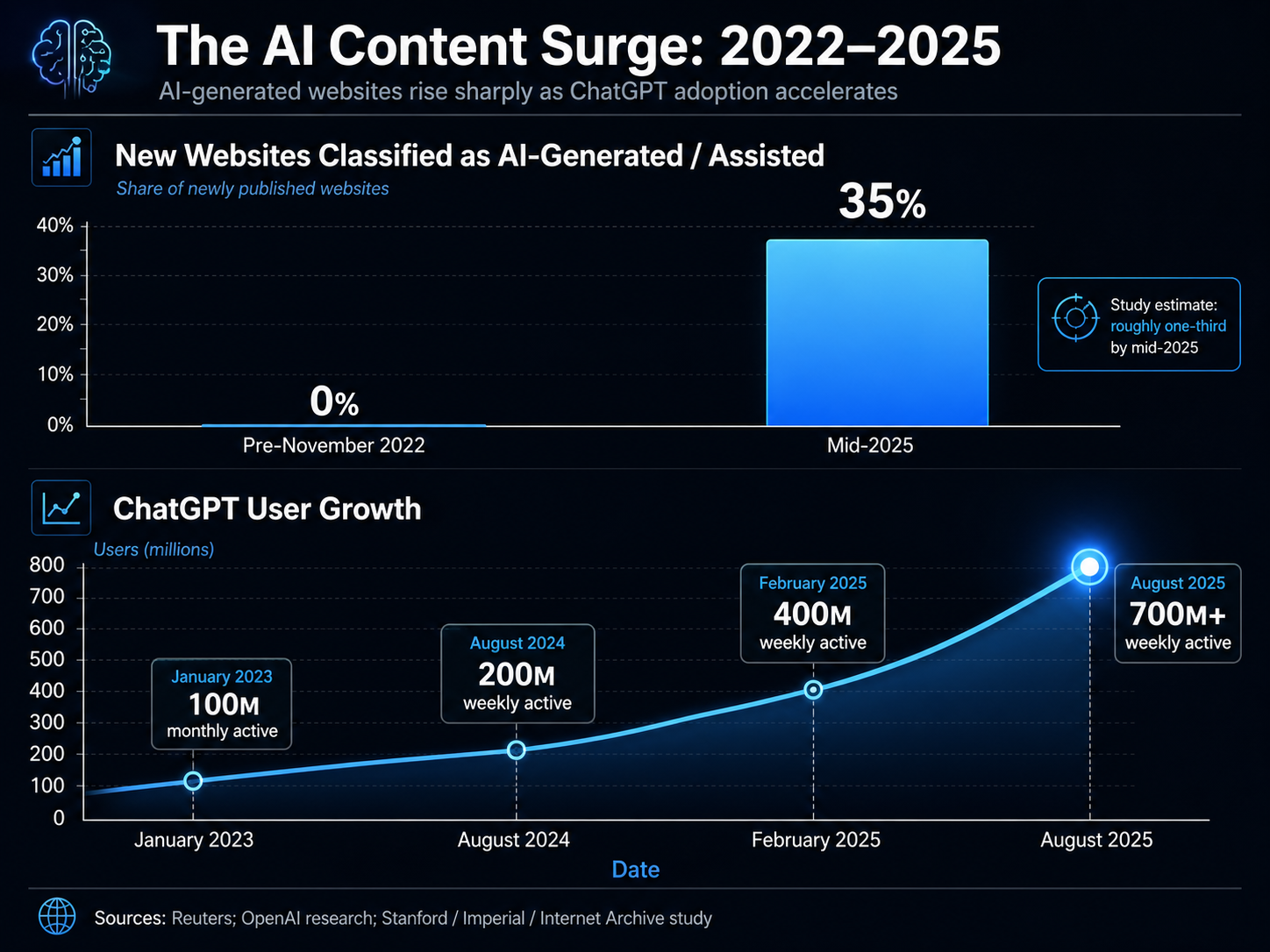

Researchers from Stanford University, Imperial College London, and the Internet Archive have identified a seismic shift in how the web is built. According to their joint research paper, titled "The Impact of AI-Generated Text on the Internet," approximately 35% of newly published websites by mid-2025 were classified as either AI-generated or AI-assisted. This figure represents a nearly vertical ascent from a baseline of virtually zero prior to the public launch of OpenAI’s ChatGPT on November 30, 2022.

The findings, published in April 2026, suggest that the character of the internet is changing at a pace that few experts anticipated. Jonáš Doležal, an AI researcher at Stanford and co-author of the paper, described the speed of the transition as unprecedented and staggering: after decades of humans shaping the internet, he said, a significant portion of it has come to be defined by AI in just three years. In his view, the digital landscape is being transformed in a fraction of the time it took to build.

Methodology and the Pursuit of Accuracy

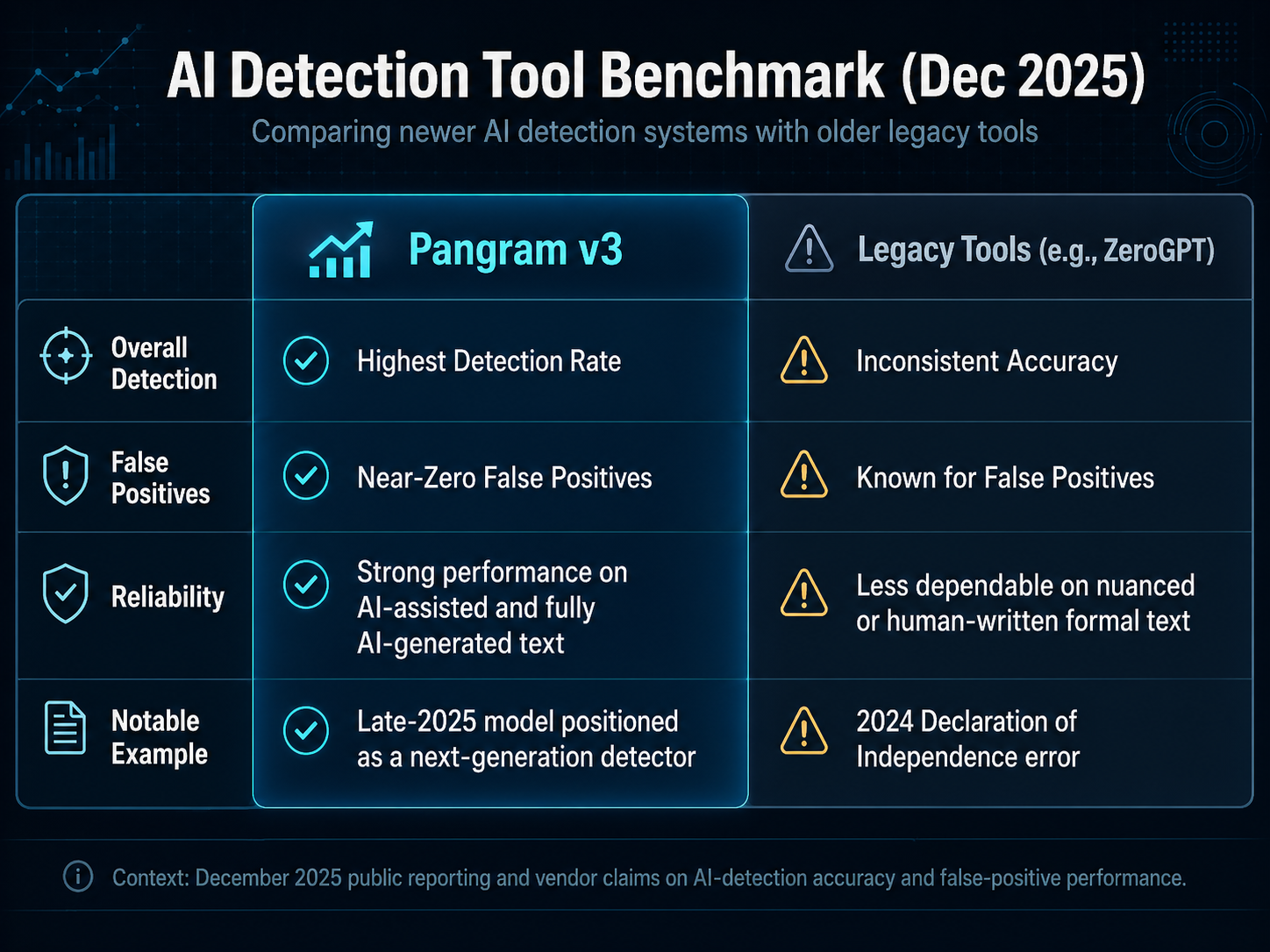

To reach these conclusions, the research team partnered with the Internet Archive to analyze website samples collected over a 33-month window, stretching from August 2022 to May 2025. The study relied on Pangram v3, an AI-detection tool that has consistently outperformed other detectors in the field. Its efficacy was further validated by a December 2025 study from the University of Chicago, which ranked it as the most reliable tool available and noted that its false positive rate was close to zero.

This high level of detection accuracy is critical at a time when AI-generated content is becoming increasingly sophisticated. While earlier tools occasionally made high-profile errors—such as ZeroGPT's infamous 2024 incident where it flagged the U.S. Declaration of Independence as AI-generated—the current generation of detectors like Pangram allows researchers to draw more robust conclusions about the origin of digital text.
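
The monthly tally behind a trend line of this kind can be pictured with a short sketch. The Python below is illustrative only: the sample records, the label names, and the function `monthly_ai_share` are assumptions made for this example, not the study's pipeline or Pangram's actual interface.

```python
from collections import defaultdict

# Labels counted as AI-involved. These names are hypothetical,
# not the real output format of any detector.
AI_LABELS = {"ai_generated", "ai_assisted"}


def monthly_ai_share(records):
    """Return {month: fraction of sampled sites labelled AI-generated or AI-assisted}."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for month, label in records:
        totals[month] += 1
        if label in AI_LABELS:
            flagged[month] += 1
    # Every month in totals has at least one record, so no division by zero.
    return {month: flagged[month] / totals[month] for month in sorted(totals)}


# Made-up sample of (first-seen month, detector label) pairs.
sample = [
    ("2022-08", "human"), ("2022-08", "human"),
    ("2025-05", "ai_generated"), ("2025-05", "human"),
    ("2025-05", "ai_assisted"), ("2025-05", "human"),
]

shares = monthly_ai_share(sample)
print(shares)  # {'2022-08': 0.0, '2025-05': 0.5}
```

In this toy data the share rises from 0% to 50%; the numbers are invented to show the calculation and say nothing about the study's actual results.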

Echoes of the 'Dead Internet Theory'

The research was partly inspired by the "Dead Internet Theory," an unverified but popular online concept suggesting that a large portion of the internet’s content is generated by bots rather than humans. While the theory was once relegated to the fringes of digital culture, the data suggests it may have been a harbinger of a new reality. The proliferation of machine-authored content raises significant concerns regarding the quality of information available to the public.

In the paper, the researchers note fears that the spread of AI-generated and AI-assisted text will degrade the semantic and stylistic diversity of online writing, erode its factual accuracy, and bring other negative developments. Their headline finding is that by mid-2025 roughly 35% of newly published websites were classified as AI-generated or AI-assisted, up from virtually zero before ChatGPT's launch in late 2022. The shift could produce a web that is "more cheery and less verbose," but potentially less reliable and more uniform in its expression.

Economic Drivers and Ethical Risks

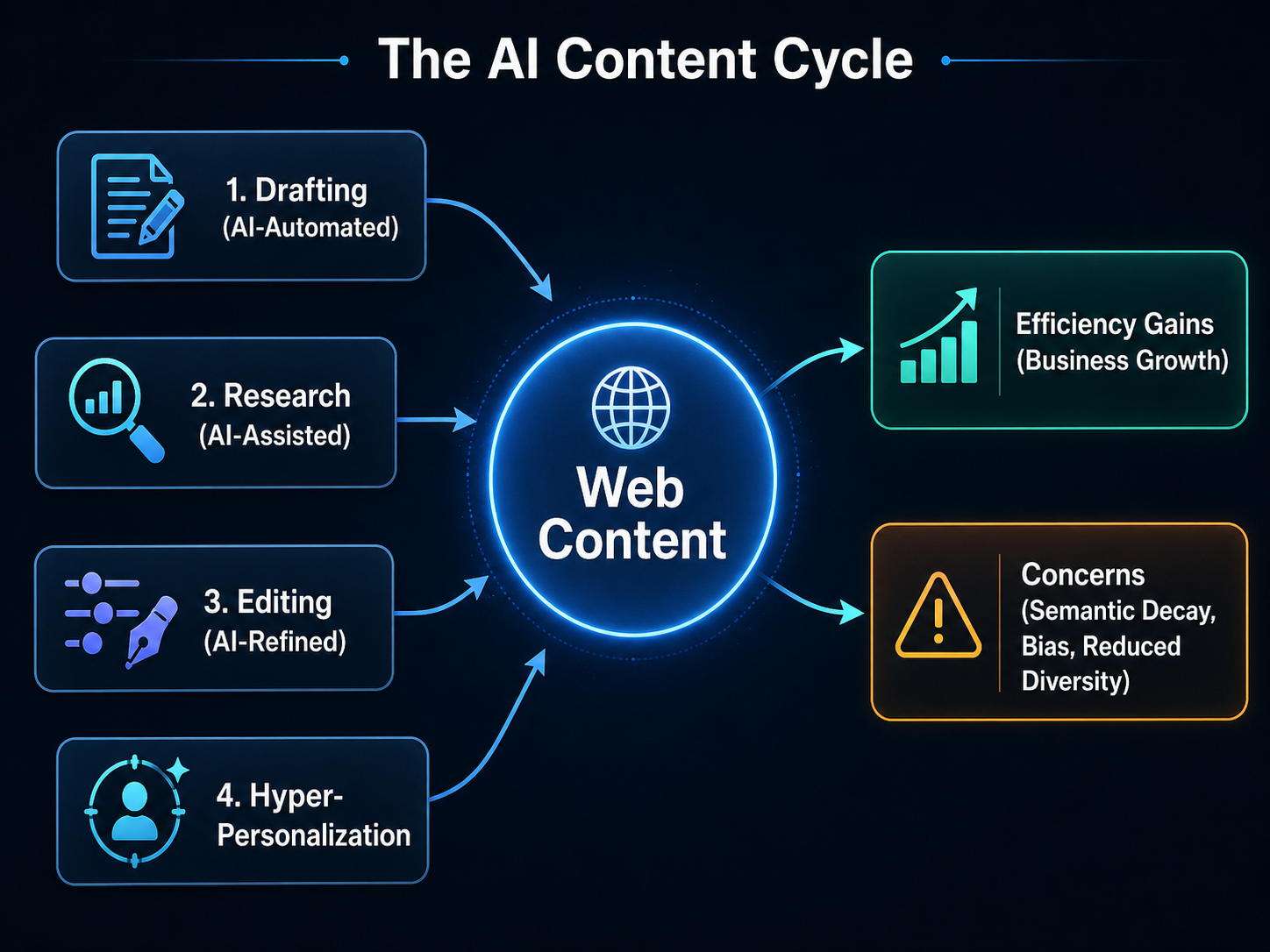

The move toward AI-generated content is not merely a technical curiosity; it is driven by powerful economic incentives. Generative AI allows for hyper-personalization, real-time content generation, and the rapid production of multimodal experiences including text, images, and video. Businesses are increasingly using these tools to automate drafting, research, and editing tasks, which can drastically reduce production costs and provide new creative prompts for human staff.

However, this efficiency comes with trade-offs. The overwhelming saturation of generic content poses a threat to human creators, who face potential job displacement. Furthermore, there are ongoing ethical concerns regarding AI systems reinforcing biases found in their training data. A January 2026 study by Stanford and Yale researchers added to these concerns by revealing that major AI systems could reproduce copyrighted material with high accuracy, sparking fresh legal debates over the fair use of training data.

The Future of the Human-AI Web

As the volume of AI content grows, distinguishing human from machine creations will only get harder for search engines and end users. This could fundamentally alter how trust is established online. AI-referred traffic is already growing rapidly and often yields higher conversion rates than traditional organic search. This suggests that AI platforms are becoming primary gatekeepers for information, pre-qualifying users before they even reach a destination website.

Looking forward, the research team aims to provide more than a static view of this evolution. Maty Bohacek, a student researcher at Stanford and co-author, says the team is working with the Internet Archive to turn the research into a continuously updated tool that provides an ongoing signal, rather than a single snapshot fixed at the time of publication. Bohacek added that the team also wants to add granularity by breaking the results down by website category and language, showing more precisely where the impact is landing.

With ChatGPT having approached 700 million weekly active users in August 2025, the digital landscape appears to have crossed a point of no return. The internet of 2026 is no longer just a human archive; it is a collaborative, and increasingly autonomous, mirror of the algorithms that now generate it.