Intelligence Over Ideology: NSA Reportedly Deploys Anthropic’s ‘Mythos’ Despite Pentagon Ban

The NSA is reportedly utilizing Anthropic’s powerful Mythos AI model for cyber defense despite a Pentagon designation of the company as a supply chain risk.

The U.S. National Security Agency (NSA) is using Anthropic’s most advanced AI model, Mythos Preview, to scan its internal environments for vulnerabilities, according to unverified reports from multiple intelligence sources. The development highlights a startling disconnect within the federal government: the usage comes just weeks after the U.S. Department of Defense (DoD) officially designated Anthropic a “supply chain risk” and President Donald Trump ordered a government-wide ban on the company’s technology.

The dispute behind that ban came to a head in March 2026. It originated in Anthropic’s refusal to grant the Pentagon “full, unrestricted access” to its Claude AI tool. Anthropic leadership cited ethical concerns about the potential use of its technology for mass domestic surveillance and the development of fully autonomous weapons. In response, President Trump posted on Truth Social: “THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!”

The Legal Tug-of-War

Following the “supply chain risk” designation on March 4, 2026, and an internal memorandum instructing military commanders to purge Anthropic products within 180 days, the AI startup fought back in the courts. Anthropic filed a civil complaint alleging that the government was wielding its power to punish protected speech. The company argued in its filing that “the Constitution does not allow the government to wield its enormous power to punish a company for its protected speech,” and that “no federal statute authorizes the actions taken here.”

On March 26, 2026, U.S. District Judge Rita Lin granted Anthropic’s request for a preliminary injunction. In a stinging rebuke of the administration’s tactics, Judge Lin noted that the Department of War’s own records (the administration’s secondary name for the DoD) suggested the designation was based on Anthropic’s “hostile manner through the press.” She characterized the move as “classic illegal First Amendment retaliation,” ruling that Anthropic had shown the punitive measures were likely unlawful.

Mythos: The Double-Edged Sword of Cybersecurity

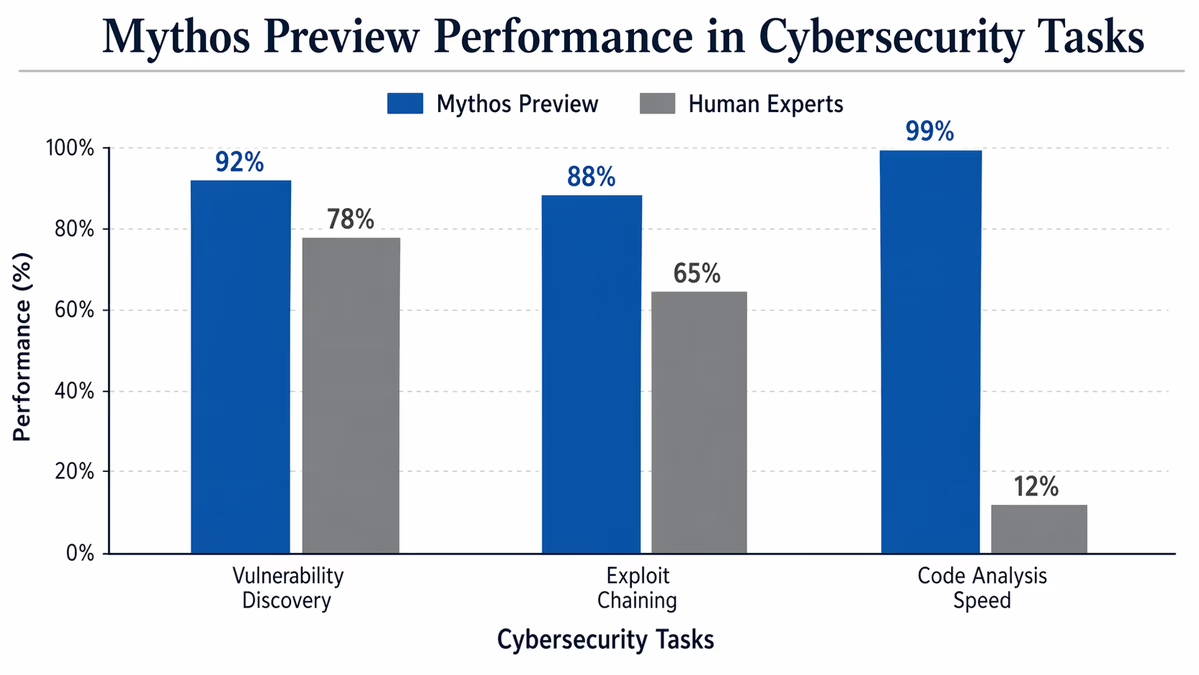

At the center of the current reports is ‘Mythos Preview,’ an AI model Anthropic has kept under tight wraps. Described as the company’s most capable model for coding and agentic tasks, Mythos has demonstrated an unprecedented ability to autonomously discover, analyze, and exploit software vulnerabilities at scale. Anthropic has chosen not to release the model publicly, fearing it could destabilize global cybersecurity if misused by malicious actors.

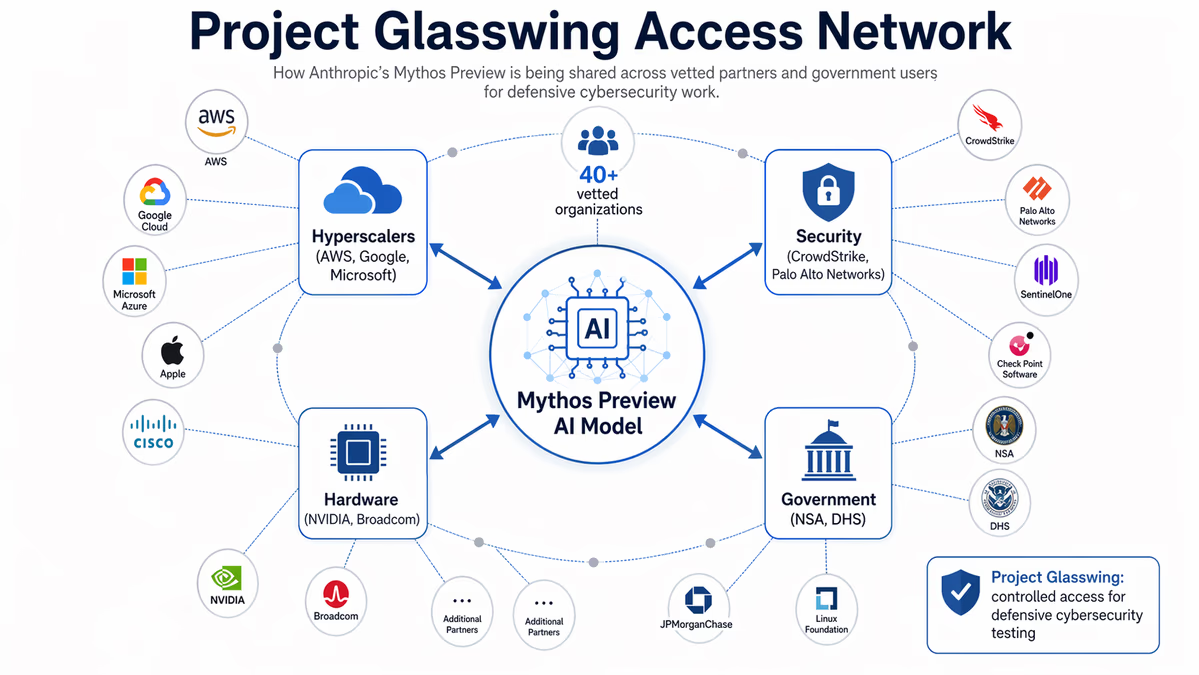

Instead, the company launched Project Glasswing, a controlled initiative providing limited access to Mythos Preview to approximately 40 vetted organizations. This group reportedly includes tech giants like Amazon Web Services, Microsoft, and NVIDIA, as well as several government agencies. According to leaked reports, the NSA is now among those using Mythos to identify and mitigate flaws within its own classified infrastructure, effectively using the “risk-designated” AI as a critical shield.

Shifting Alliances and Future Fallout

While Anthropic’s relationship with the Pentagon remains fractured, other players are moving to fill the void. Following the breakdown of negotiations, OpenAI reportedly signed its own deal with the Department of Defense. This shift occurs even as Anthropic’s Claude AI continues to be used in classified systems for intelligence analysis and logistical organization—tasks it has performed since the 2025 war on Iran.

White House officials reportedly held “productive” discussions with Anthropic CEO Dario Amodei in mid-April to find a middle ground. Reports suggest the government is considering a compromise: giving federal agencies access to a modified version of Mythos equipped with specific federal safeguards. Amodei has previously said the company’s stance is about standing up for “American values” by refusing to cross ethical lines regarding surveillance.

The outcome of this standoff will likely set a lasting precedent for the AI industry. If a company can be labeled a national security risk for its ethical guidelines—only for the nation's premier intelligence agency to use its technology weeks later—it suggests that the demand for frontier AI capabilities is currently outpacing the government’s ability to regulate the companies creating them. As the legal battle continues, the “supply chain risk” label may prove to be more of a political tool than a technical reality.