Google Unveils Gemma 4: A New Era of Local Intelligence and Digital Sovereignty

Google has launched Gemma 4, a breakthrough open-weight model series featuring multimodal capabilities and advanced reasoning. Optimized for local deployment and mobile devices, it brings frontier-level AI performance to everyday hardware under an Apache 2.0 license.

Google Shatters the Ceiling for Local AI with Gemma 4 Launch

MOUNTAIN VIEW, CA — In a move that signals a seismic shift in the landscape of decentralized artificial intelligence, Google today announced the release of Gemma 4. Launched on April 2, 2026, this latest iteration of Google’s open-model family is being hailed as the most intelligent open series ever produced, designed specifically to bridge the gap between massive cloud-based LLMs and the burgeoning world of on-device, local execution.

Built on the same foundational research and technology as Google’s flagship Gemini 3 models, Gemma 4 is not just an incremental update. It is a comprehensive overhaul aimed at providing developers with what Google calls "unprecedented intelligence-per-parameter." By releasing these models under a permissive Apache 2.0 license, Google is effectively handing the keys of frontier AI to the global developer community.

A Versatile Lineup for Every Device

The Gemma 4 series is meticulously tiered into four distinct sizes, ensuring compatibility with hardware ranging from budget smartphones to high-end developer workstations:

* Effective 2B (E2B) & Effective 4B (E4B): Optimized for edge devices and mobile platforms.

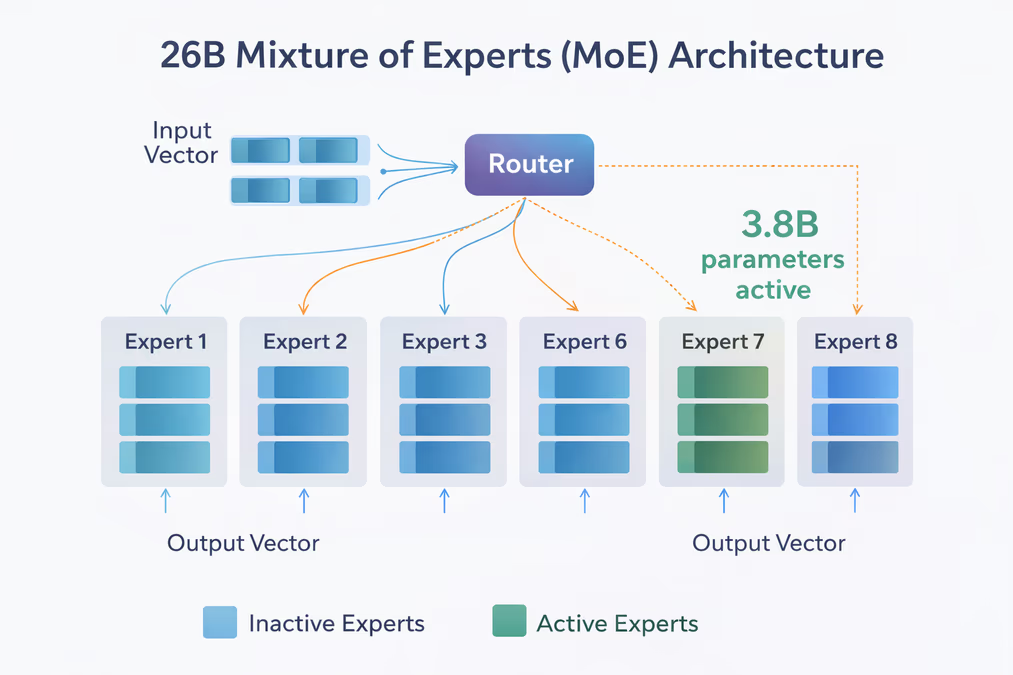

* 26B Mixture of Experts (MoE): A high-efficiency model that activates only 3.8 billion parameters during inference, offering lightning-fast token generation.

* 31B Dense: The flagship of the open series, currently ranked as the #3 open model globally on the industry-standard Arena AI leaderboard.

According to the Google Blog, "Gemma 4 is purpose-built for advanced reasoning and agentic workflows. We listened closely to what innovators need next to push the boundaries of AI, and Gemma 4 is our answer: breakthrough capabilities made widely accessible."

Native Multimodality and Massive Context

Perhaps the most striking feature of Gemma 4 is its native multimodality. Unlike previous generations that relied on external adapters, every model in the Gemma 4 series can natively process text, video, and images with support for variable resolutions. The smaller edge models (E2B and E4B) go a step further, including native audio input for real-time speech recognition and understanding.

To handle complex, long-form content, Google has significantly expanded the context windows. The edge models feature a 128K context window, while the 26B and 31B versions offer up to 256K. This enables the models to digest entire technical manuals or lengthy video files without losing the thread of the conversation.

Empowing the 'Local-First' Developer

For developers, Gemma 4 is a major play for "digital sovereignty." By supporting native function-calling, structured JSON output, and system instructions, the models allow for the creation of autonomous agents that run entirely offline. This is particularly transformative for coding; Gemma 4 supports high-quality offline code generation, effectively turning a standard laptop into a local-first AI coding assistant.

"This open-source license provides a foundation for complete developer flexibility and digital sovereignty," stated Google in their announcement. "It grants you complete control over your data, infrastructure, and models."

Privacy is a central pillar of this release. By processing sensitive data directly on-device—supported by hardware as modest as an Android device with 4GB of RAM—Gemma 4 eliminates the need to send information to remote servers, a critical requirement for enterprise and healthcare applications.

Performance Meets Efficiency

The efficiency of the 26B MoE model is a standout technical achievement. By only activating 3.8 billion parameters at any given time, it delivers high-quality responses at speeds that were previously impossible for models of this caliber. On the leaderboard, the 31B Dense model holds the #3 spot, while the 26B MoE holds the #6 spot, proving that open-weight models are now breathing down the necks of their closed-source counterparts.

Furthermore, Gemma 4 serves as the foundation for Gemini Nano 4. This next-generation mobile model is optimized for Android, running up to 4x faster and consuming 60% less battery than its predecessor. This ensures that the next wave of AI features on smartphones won't come at the cost of device longevity.

The Strategic Pivot

The release of Gemma 4 continues Google's strategic evolution from a closed-ecosystem provider to a champion of open-weight AI. This shift is widely viewed as a competitive response to Meta’s Llama series, fostering a more vibrant and transparent AI landscape. By leveraging the Google AI Edge Platform—including LiteRT and MediaPipe—Gemma 4 is optimized to run across a vast array of hardware, including NVIDIA’s Jetson edge devices and Blackwell GPUs.

As AI continues to move from the cloud to the pocket, Gemma 4 stands as a testament to the power of optimization. It proves that the future of AI isn't just about getting bigger—it's about getting smarter, more accessible, and more private.

Sources

* https://vertexaisearch.cloud.google.com/grounding-api-redirect/AUZIYQGL_e16BLB0dck-rD0YoHIoHJH2PwBqeAmg1r93gEIhIRaNT_o7K8goWihCqXi2F4RdUakn6W_ysH1irJG9QlD7_6IEeUufjL-Bh2H6Qb-oF1N-bMGTGxWDREneENMvLvHnKtyohDm4C9jsmluvgV2xYTZzziIRANqWomYpd1_YWW5Jcnayoxyzm9os9J6TfPUb2lHZKk1yX308FAxabrfRjzmOPTZwLKKGqg==

* https://vertexaisearch.cloud.google.com/grounding-api-redirect/AUZIYQHcx5dNWnhgJnl8x7wVcLRa4JkLyErcgfbGvIeyfOs5LrCppuXsNsYrGJO_4LrKWvqOyOKbvBKHj9sRwnMx_uhHo69H8Uyd_92o-CczewwSh_ACpuy3nWJYLHa77uY0tj5K9-7wihRkN9ZDRLpC5iCYfEt3U1VR8UJ4CGuHBINxPno8Tb2y

* https://vertexaisearch.cloud.google.com/grounding-api-redirect/AUZIYQFow9JX5-lWwTe_IW_otMqubLsgRkDFrMIfHUIBjI7AQ8Tk1ePT5KuHcdBQea3bg8z1C4bm0Gxv0u4ALQOfpRq8VJYDTaSFqb5kvV0VPIHcjzHCSI7gnwlTVDxpBA==