Google and UCSD Accelerate LLM Inference 3X on TPUs via New Block Diffusion Method

DFlash achieves a 3.13x average speedup for LLM inference on Google TPUs by replacing sequential drafting with parallel block-diffusion painting.

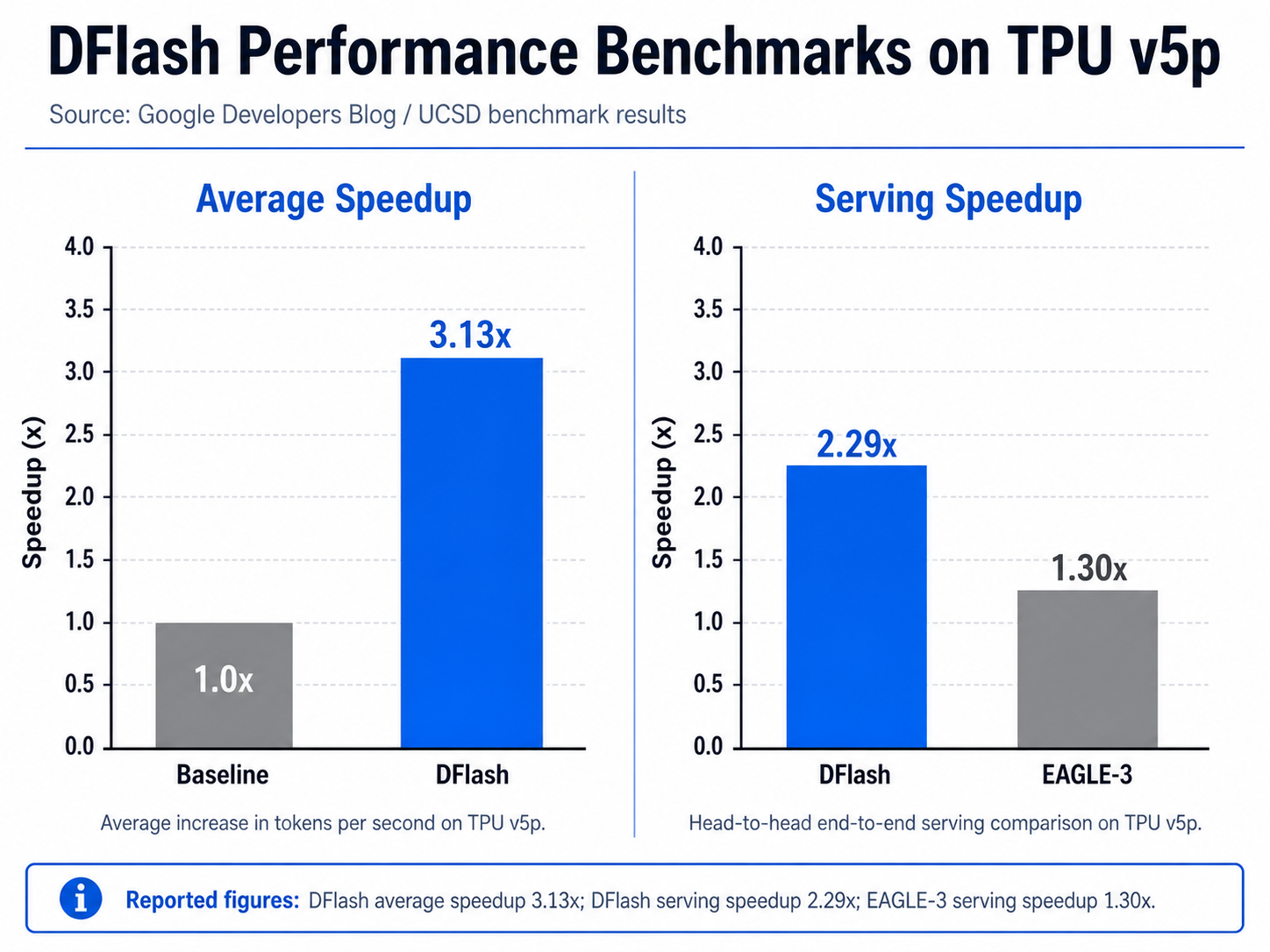

Google researchers and the University of California San Diego (UCSD) have demonstrated a significant leap in Large Language Model (LLM) performance, achieving an average 3.13x speedup in inference speeds on Google’s specialized Tensor Processing Units (TPUs). The research, centered on a novel decoding method called DFlash, addresses one of the most persistent bottlenecks in modern AI: the slow, sequential nature of text generation.

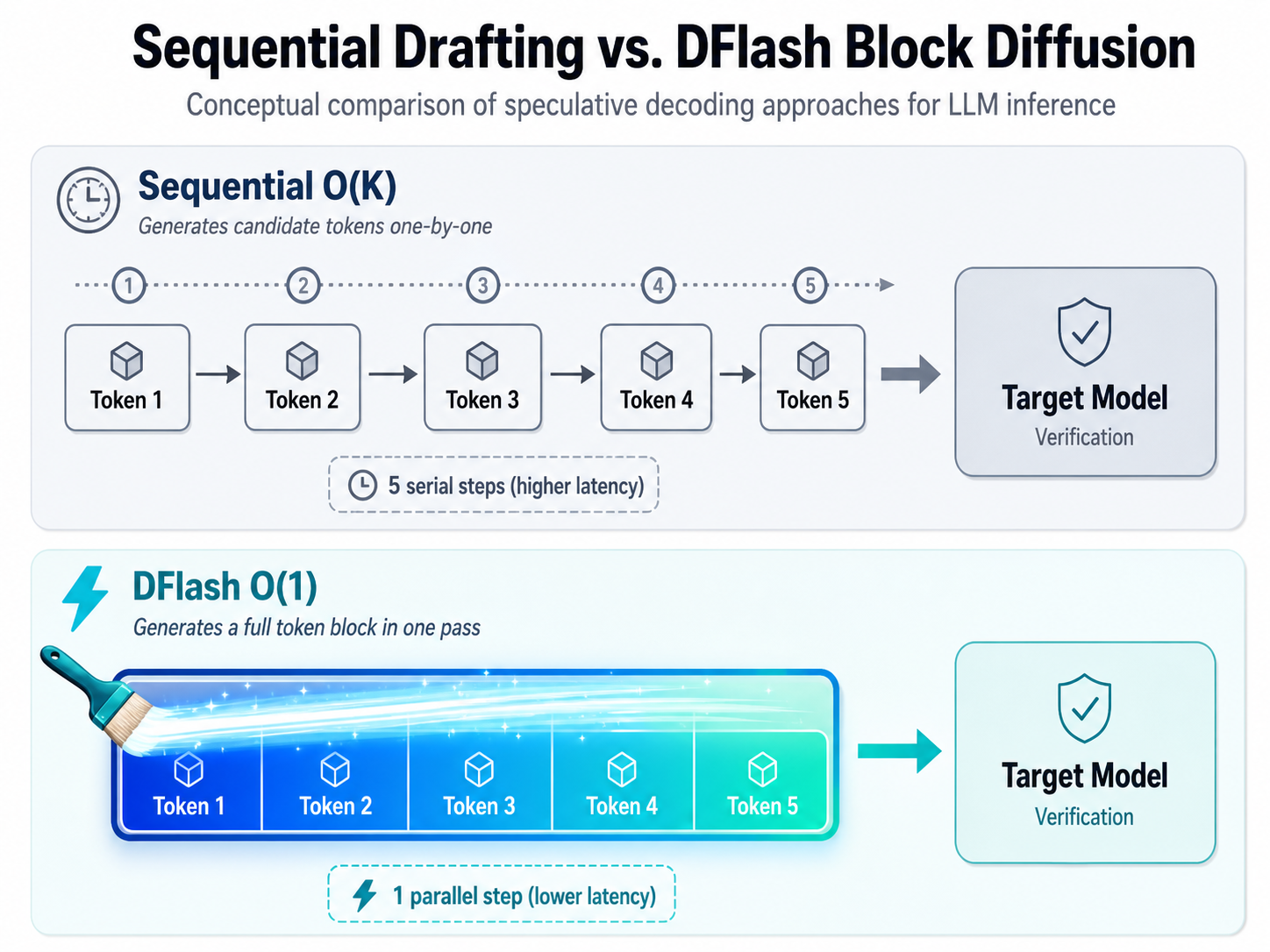

Traditionally, LLMs operate autoregressively, producing a single token at a time. Because each new word depends on every previous word in the sequence, the process is inherently linear. This creates a computational bottleneck where powerful hardware like Google's TPU v5p often sits underutilized while waiting for the next token to be calculated. While speculative decoding—using a smaller 'draft' model to predict multiple tokens for parallel verification—has mitigated this, most existing methods still rely on sequential drafting, limiting their maximum efficiency.

Shifting from Sequential to Parallel Drafting

DFlash departs from the standard speculative decoding blueprint by introducing a "block-painting" approach. Rather than drafting tokens one-by-one in an O(K) sequence, DFlash utilizes block diffusion to generate an entire block of candidate tokens in a single parallel forward pass, represented as an O(1) operation. By leveraging the massive parallel processing capabilities of Google's TPUs, DFlash can propose a long sequence of tokens nearly instantaneously.

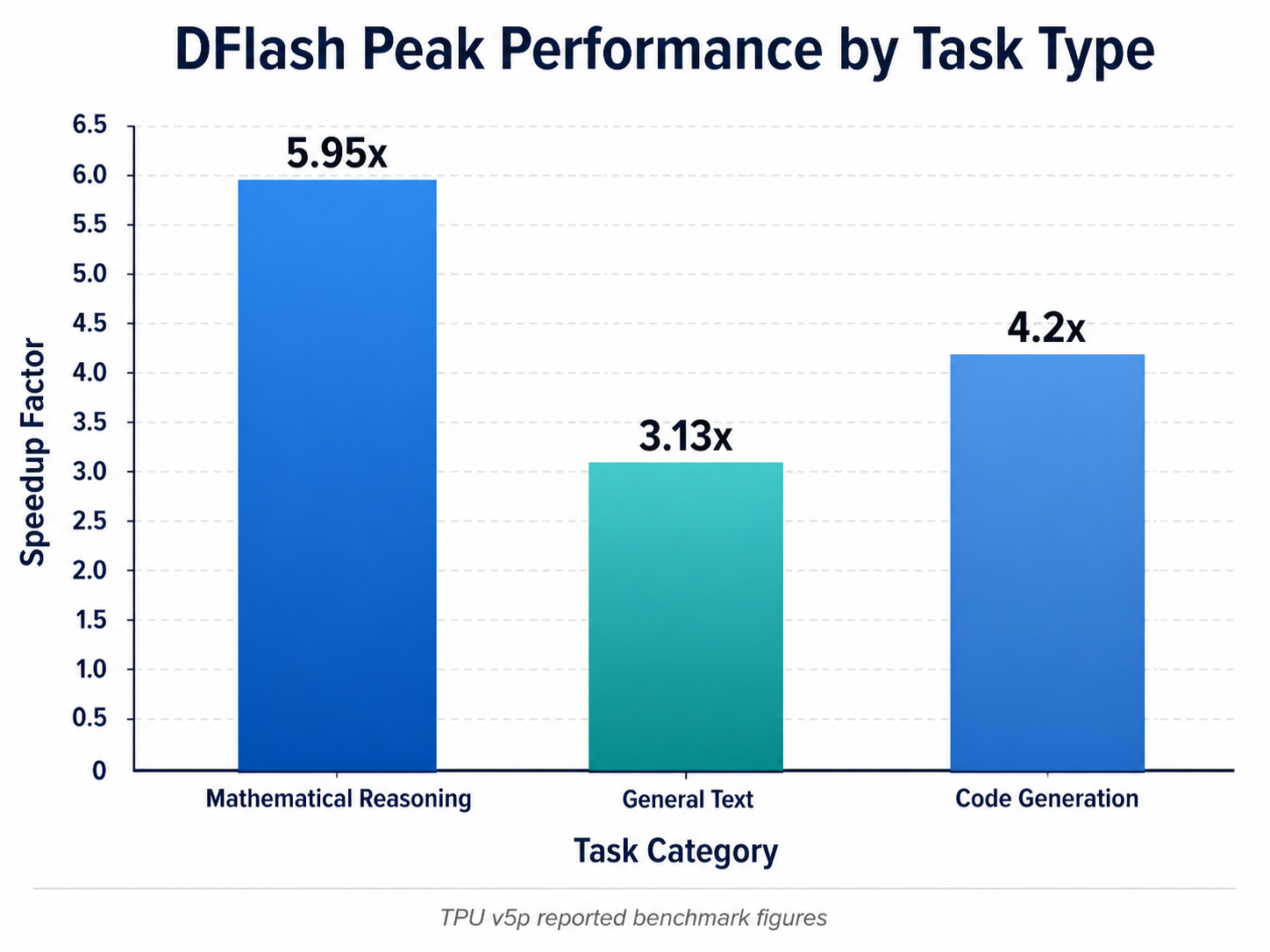

The results, published on February 5, 2026, in the paper "DFlash: Block Diffusion for Flash Speculative Decoding," show that this parallel drafting significantly increases the acceptance length of tokens during the verification phase. On Google’s TPU v5p hardware, DFlash achieved an average 3.13x speedup across various datasets. The efficiency gains were even more pronounced in specialized domains; for complex reasoning and mathematical tasks, the peak speedup reached nearly 6x.

Outperforming the State of the Art

The benchmarks suggest that DFlash represents a substantial improvement over existing speculative decoding techniques. In direct comparisons with EAGLE-3, a popular autoregressive speculative decoding method, DFlash demonstrated a 2.29x end-to-end serving speedup on TPU v5p, compared to just a 1.30x gain for EAGLE-3. In other specific benchmarks, the speedup provided by DFlash was up to 2.5 times higher than its predecessors.

Crucially, this acceleration is lossless. Because the larger "target" LLM still verifies every token generated by the DFlash draft model, the final output maintains 100% of the original model's accuracy and quality. This makes the method particularly attractive for enterprise applications where output integrity is non-negotiable.

Integration and Industry Impact

To ensure wide adoption, the research team has already integrated DFlash into the open-source vLLM TPU inference ecosystem. This integration allows developers and organizations using the vLLM framework to leverage these speedups without building custom infrastructure from scratch. Google has expressed pride in supporting external researchers from UCSD, highlighting the achievement as a major open-source milestone that pushes the boundaries of what is possible with AI hardware.

This development comes alongside other industry efforts to optimize inference, such as Google's JetStream engine and the ConfAdapt approach, which bakes multi-token prediction directly into model weights. However, DFlash’s unique use of diffusion-style drafting sets it apart by maximizing the inherent tensor-operation strengths of TPUs.

Forward-Looking Implications

The success of DFlash suggests a shifting paradigm in how AI models will be served in the future. By reducing the time required for LLMs to generate responses, DFlash directly improves the user experience for low-latency applications like real-time coding assistants and interactive reasoning engines.

Furthermore, the ability to squeeze 3x to 6x more performance out of existing hardware like the TPU v5p could significantly lower the cost-per-inference for cloud providers. As the industry moves toward more complex, multi-modal, and long-context models, the synergy between parallel diffusion drafting and autoregressive verification likely points toward a new standard for high-performance AI deployment. This breakthrough effectively democratizes high-speed inference, making powerful models more accessible and cost-effective for the broader developer community.