Google Signals Hardware Diversification with Reported Marvell AI Chip Partnership

Google is reportedly in talks with Marvell Technology to develop two new AI chips, a strategic move to diversify its custom silicon supply chain.

Google Explores New Frontiers in Custom Silicon

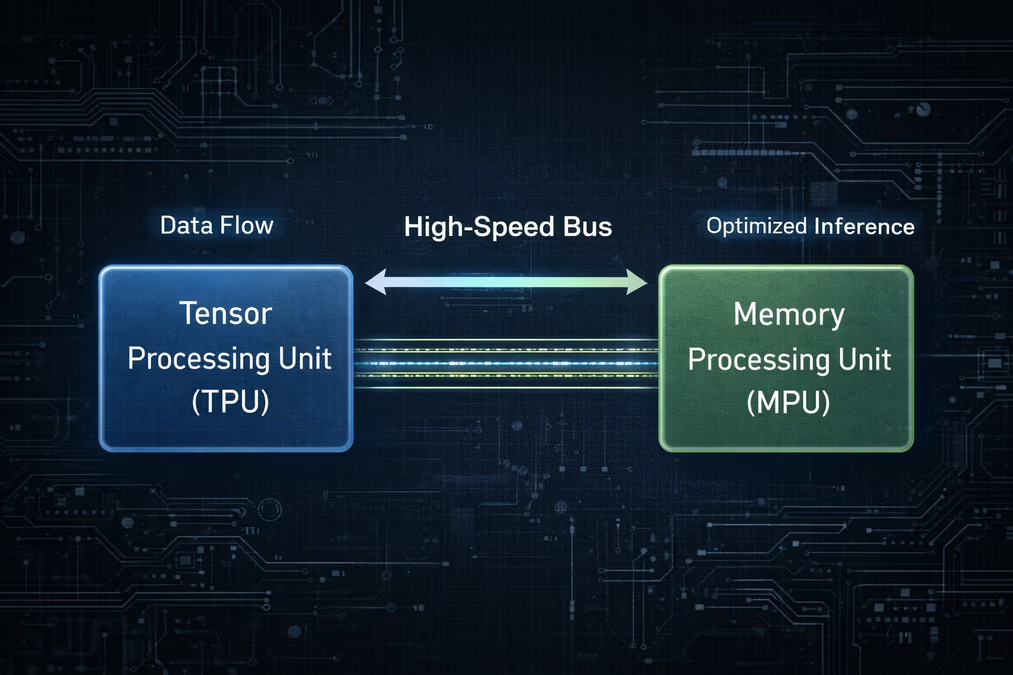

Google is reportedly in active discussions with Marvell Technology to develop two specialized AI chips, a move that could signal a significant expansion of its custom silicon strategy and a shift in its long-standing hardware partnerships. According to unconfirmed reports first published by The Information, the discussions focus on a memory processing unit (MPU) and a new Tensor Processing Unit (TPU) optimized for AI inference workloads.

As of April 2026, these negotiations have not produced a formal contract. Even so, the potential collaboration highlights Google’s intent to diversify its hardware supply chain. Although Google recently renewed its long-term TPU and networking agreement with Broadcom through 2031, industry analysts interpret the Marvell talks as a strategic effort to reduce reliance on any single design partner. Under the proposed arrangement, Marvell would likely serve in a design-services capacity, a role similar to MediaTek’s involvement in Google’s cost-optimized 'Ironwood' TPU project.

The Shift Toward Inference Optimization

The reported focus on inference-optimized chips reflects a broader industry transition. As AI services scale globally, the computational cost of inference, the process of running a trained model to generate responses, is rapidly outpacing the cost of initial model training. For hyperscalers like Google, improving the performance-per-watt and cost-per-query of inference is becoming essential to maintaining profitability in the generative AI era.

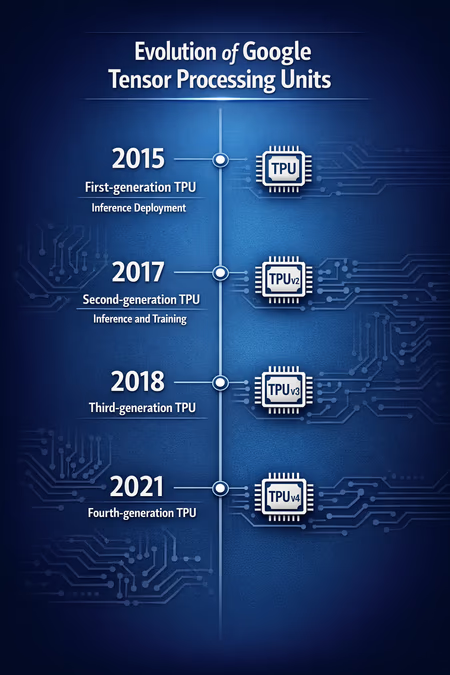

Google’s history with custom silicon dates back to the early 2010s, when the company realized that traditional CPUs and GPUs could not meet the projected computational demands of neural networks. By 2015, Google had internally deployed its first-generation TPU to accelerate AI inference. Since then, the TPU ecosystem has grown significantly, with Google Cloud offering access to these chips to major AI teams including Anthropic, Midjourney, and Salesforce.

Marvell’s Rising Profile in the AI Ecosystem

Marvell Technology has spent the last several years positioning itself as a major player in data infrastructure. The company holds a portfolio of intellectual property at advanced 3nm and 2nm process nodes and has delivered more than 2,000 custom application-specific integrated circuits (ASICs) over the past quarter-century.

The company’s momentum in the AI space was further bolstered by a multibillion-dollar partnership with Nvidia in May 2025, focused on optical networking and the integration of NVLink Fusion technology. Marvell’s acquisition of Celestial AI in December 2025 for up to $5.5 billion also significantly enhanced its photonic interconnect technology, a vital component for scaling AI clusters.

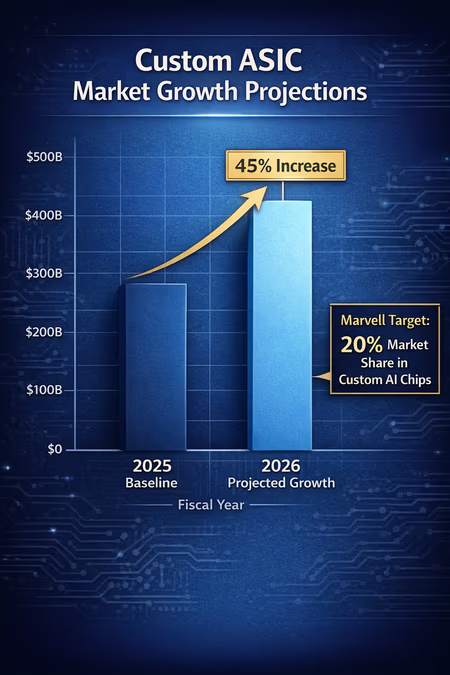

Industry projections suggest the custom ASIC market is poised for a 45% increase in 2026. Marvell has set ambitious targets to capture this growth, aiming for a 20% market share in custom AI chips and anticipating a 30% year-over-year revenue increase in fiscal year 2027. Securing a design contract with a titan like Google would be a major validation of Marvell’s technical capabilities and of its status as a viable alternative to established players like Broadcom.

Strategic Implications for the AI Market

If the partnership proceeds, the impact on Google’s infrastructure could be profound. By developing an MPU to work alongside its existing TPUs, Google could address the memory bottlenecks that often limit AI performance. Unconfirmed reports also suggest that the design for this memory-focused chip could be finalized as early as 2027, with test production to follow shortly thereafter.

Google is not alone in seeking to reduce its hardware dependence. Other hyperscalers, including Meta and Microsoft, are developing their own custom AI silicon to lessen their reliance on third-party hardware providers; Microsoft’s Maia 200 chip is a prime example of this trend. Google also continues to develop initiatives like 'TorchTPU' to improve compatibility with PyTorch, aiming to lower the barriers to adopting its silicon and to reduce the industry’s reliance on Nvidia’s CUDA ecosystem.

Looking forward, the reported collaboration between Google and Marvell underscores a future where the AI hardware landscape is increasingly fragmented and specialized. For Google, it is a play for greater control over its operational destiny. For the broader industry, it signifies that the race for AI supremacy is being fought as much in the semiconductor fabrication lab as it is in the software research department.