Google DeepMind Releases Gemini Robotics-ER 1.6 to Enhance Physical Reasoning in Robots

Google DeepMind's new Gemini Robotics-ER 1.6 model brings 'agentic vision' and enhanced spatial reasoning to robots, with instrument-reading capabilities developed in collaboration with Boston Dynamics.

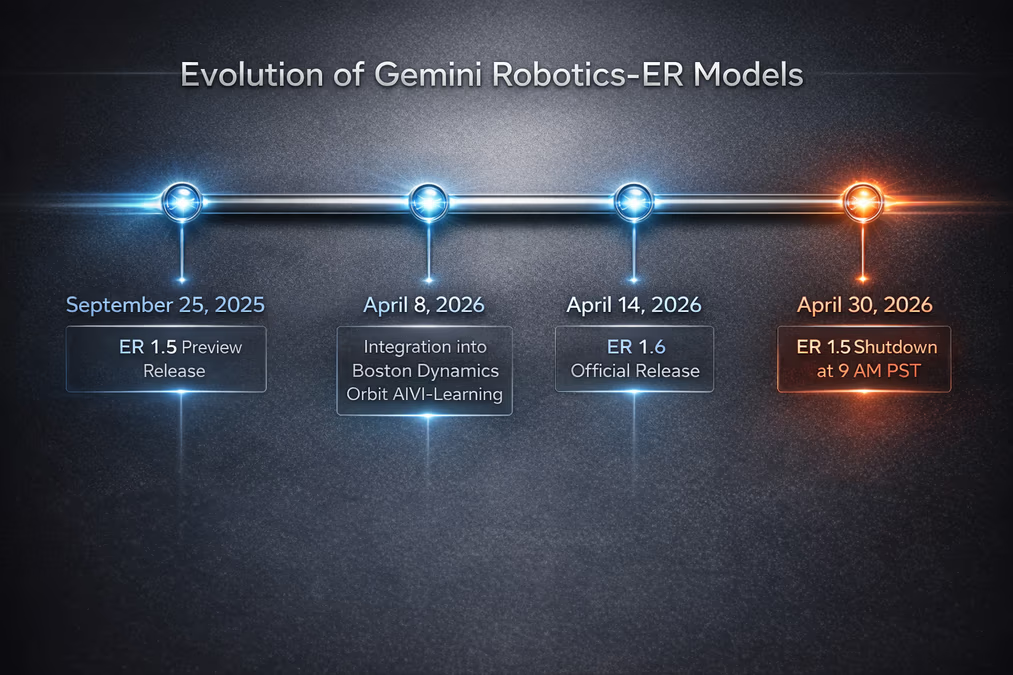

Google DeepMind has officially released the `gemini-robotics-er-1.6-preview` model, a significant upgrade to its reasoning-first robotics framework designed to bridge the gap between digital intelligence and physical action. Available as of April 14, 2026, through the Gemini API and Google AI Studio, the update introduces advanced capabilities in instrument reading and spatial logic, providing a more sophisticated cognitive layer for the next generation of physical agents.

Building upon the foundations of the ER 1.5 and Gemini 3.0 Flash models, the 1.6-preview iteration acts as a high-level reasoning engine. Rather than controlling robotic limbs directly, it interprets complex visual data and natural language commands to plan actions. This update is specifically engineered to allow robots to interact with unstructured environments—such as factories, warehouses, and domestic spaces—without the need for digital retrofitting of legacy equipment.

Agentic Vision and Instrument Reading

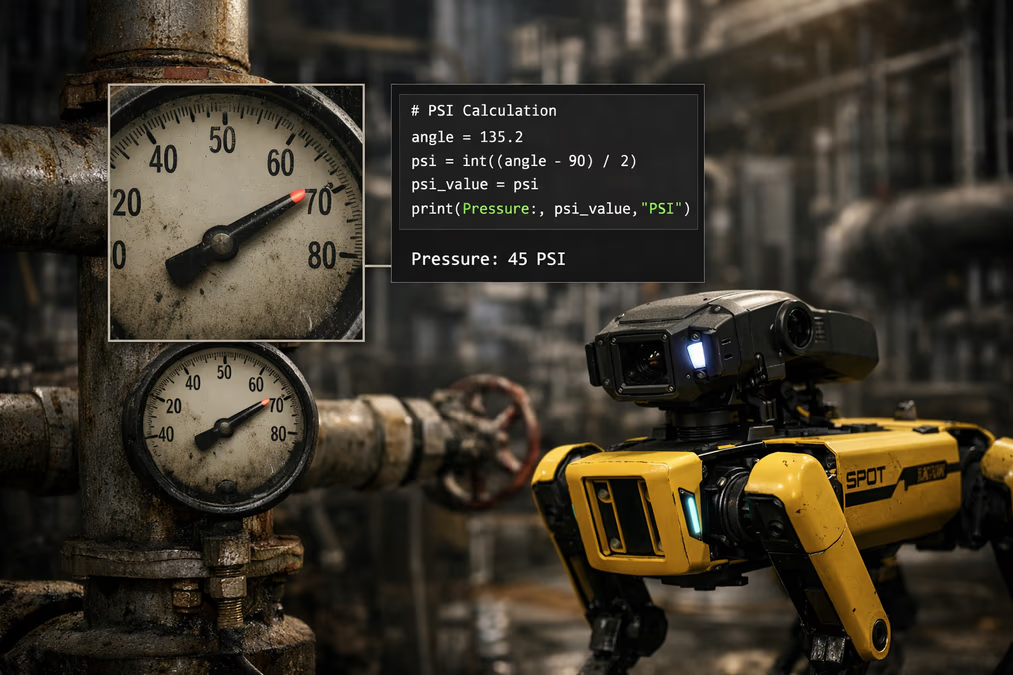

A standout feature of the Gemini Robotics-ER 1.6 update is its specialized capability for instrument reading, achieved through what Google calls "agentic vision." This process combines visual reasoning with active code execution. When a robot encounters an analog gauge or a manual readout, the model can zoom into specific image areas, identify relevant pointers or needles, and execute code-based calculations to estimate proportions and intervals.
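The announcement does not show the code the model generates. As a hypothetical illustration of the kind of "proportions and intervals" calculation described, the sketch below turns a needle position into a reading by linear interpolation between the lowest and highest scale marks. It assumes the pivot and needle tip have already been located in pixel coordinates (for example, after zooming into the gauge); the function names, the clockwise angle convention, and the example gauge are our own assumptions.

```python
import math


def needle_angle_deg(pivot, tip):
    """Needle angle in degrees, measured clockwise from 12 o'clock.

    Uses image pixel coordinates, where x grows to the right and y grows downward.
    """
    dx = tip[0] - pivot[0]
    dy = pivot[1] - tip[1]  # flip y so that "up" is positive
    # atan2(dx, dy): 0 deg points up, 90 deg points right (clockwise convention)
    return math.degrees(math.atan2(dx, dy)) % 360.0


def gauge_reading(angle, min_angle, max_angle, min_value, max_value):
    """Linearly interpolate a reading from the needle angle.

    min_angle/max_angle are the angles of the lowest and highest scale marks,
    with the scale assumed to run clockwise from min to max.
    """
    sweep = (max_angle - min_angle) % 360.0   # clockwise arc covered by the scale
    offset = (angle - min_angle) % 360.0      # clockwise distance from the min mark
    if offset > sweep:
        raise ValueError("needle lies outside the calibrated arc")
    return min_value + (offset / sweep) * (max_value - min_value)


# Example: a 0-10 bar gauge whose scale runs from 225 deg (lower left)
# clockwise to 135 deg (lower right), a 270-degree sweep.
angle = needle_angle_deg(pivot=(100, 100), tip=(100, 50))  # needle straight up
print(gauge_reading(angle, 225, 135, 0.0, 10.0))  # 5.0
```

Linear interpolation only holds for gauges with evenly spaced scales; a nonlinear dial would need a lookup table of calibrated marks instead.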

This specific functionality was developed in close collaboration with Boston Dynamics to address facility inspection use cases. By enabling robots to "read" the world like a human inspector would, the model allows for the monitoring of legacy infrastructure that lacks modern IoT connectivity. This capability was integrated into Boston Dynamics’ Orbit AIVI-Learning products, which went live for customers on April 8, 2026, just days before the model's general developer release.

Sophisticated Spatial and Physical Reasoning

The 1.6-preview model demonstrates marked improvements in precise object detection, categorization, and relational logic. It can now better understand the physical relationship between objects—such as whether a container is stable enough to be stacked or how a specific tool should be oriented for use.
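Detection and pointing results from the earlier Gemini Robotics-ER 1.5 model are documented as JSON, with each point given in `[y, x]` order and normalized to a 0 to 1000 range. Assuming the 1.6-preview model keeps that convention, the sketch below converts such a reply into pixel coordinates that a downstream planner could use. The `parse_points` helper and the sample reply are hypothetical.

```python
import json

FENCE = "`" * 3  # models often wrap JSON in a markdown code fence


def parse_points(response_text, image_width, image_height):
    """Convert [y, x] points normalized to 0-1000 into (label, x_px, y_px) tuples."""
    text = response_text.strip()
    if text.startswith(FENCE):
        lines = text.splitlines()[1:]          # drop the opening fence line
        if lines and lines[-1].strip().startswith(FENCE):
            lines = lines[:-1]                 # drop the closing fence line
        text = "\n".join(lines)
    results = []
    for item in json.loads(text):
        y_norm, x_norm = item["point"]         # note the [y, x] order
        x_px = round(x_norm / 1000 * image_width)
        y_px = round(y_norm / 1000 * image_height)
        results.append((item["label"], x_px, y_px))
    return results


# Hypothetical model output for a 640x480 camera frame.
reply = '[{"point": [500, 250], "label": "mug"}, {"point": [100, 900], "label": "valve"}]'
print(parse_points(reply, 640, 480))  # [('mug', 160, 240), ('valve', 576, 48)]
```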

The update also improves motion detection and trajectory mapping. A key addition to the model's reasoning is "success detection," which lets a robot evaluate its own performance. If a robot fails to grasp an object or complete a turn, the model can decide whether to retry with a modified strategy or proceed to the next task, reducing the need for constant human supervision.
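The retry-or-proceed behavior can be pictured as a simple control loop. In this minimal sketch, the decision rule is simplified to "try the next strategy in a fixed list until success is detected"; the `execute` and `detect_success` callbacks are hypothetical stand-ins for sending a plan to the robot and for asking the model to judge the outcome (for instance, from a post-action camera image). None of these names come from Google's API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Attempt:
    strategy: str
    succeeded: bool


def run_with_success_detection(
    task: str,
    strategies: List[str],
    execute: Callable[[str, str], None],
    detect_success: Callable[[str], bool],
) -> List[Attempt]:
    """Retry a task with alternative strategies until success is detected.

    Stops at the first success or when the strategies run out; either way the
    caller can then proceed to the next task.
    """
    log: List[Attempt] = []
    for strategy in strategies:
        execute(task, strategy)          # hypothetical: send the plan to the robot
        ok = detect_success(task)        # hypothetical: e.g. ask the model to judge a post-action image
        log.append(Attempt(strategy, ok))
        if ok:
            break
    return log


# Simulated run: the first grasp slips, the second one works.
outcomes = iter([False, True])
log = run_with_success_detection(
    "pick up the mug",
    ["top grasp", "side grasp", "two-finger pinch"],
    execute=lambda task, strategy: None,
    detect_success=lambda task: next(outcomes),
)
print([(a.strategy, a.succeeded) for a in log])
# [('top grasp', False), ('side grasp', True)]
```

Returning the attempt log rather than a bare boolean lets a supervisor review why a task was abandoned, which matters when the loop runs without a human watching.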

“By enhancing spatial reasoning and multi-view understanding, we are bringing a new level of autonomy to the next generation of physical agents,” Google stated during the announcement.

A New Benchmark for Robotics Safety

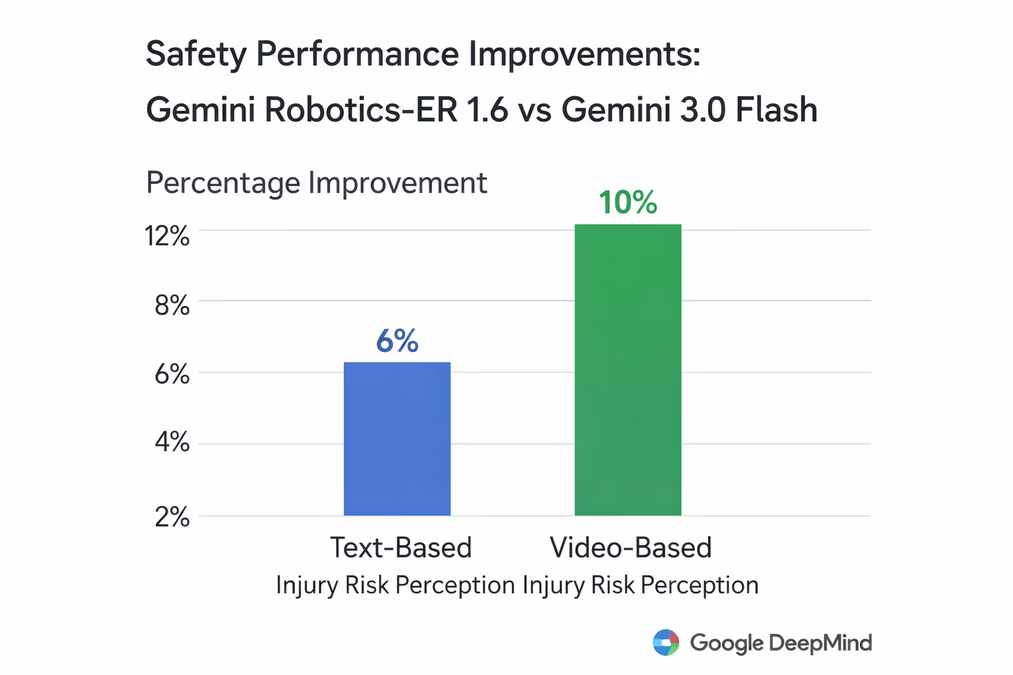

Safety remains a primary hurdle for generative AI in physical environments. Google describes Gemini Robotics-ER 1.6 as its "safest robotics model to date," citing superior compliance with safety policies during adversarial spatial reasoning tasks.

Google reports a measurable gain in risk perception. Compared with Gemini 3.0 Flash, ER 1.6 shows a 6% improvement in perceiving injury risks in text-based scenarios and a 10% improvement in video scenarios. These refinements are intended to help robots adhere to physical safety constraints by identifying potential hazards to humans or delicate equipment before taking action.

Developer Flexibility and Infrastructure

To accommodate varying industrial needs, the model incorporates a flexible "thinking budget." This allows developers to tune the model to prioritize either low-latency response times for rapid movements or high-accuracy reasoning for complex, delicate tasks. The model also maintains the ability to natively call external tools, including Google Search and various vision-language-action (VLA) models, to ground its decisions in verifiable, real-world data.
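For developers, the thinking budget is set per request. The sketch below builds a request body for the Gemini API's `generateContent` REST method, choosing a budget by task type via `generationConfig.thinkingConfig.thinkingBudget` and optionally declaring Google Search as a tool. The budget values, the `build_request` helper, and the assumption that this preview model accepts a budget of 0 are illustrative only; the model's documentation is the authority on supported ranges.

```python
MODEL_ID = "gemini-robotics-er-1.6-preview"
ENDPOINT = (
    "https://generativelanguage.googleapis.com/v1beta/models/"
    f"{MODEL_ID}:generateContent"
)

# Illustrative values only; check the model documentation for supported ranges.
THINKING_BUDGETS = {
    "fast": 0,         # favor low latency, e.g. for rapid movements
    "careful": 2048,   # allow more reasoning for complex, delicate tasks
}


def build_request(prompt: str, mode: str, use_search: bool = False) -> dict:
    """Build a generateContent request body with a per-task thinking budget."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {
            "thinkingConfig": {"thinkingBudget": THINKING_BUDGETS[mode]},
        },
    }
    if use_search:
        # Grounding with Google Search is enabled by declaring it as a tool.
        body["tools"] = [{"google_search": {}}]
    return body


print(build_request("Point to the pressure gauge.", mode="fast"))
```

To send it, the dictionary would be serialized with `json.dumps` and POSTed to `ENDPOINT` with an API key in the `x-goog-api-key` header. The official `google-genai` Python SDK exposes the same setting as `types.ThinkingConfig(thinking_budget=...)`.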

The release also affects developers still using the previous version: Google has announced that the `gemini-robotics-er-1.5-preview` model is scheduled for shutdown on April 30, 2026, at 9 AM PST.

The Path Toward General-Purpose Autonomy

The release of Gemini Robotics-ER 1.6 is poised to serve as critical infrastructure for the broader robotics ecosystem. By providing a powerful foundation model that understands physical context, Google is lowering the barrier for startups and researchers to develop adaptable, general-purpose robots.

As these physical agents move from the lab to the warehouse floor and eventually into the home, the ability to reason about the physical world—understanding not just what an object is, but how it functions and what risks it poses—will be the defining factor in their utility. With the integration of the ER 1.6 model into platforms like Boston Dynamics’ Orbit, the transition from digital intelligence to autonomous physical labor is accelerating.