Efficiency Over Scale: OpenAI, Google, and Alibaba Pivot to Leaner, Faster AI

OpenAI, Google, and Alibaba launch smaller, faster AI models, signaling a strategic industry shift toward cost-efficiency and on-device processing.

On March 3, 2026, the artificial intelligence industry witnessed a coordinated pivot toward efficiency as three of the world’s largest tech giants—OpenAI, Google, and Alibaba—simultaneously launched new models designed for speed, affordability, and on-device utility. This release wave marks a significant departure from the multi-year race for raw parameter count, signaling that the era of "scaling at all costs" has been replaced by a focus on practical, localized performance.

OpenAI Refines the User Experience with GPT-5.3 Instant

OpenAI updated its most widely used model with the release of GPT-5.3 Instant. Aimed at providing a smoother, more natural conversational experience, the update focuses on the nuance of daily interactions. According to OpenAI Help Center documentation, GPT‑5.3 Instant delivers more accurate answers and richer results when searching the web, while reducing the "unnecessary dead ends, caveats, and overly declarative phrasing" that often interrupt the flow of a chat.

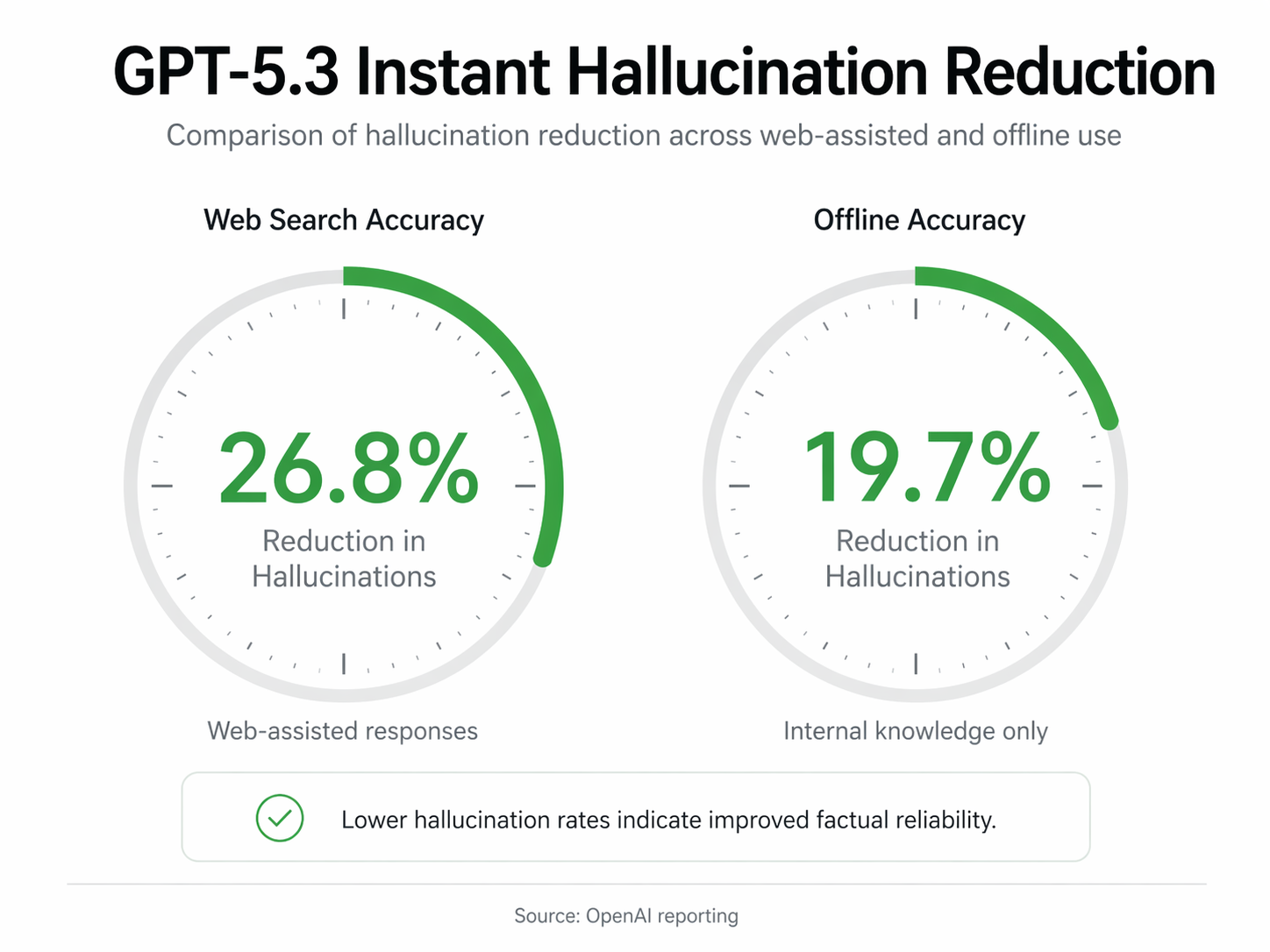

Technical improvements are centered on reliability. The new model boasts a 26.8% reduction in hallucinations when used with web search and a 19.7% reduction without. A product post from OpenAI noted that the update specifically targets subtle issues that do not always show up in standard benchmarks but are critical in determining whether ChatGPT feels "helpful or frustrating."

Available via the API as `gpt-5.3-chat-latest`, the model has already become the default interface for logged-in ChatGPT users, with a further tone update rolled out on March 16 to improve follow-up interactions.

Google Targets Enterprise Speed with Gemini 3.1 Flash-Lite

Google simultaneously expanded its Gemini lineup with the launch of Gemini 3.1 Flash-Lite. Positioned as a high-volume workhorse for developers and enterprises, the model is available via the Gemini API in Google AI Studio and Vertex AI. Google has described the Flash-Lite variant as being built specifically for "speed and efficiency."

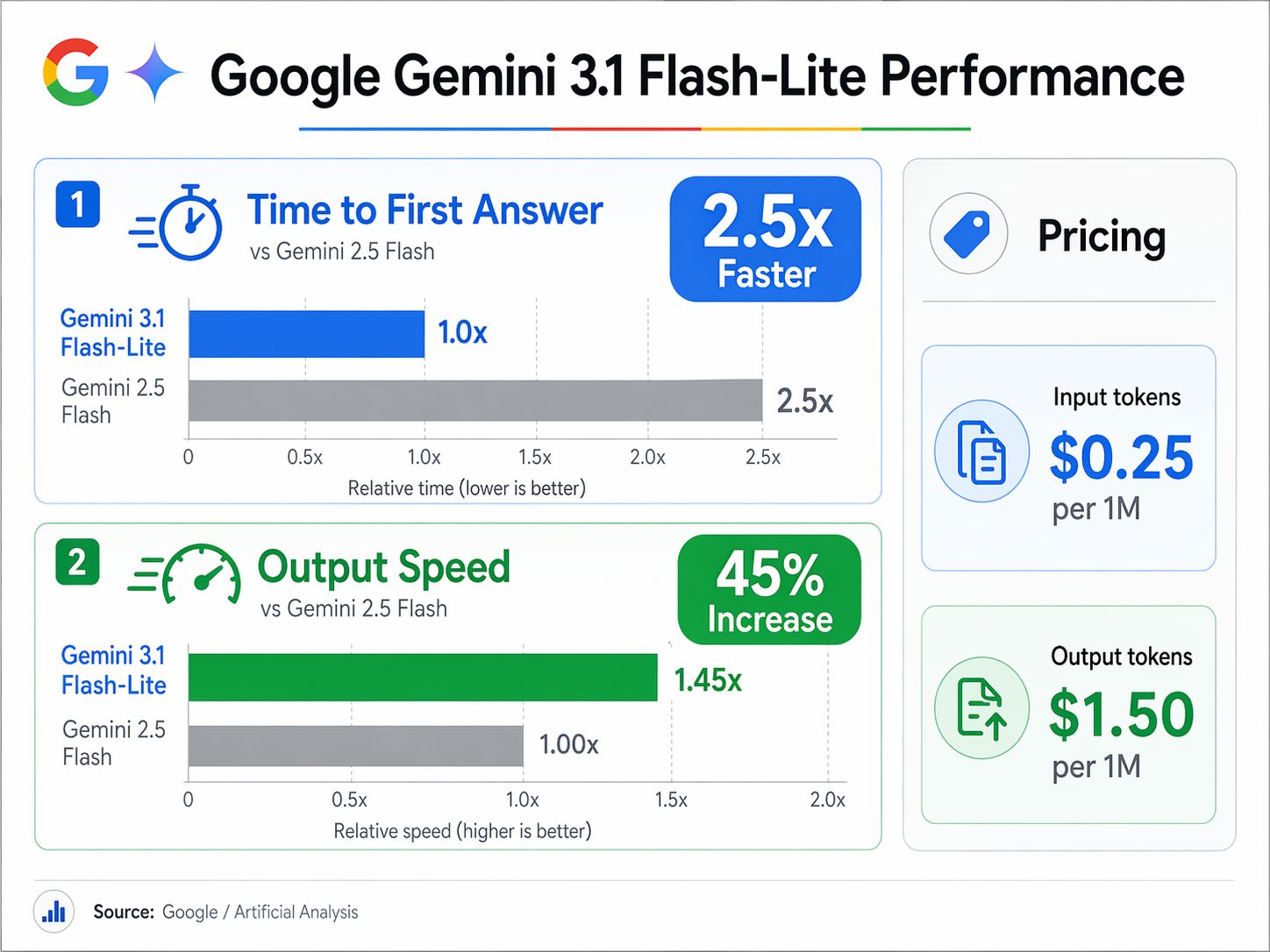

Benchmark data reveals significant performance gains over its predecessors. Gemini 3.1 Flash-Lite is 2.5 times faster in Time to First Answer (TTFA) and offers a 45% increase in overall output speed compared to Gemini 2.5 Flash. The pricing reflects a push for mass-market adoption, set at $0.25 per 1 million input tokens and $1.50 per 1 million output tokens.

Mehul Gupta, an expert from Data Science in Your Pocket, observed that Google is focusing on a practical challenge: how to deliver "strong intelligence at massive scale without premium pricing." This approach underscores the growing demand for models that can handle millions of requests without ballooning operational costs.

Alibaba Democratizes On-Device AI with Qwen 3.5 Small

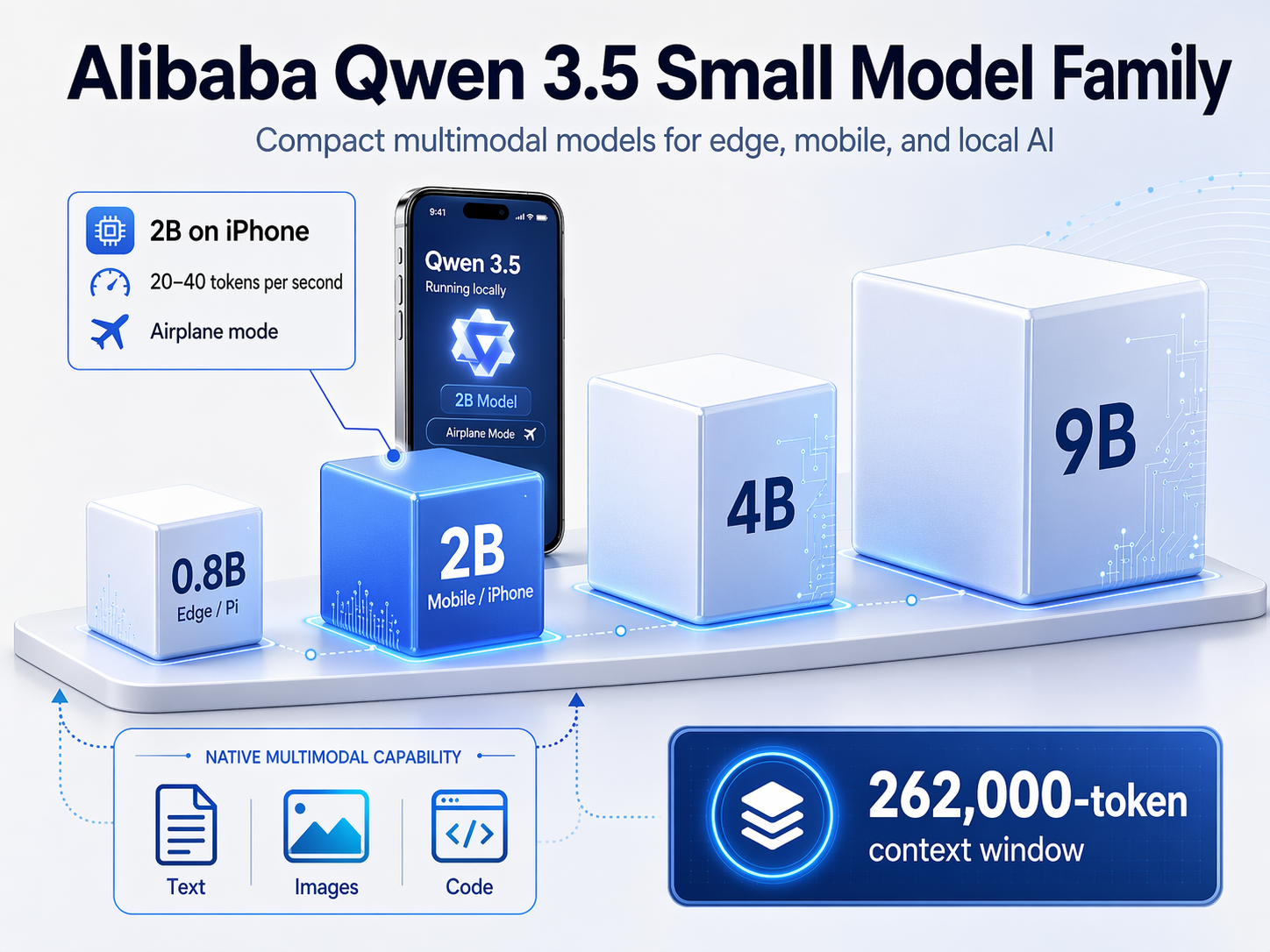

Alibaba Cloud contributed to the day's releases by shipping the Qwen 3.5 Small open-weight model series. This family includes four sizes: 0.8B, 2B, 4B, and 9B parameters. These models are natively multimodal and utilize a novel hybrid architecture designed to run on consumer-grade hardware. Remarkably, the smallest 0.8B model is capable of running on a Raspberry Pi.

In a demonstration of the series' portability, the 2B parameter version was shown running on an iPhone in airplane mode. The model processed both text and images at speeds between 20 and 40 tokens per second, maintaining a memory footprint of approximately 4GB. Despite their small size, these models support a massive 262,000-token context window and a vocabulary of 248,000 tokens covering 201 languages and dialects.

The Strategic Shift Toward Small Language Models (SLMs)

This flurry of releases reflects a pivotal change in the AI landscape. Industry leaders are increasingly recognizing that traditional scaling approaches—simply making models larger—are encountering diminishing returns in both performance and cost-efficiency. Specialized, domain-specific models are now being prioritized over general-purpose giants for tasks like financial analysis or medical diagnostics.

Microsoft’s vice president of GenAI research, Sébastien Bubeck, highlighted this economic reality during the release of the Phi-3 series, stating that "Phi-3 is not slightly cheaper, it's dramatically cheaper." This sentiment is echoed across the industry as companies like Meta and Apple also lean into on-device inference to enhance data privacy and reduce latency.

Unverified reports and expert predictions from organizations like Stanford and Dell suggest that 2026 marks an era where AI evangelism has given way to rigorous evaluation. Success is no longer measured by the size of the training cluster, but by practical utility and real-world impact. As these smaller, faster models become the standard, the democratization of AI will likely accelerate, allowing startups and smaller businesses to deploy sophisticated applications that were previously the exclusive domain of tech titans with massive cloud budgets.