Efficiency Meets Autonomy: The Early 2026 LLM Avalanche Reshapes AI Economics

Recent releases from Alibaba, MiniMax, and Xiaomi drive a 50% drop in inference costs while introducing self-evolving coding agents.

Alibaba’s Qwen team and the startup MiniMax have unleashed a series of high-performance models that effectively reset the baseline for coding efficiency and agentic autonomy. Between February and March 2026, the arrival of Qwen3 Coder Next and the MiniMax M2.7 suite signaled a pivot in the industry: high-tier intelligence no longer requires massive active compute, and the models are beginning to participate in their own architectural evolution.

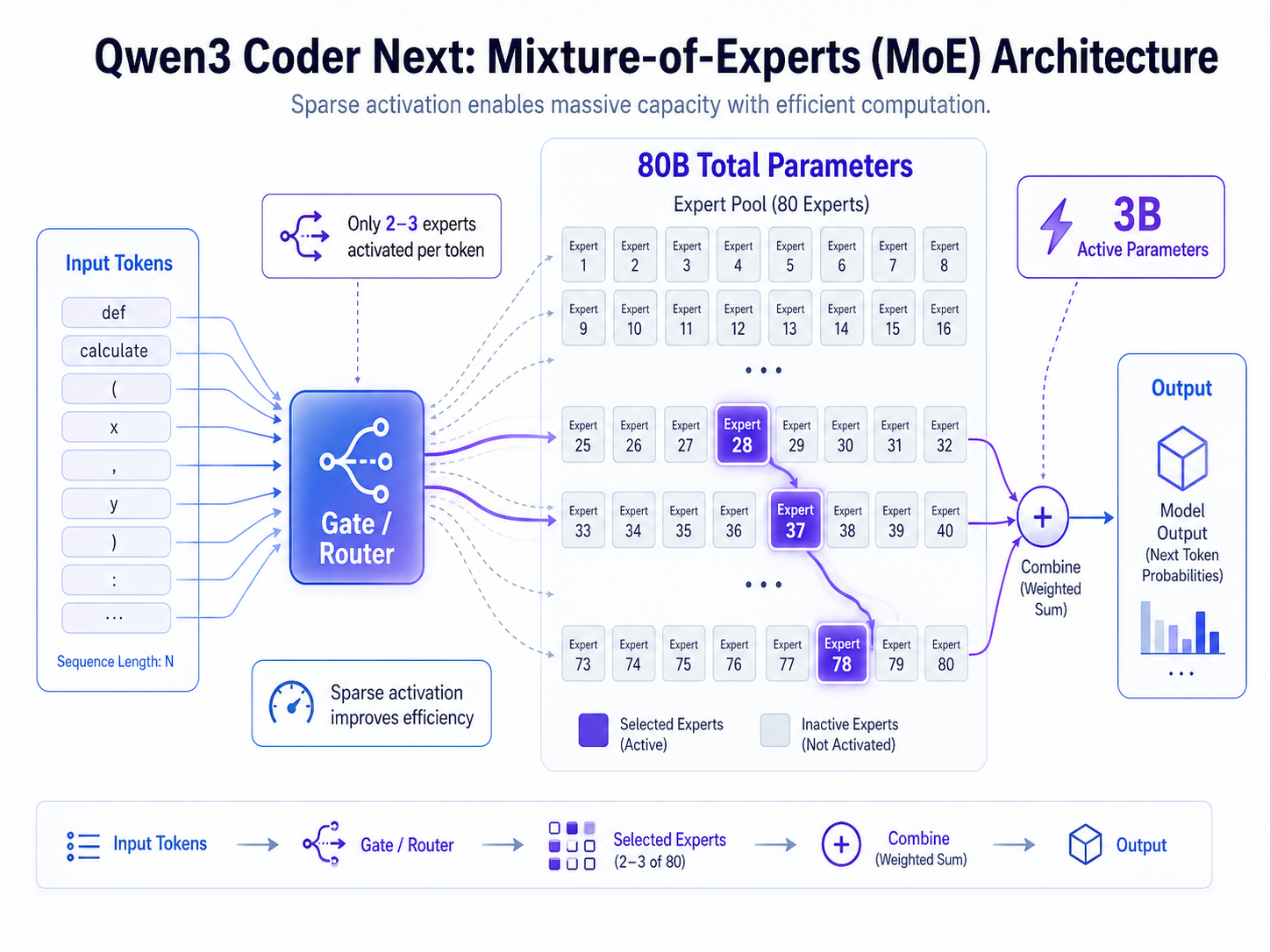

Alibaba’s Qwen3 Coder Next and the MoE Advantage

Released across the first week of February 2026, Alibaba’s Qwen3 Coder Next represents a significant leap in the efficiency of coding-specialized models. Utilizing a Mixture-of-Experts (MoE) architecture, the model boasts a total of 80 billion parameters but activates only 3 billion during any single inference step. This sparse activation allows for high-speed performance without sacrificing depth, enabling a native context window of 262,144 tokens.

According to reports from Alibaba’s Qwen team on the DEV Community, the model achieves performance parity with Claude Sonnet 4.5 in coding tasks despite its low active parameter count. This efficiency makes it a prime candidate for local deployment on consumer-grade hardware, providing individual developers with a sophisticated coding agent that was previously only available via high-latency cloud APIs.

MiniMax and the Rise of Self-Evolving Models

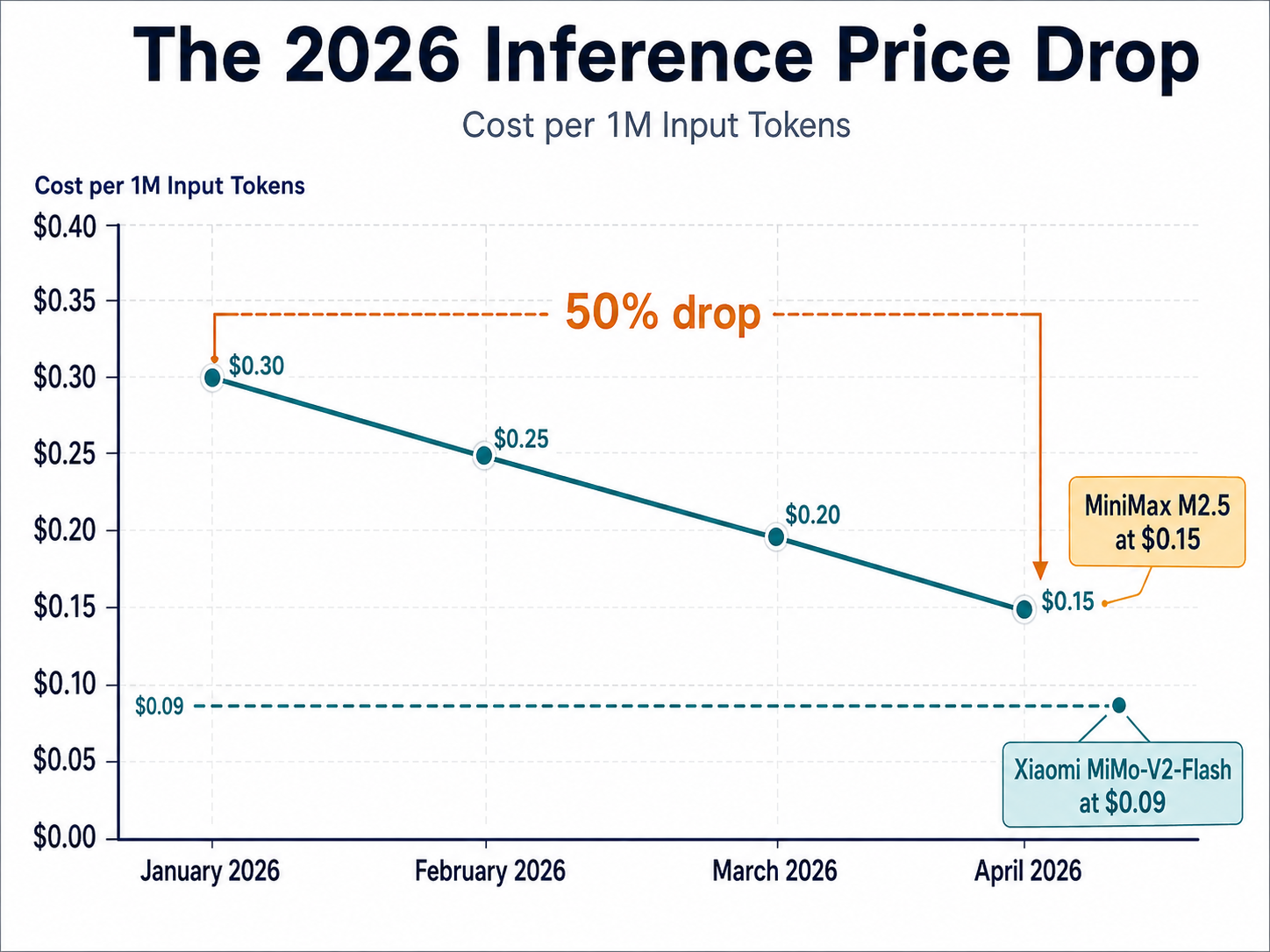

Following closely, the developer MiniMax accelerated the pace with two major releases. The M2.5 model debuted on February 12, featuring a 196,608-token context window and a price point of $0.15 per million input tokens. However, the announcement of MiniMax M2.7 on March 18 truly captured industry attention.

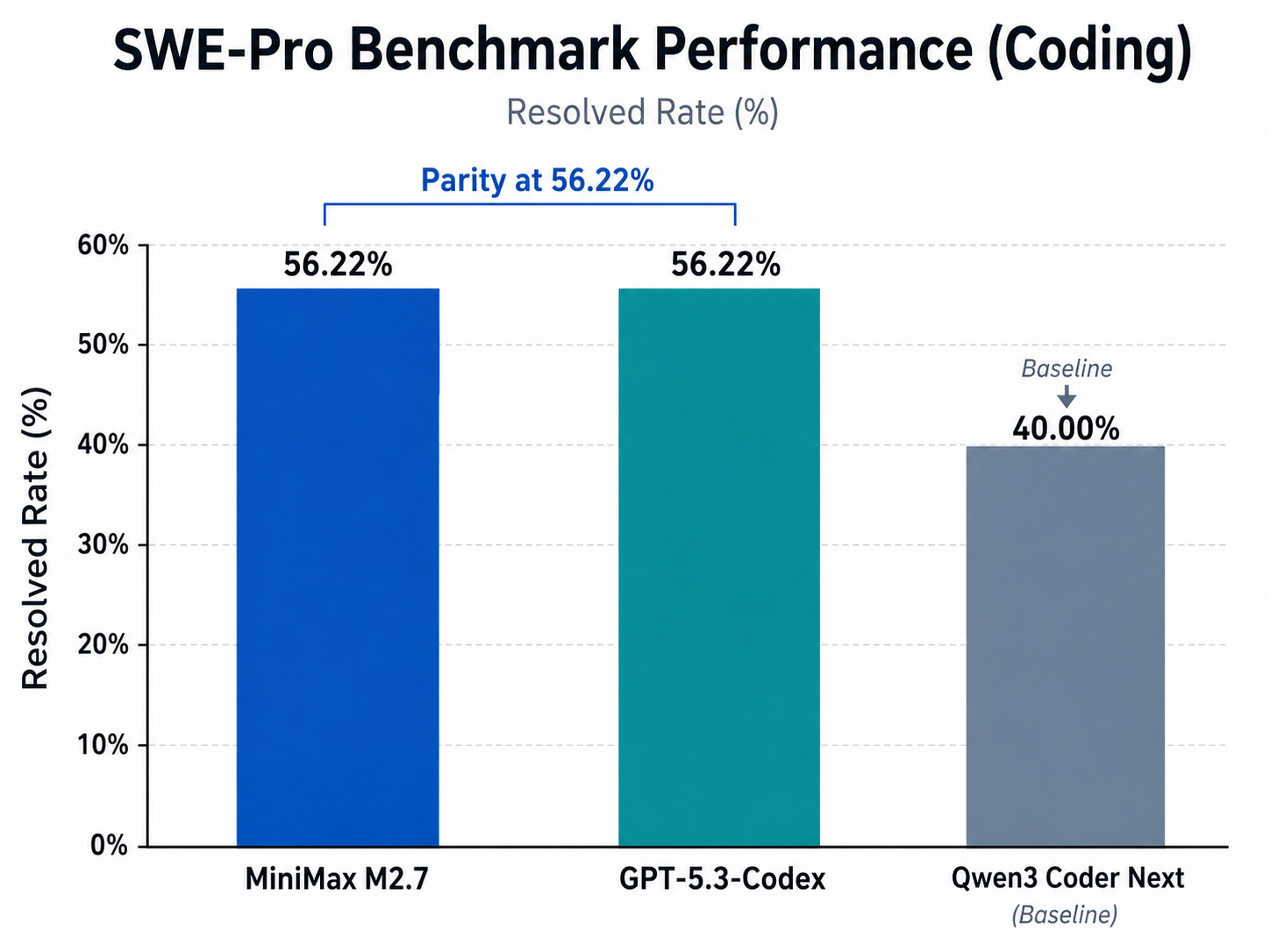

The M2.7 model employs a larger MoE architecture with 230 billion total parameters, activating 10 billion per inference. On the critical SWE-Pro benchmark—a measure of a model's ability to resolve real-world software engineering issues—M2.7 scored 56.22%, matching the performance of OpenAI’s GPT-5.3-Codex.

Perhaps more significant than its raw benchmarks is how M2.7 was developed. MiniMax noted in a blog post that M2.7 is their first model to deeply participate in its own evolution. Reports from Ai Studio on Medium suggest that an internal version of the model was provided a programming scaffold and allowed to operate autonomously. Over 100 rounds, the model analyzed its own failures, modified its code, and ran evaluations on those changes. This self-directed optimization reportedly resulted in a 30% performance improvement without human intervention at each step.

The Pricing Avalanche of 2026

This rapid succession of releases—compounded by Xiaomi’s MiMo-V2-Flash in late 2025—has created a volatile pricing environment. Xiaomi’s model, featuring a 256K context window, entered the market at a disruptive $0.09 per million input tokens.

By April 2026, the sheer volume of high-quality model updates, including Claude 4.7 and GPT-5.5, led to what TokenMix Research Lab described as an 'avalanche.' They observed that the cost for 'good enough' LLM inference dropped approximately 50% compared to January 2026. This downward pressure is democratizing access to agentic workflows, as developers can now afford to run complex, multi-step chains that require millions of tokens of context.

Impact on Agentic Workflows and Industry Trends

The shift toward MoE architectures and higher context windows is fundamentally changing how AI agents are deployed. With MiniMax M2.7-Highspeed offering output speeds of 100 tokens per second—a 66% improvement over standard versions—enterprises are moving away from simple prompt-response systems. Instead, they are building 'Agent Teams' capable of dynamic tool search and long-horizon reasoning.

As the industry moves toward multimodal architectures and 'System 2' reasoning, the focus is shifting from simple text generation to autonomous problem-solving. However, this velocity introduces new risks. The concept of 'LLM perception drift' is becoming a vital metric for brands, as they struggle to maintain stability in their automated systems while the underlying models evolve at an unprecedented rate.

Looking forward, the success of self-improving models like M2.7 suggests a future where human engineers act more as curators of AI-led evolution rather than direct authors of every line of code. The 'April Avalanche' of 2026 may be remembered as the moment when the economic and technical barriers to truly autonomous AI agents finally collapsed.