The End of the Middle: DeepSeek and OpenAI Carve the AI Market into Two Extremes

A sharp divide in AI pricing between OpenAI’s premium ecosystem and DeepSeek’s low-cost open infrastructure is eroding the market's middle ground.

A massive cost chasm has opened in the frontier AI market, effectively hollowing out the 'middle class' of model pricing. As of April 2026, the landscape of artificial intelligence has shifted toward a binary choice: developers must now decide between high-priced, proprietary reliability and aggressively priced, open-weight infrastructure. This bifurcation, led by the diverging strategies of OpenAI and DeepSeek, is forcing a radical reassessment of how AI products are built and monetized.

The Great Pricing Divide

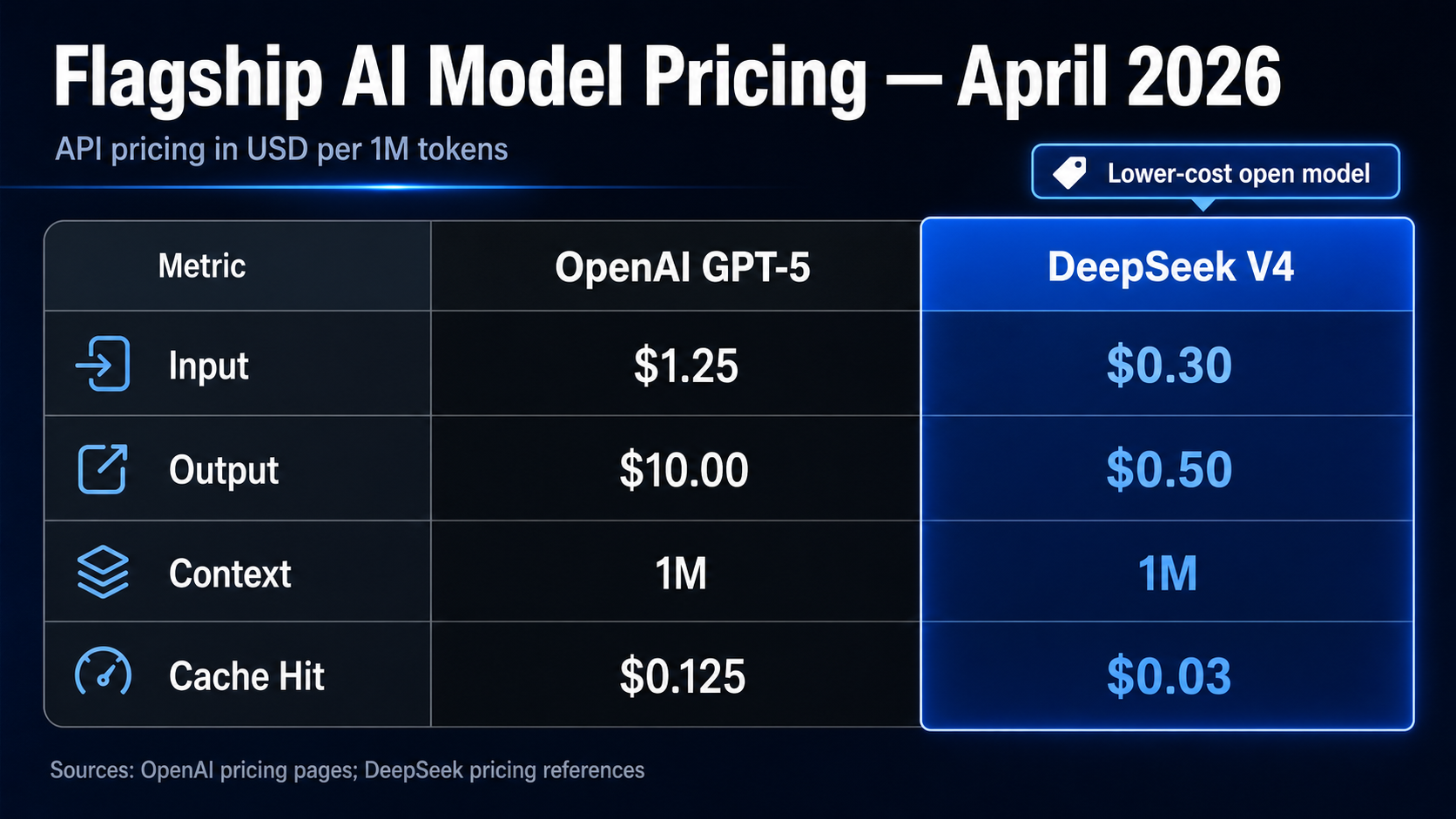

OpenAI has increasingly positioned its flagship models as premium 'outcome' products. Its GPT-5 model, released in mid-2025, currently commands $1.25 per million input tokens and $10.00 per million output tokens. While these rates are a reduction from the $10.00/$30.00 pricing seen during the GPT-4 Turbo era in 2024, they remain a high-margin premium compared to the rest of the market. OpenAI justifies these costs through an ecosystem of enhanced reliability, comprehensive enterprise support, and deep integration.

In stark contrast, DeepSeek has spent the last two years systematically undercutting the industry. Launched in early March 2026, DeepSeek V4 offers GPT-5-class performance at $0.30 per million input tokens and $0.50 per million output tokens. That is roughly a quarter of GPT-5's input rate and one-twentieth of its output rate, or about 1/10th the price for a typical mix of the two. The strategy relies on architectures like Mixture-of-Experts (MoE) and heavy model compression to drive inference costs down to levels previously thought impossible for frontier-grade intelligence.
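To make the gap concrete, here is a minimal sketch that applies the per-million-token prices quoted above to a single workload. The workload size (500 million input tokens and 100 million output tokens per month) and the names `PRICES` and `workload_cost` are assumptions chosen for illustration, not figures from either vendor.

```python
# USD per million tokens, as quoted in this article.
PRICES = {
    "gpt-5": {"input": 1.25, "output": 10.00},
    "deepseek-v4": {"input": 0.30, "output": 0.50},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the model's listed rates."""
    rates = PRICES[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000  # prices are per million tokens


# Hypothetical monthly workload (an assumption, not a measured figure).
INPUT_TOKENS = 500_000_000
OUTPUT_TOKENS = 100_000_000

gpt5_cost = workload_cost("gpt-5", INPUT_TOKENS, OUTPUT_TOKENS)
v4_cost = workload_cost("deepseek-v4", INPUT_TOKENS, OUTPUT_TOKENS)

print(f"GPT-5:       ${gpt5_cost:,.2f}")
print(f"DeepSeek V4: ${v4_cost:,.2f}")
print(f"Ratio:       {gpt5_cost / v4_cost:.1f}x")
```

For this particular mix the GPT-5 bill comes to $1,625 against $200 for DeepSeek V4, about an eightfold gap. Output-heavy workloads widen the gap, while input-heavy ones narrow it.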

The Disappearing Middle Class

This pricing gap has created a vacuum where a balanced middle ground once existed. As Janakiram MSV of The New Stack observed, OpenAI and DeepSeek's pricing bets have split the market, leading to a disappearance of the AI middle class and forcing developers to adapt to a new economy. The segment of the market where businesses could find a comfortable balance of mid-range performance and moderate cost is evaporating.

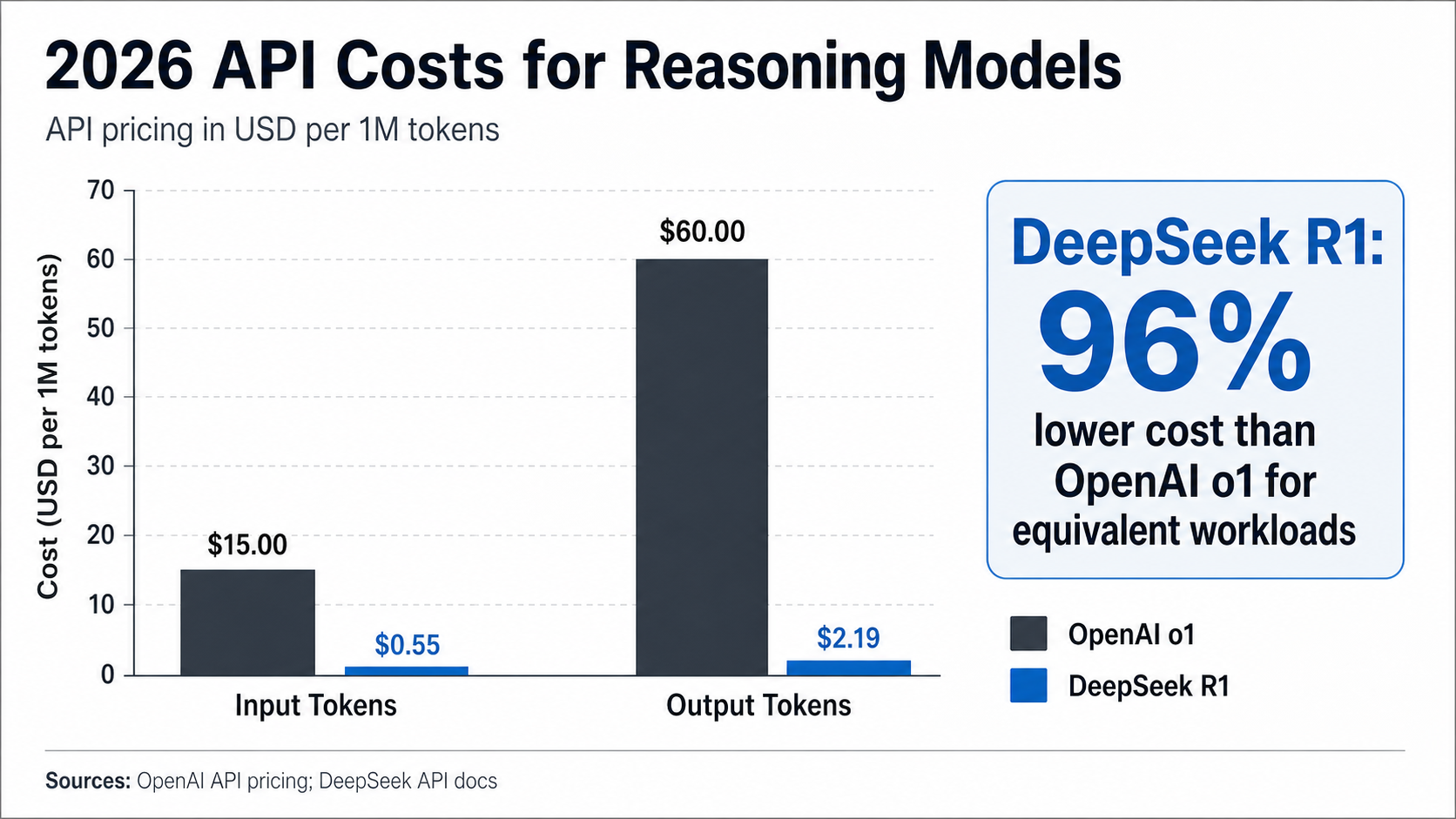

According to analysis from IntuitionLabs, DeepSeek's inference offerings have sent shockwaves through the industry by undercutting incumbent providers' prices by an order of magnitude. This is most evident in the reasoning-model segment. While OpenAI's o1 model series targeted high-complexity tasks at a premium, DeepSeek R1—a dedicated reasoning model—costs only $0.55 per million input tokens and $2.19 per million output tokens. Reports indicate that DeepSeek R1's API is approximately 96% less expensive than OpenAI o1 for equivalent workloads, offering o1-class reasoning at 1/27th the cost.

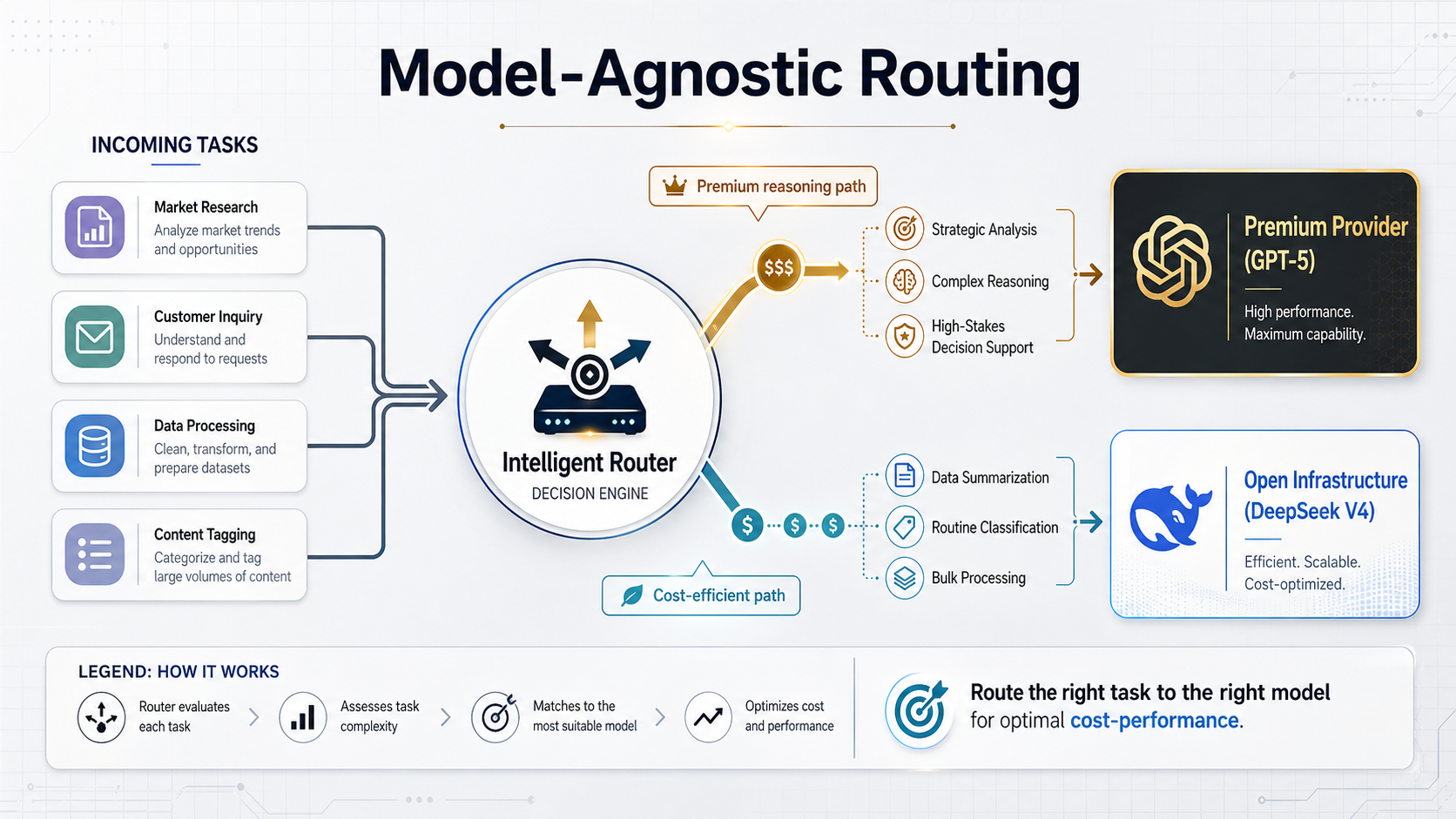

Strategic Adaptation: Model-Agnostic Architecture

The economic reality of 2026 is forcing developers to become 'model-agnostic.' Rather than tethering an entire application to a single provider, engineers are building intelligent routing layers. These systems direct high-complexity, high-stakes tasks to premium models like GPT-5 while offloading high-volume, repetitive tasks to DeepSeek V4 or even OpenAI's budget-tier GPT-4.1 Nano, which costs a mere $0.10 per million input tokens.
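A routing layer of this kind can be sketched in a few lines. The example below is illustrative only: the `Task` fields, the complexity score, the thresholds, and the three-tier split are all assumptions, and the model names are plain labels rather than verified API identifiers. A production router would typically also handle fallbacks, latency, and quality evaluation.

```python
from dataclasses import dataclass

# Input prices in USD per million tokens, as quoted in this article.
INPUT_PRICE = {"gpt-5": 1.25, "deepseek-v4": 0.30, "gpt-4.1-nano": 0.10}


@dataclass
class Task:
    prompt: str
    high_stakes: bool = False  # hypothetical flag set by the calling code
    complexity: float = 0.0    # hypothetical score in [0, 1]


def choose_model(task: Task, premium_at: float = 0.7, budget_below: float = 0.2) -> str:
    """Pick a model label for a task; thresholds are illustrative assumptions."""
    if task.high_stakes or task.complexity >= premium_at:
        return "gpt-5"         # premium tier for complex or risky work
    if task.complexity < budget_below:
        return "gpt-4.1-nano"  # cheapest tier for trivial, repetitive work
    return "deepseek-v4"       # low-cost default for everything else


tasks = [
    Task("Review this contract clause for liability risk", high_stakes=True),
    Task("Summarize this support ticket", complexity=0.4),
    Task("Tag this message as spam or not spam", complexity=0.1),
]
for task in tasks:
    model = choose_model(task)
    print(f"{model:<13} ${INPUT_PRICE[model]:.2f}/M input  {task.prompt}")
```

Keeping the routing decision in one small function means the rest of the application never names a provider directly, which is what lets a team swap models as prices change.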

This shift is not just about saving money; it is about margin expansion. Businesses that originally priced their services based on OpenAI's 2024 economics are seeing dramatic profit increases by integrating DeepSeek’s lower-cost infrastructure. Conversely, those who fail to adapt risk being undercut by competitors who pass these massive infrastructure savings directly to the end user. As the Flowith Blog noted, the single biggest driver of adoption in this new era is cost.
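The margin effect is simple arithmetic, sketched below under stated assumptions. The $20 monthly subscription and the per-user token usage are hypothetical; the per-token rates are the GPT-4 Turbo era and DeepSeek V4 prices quoted earlier in this article. Inference is treated as the only cost, which a real business model would not do.

```python
def inference_cost(input_mtok: float, output_mtok: float,
                   input_price: float, output_price: float) -> float:
    """USD cost given usage in millions of tokens and per-million prices."""
    return input_mtok * input_price + output_mtok * output_price


def gross_margin(revenue: float, cost: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost) / revenue


# Hypothetical product: $20 per user per month, with each user consuming
# 0.5M input tokens and 0.2M output tokens (assumptions for illustration).
REVENUE = 20.00
INPUT_MTOK, OUTPUT_MTOK = 0.5, 0.2

# Priced on 2024 economics: GPT-4 Turbo at $10.00 / $30.00 per million tokens.
cost_2024 = inference_cost(INPUT_MTOK, OUTPUT_MTOK, 10.00, 30.00)
# Same usage served by DeepSeek V4 at $0.30 / $0.50 per million tokens.
cost_v4 = inference_cost(INPUT_MTOK, OUTPUT_MTOK, 0.30, 0.50)

margin_2024 = gross_margin(REVENUE, cost_2024)
margin_v4 = gross_margin(REVENUE, cost_v4)

print(f"2024 pricing: cost ${cost_2024:.2f}, margin {margin_2024:.2%}")
print(f"DeepSeek V4:  cost ${cost_v4:.2f}, margin {margin_v4:.2%}")
```

With these inputs, the per-user inference cost falls from $11.00 to $0.25, lifting gross margin from about 45% to nearly 99% at an unchanged subscription price. A competitor could instead cut its price sharply and still keep a healthy margin, which is the undercutting risk described above.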

The Sovereignty of Open Weights

Beyond the raw numbers, a philosophical divide is also contributing to the death of the middle. OpenAI remains a closed-model ecosystem, offering a 'black box' that provides ease of use but limits developer control. DeepSeek, however, has leaned into the open-weight movement. By allowing developers to download, fine-tune, and self-host models like DeepSeek V3.2 or V4, it has made frontier-level intelligence a viable option for enterprises with strict compliance or privacy requirements.

NxCode reports that DeepSeek's API pricing in 2026 remains the best value proposition in the frontier AI market, but the ability to self-host is what truly democratizes access for mid-sized teams that want to escape the 'tax' of proprietary APIs.

Forward-Looking Implications

As we look toward the remainder of 2026, the 'disappearing middle' may move from a technical phenomenon to a socio-economic one. The concentration of high-end AI power within a few premium providers, contrasted with the commoditization of infrastructure-grade AI, mirrors broader economic trends. AI automation continues to displace mid-level jobs, potentially concentrating wealth at the top of the service provider stack while leaving smaller builders to fight over high-volume, low-margin utilities.

For the AI industry, the era of simple per-token pricing is coming to a close. To survive the price compression driven by DeepSeek and the premium positioning of OpenAI, product builders must move toward value-based or outcome-based monetization. In a world where intelligence is either an expensive luxury or a dirt-cheap utility, being 'in the middle' is no longer a viable strategy.