Diagnosing Agent Failure and Solving Forgetting: New Breakthroughs in LLM Autonomy and Multimodality

New research introduces metrics for LLM agent errors and a training-free framework to prevent catastrophic forgetting in multimodal AI models.

Researchers have developed a mathematical framework to quantify why AI agents fail during complex tasks while simultaneously introducing a training-free solution to the persistent issue of 'catastrophic forgetting' in multimodal models. These two distinct but complementary breakthroughs, published in mid-April 2026, provide the most clear-eyed look yet at the internal mechanics of autonomous agents and the path toward large language models (LLMs) that can learn indefinitely without losing prior knowledge.

Quantifying the Logic of Failure

On April 14, 2026, a team of researchers including Jaden Park, Jungtaek Kim, and Robert D. Nowak published 'Exploration and Exploitation Errors Are Measurable for Language Model Agents.' The paper addresses a fundamental mystery in AI development: when an agent fails to complete a task, is it because it didn't look for the right information (exploration error) or because it failed to use the information it already had (exploitation error)?

Traditionally, answering this question required access to the agent's internal policy—the specific probability distributions it uses to make decisions. For many commercial LLMs, this data is proprietary and inaccessible. The new research introduces a 'policy-agnostic' metric that calculates these errors based solely on the agent's observed action trajectories within controllable environments, such as 2D grid maps and Directed Acyclic Graphs (DAGs).

The results were stark. The study found a strong negative linear relationship, with an R-squared value of 0.947, between the logarithm of exploration error and the success rate. This indicates that an agent's ability to effectively explore its environment is the single most critical predictor of its success. According to the abstract of the study, while LM agents are increasingly deployed in open-ended decision-making from AI coding to physical robotics, systematically distinguishing between exploration and exploitation from actions alone has remained a significant hurdle.

Solving the 'Dual-Forgetting' Phenomenon

Two days after the agent research debuted, a second team including Zijian Gao and Kele Xu introduced 'MAny: Merge Anything for Multimodal Continual Instruction Tuning.' This paper targets Multimodal Large Language Models (MLLMs)—AI that can process both text and images—and addresses the 'catastrophic forgetting' that occurs when these models are taught new tasks in sequence.

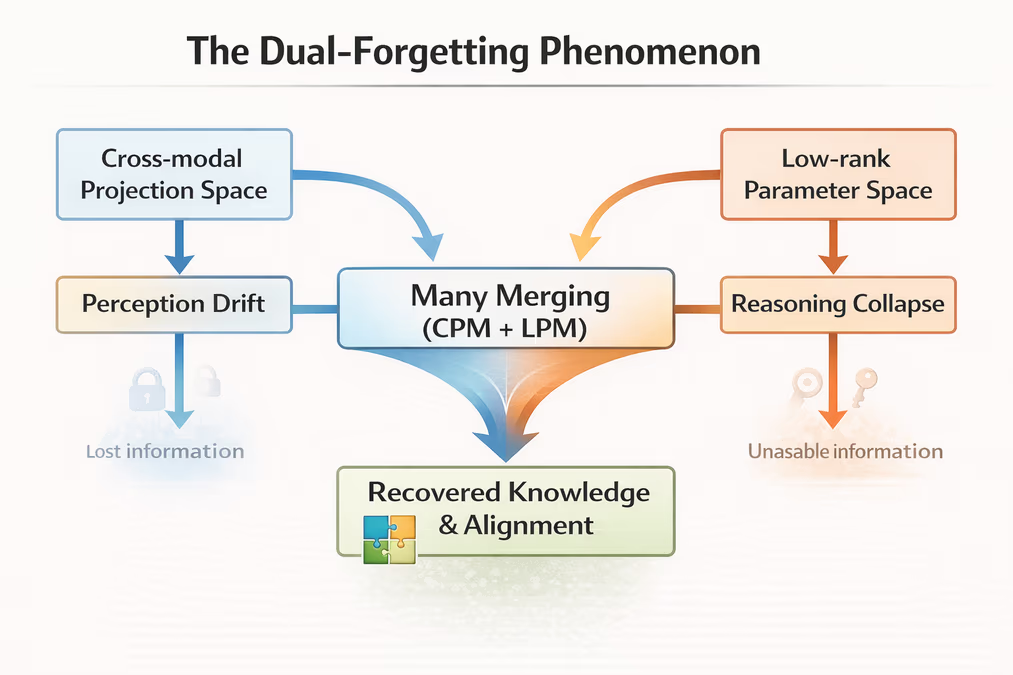

The researchers identified what they call a 'dual-forgetting' phenomenon. As MLLMs adapt to new instructions, they suffer from perception drift in the Cross-modal Projection Space and reasoning collapse in the Low-rank Parameter Space. In simpler terms, the model begins to lose its ability to recognize visual objects accurately while simultaneously losing its ability to reason about them using previously learned logic.

The 'MAny' framework solves this using a training-free paradigm. Instead of expensive retraining, it uses two algebraic mechanisms: Cross-modal Projection Merging (CPM) to restore perceptual alignment and Low-rank Parameter Merging (LPM) to stop task-specific modules from interfering with one another. Because these operations are purely algebraic and run on a standard CPU, they require no additional gradient-based optimization.

The abstract of the 'MAny' paper notes that while existing literature has focused heavily on the language reasoning backbone, this work exposes the neglected perception drift that happens alongside reasoning collapse, representing a critical gap in how we maintain MLLM performance over time.

Performance Gains and Industry Impact

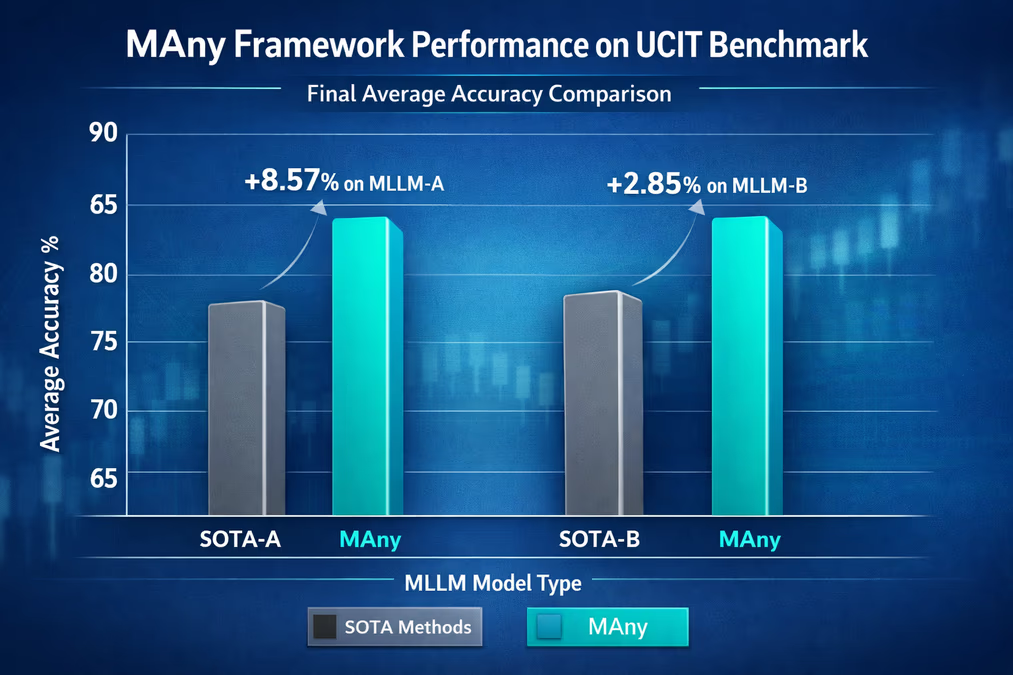

The implications of these findings are measurable. In testing on the UCIT benchmark, the MAny framework demonstrated leads of up to 8.57% and 2.85% in final average accuracy over existing state-of-the-art methods across two different MLLM architectures. This suggests that the training-free merging approach is not just more efficient, but actually more effective at preserving knowledge than traditional fine-tuning.

This shift is part of a broader move toward 'compound AI'—systems where LLMs are not just stand-alone engines but are integrated with planning, memory, and specialized tool-calling modules. By being able to measure exactly where an agent is failing, developers can now move beyond trial-and-error prompt engineering. They can instead design targeted interventions to improve exploration strategies, making AI coding assistants and robotic agents more reliable for real-world deployment.

Looking Ahead: The Path to Continual Learning

The marriage of better error diagnostics and more robust knowledge retention marks a turning point for autonomous systems. If agents can be measured and corrected in real-time, and multimodal models can learn new tricks without forgetting old ones, the barrier to truly 'autonomous' AI begins to dissolve.

We are moving toward a future where MLLMs can evolve within dynamic environments—such as a customer service bot learning a new product line or a robotic vacuum navigating a redesigned home—without the need for massive, centralized retraining. The efficiency of the MAny framework, which avoids the resource-heavy requirements of traditional tuning, suggests that advanced, continually-learning AI will become increasingly accessible and scalable across industries.