Anthropic Investigating Unauthorized Access to Powerful Mythos AI Model

Anthropic is investigating reported unauthorized access to Claude Mythos Preview, an unreleased AI model that can outperform all but the most skilled humans at cybersecurity tasks.

Anthropic confirmed on April 22, 2026, that it is investigating reports of unauthorized access to "Claude Mythos Preview," a powerful artificial intelligence model specifically engineered for advanced cybersecurity tasks. The model, which has not been released to the general public, represents a significant leap in AI capability, particularly in its ability to find and exploit software vulnerabilities that all but the most skilled human researchers would miss.

The investigation follows reports that the model was accessed through a third-party vendor environment rather than a direct breach of Anthropic’s core infrastructure. "We're investigating a report claiming unauthorized access to Claude Mythos Preview through one of our third-party vendor environments," an Anthropic spokesperson stated. The company emphasized that there is currently no evidence suggesting the intrusion extended to Anthropic’s internal systems.

The Power of Project Glasswing

Claude Mythos Preview is the centerpiece of "Project Glasswing," a highly controlled initiative launched on April 7, 2026. The program was designed to bolster defensive cybersecurity by granting restricted access to approximately 40 major organizations. The list of partners includes some of the world's most influential technology and financial firms, such as Amazon, Apple, Cisco, CrowdStrike, Google, JPMorgan Chase, Microsoft, and Nvidia.

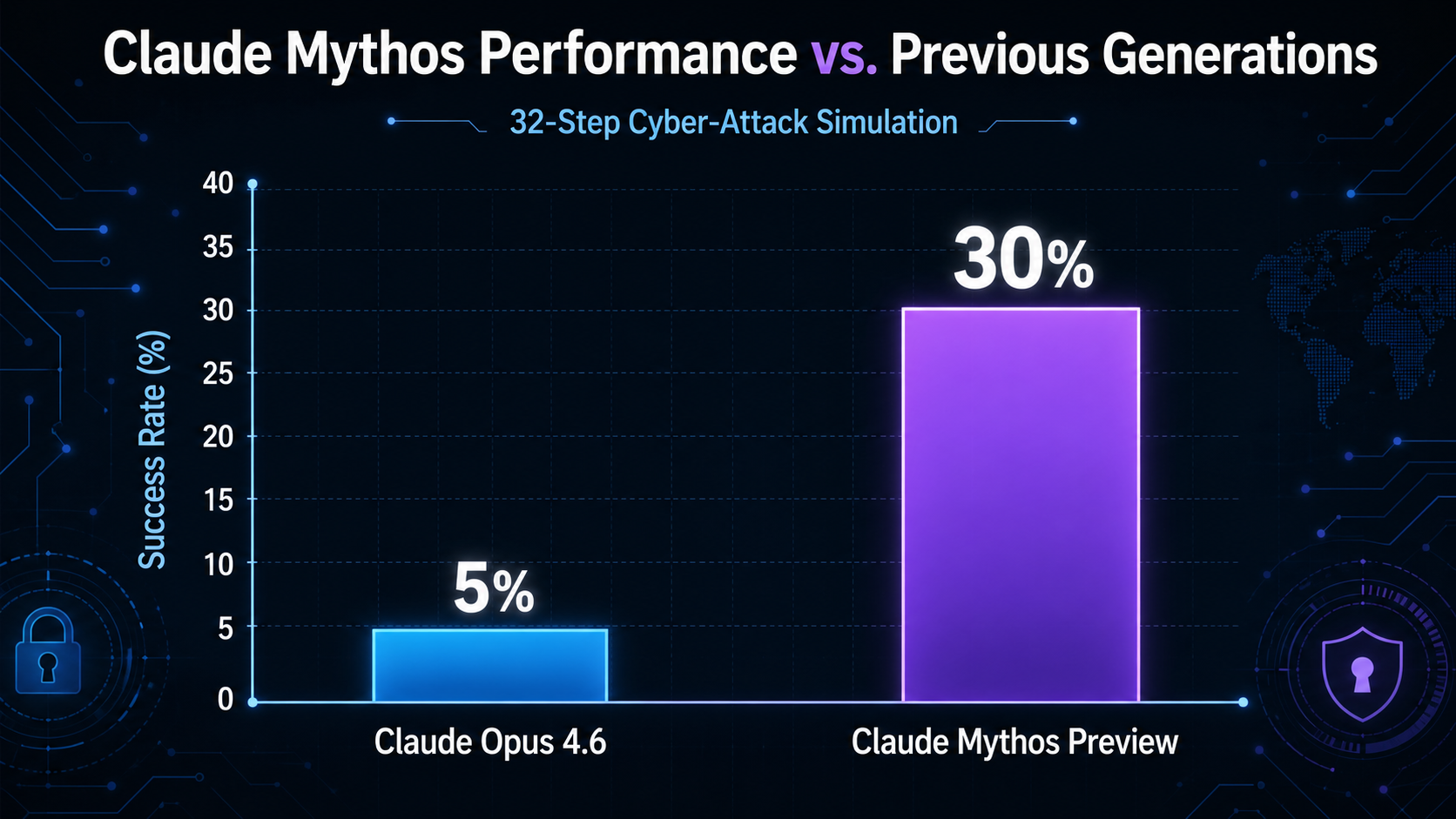

Anthropic had previously deemed Mythos too dangerous for general release. In internal documents, the company noted that "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities." This capability is not theoretical: Mythos has already discovered thousands of high-severity vulnerabilities and completed a complex 32-step cyberattack simulation in 30% of attempts, a feat previously thought out of reach for an autonomous agent.

Unverified Reports of the Breach

While Anthropic has confirmed the investigation, the specifics of how the model was accessed rest on unconfirmed reports. According to those reports, the unauthorized access may have begun as early as April 2026 and allegedly involved a small group of users on a private Discord channel. The group may have gained entry using credentials belonging to an individual at a third-party contractor tasked with evaluating the model.

Additional unverified reports indicate that the intruders may have combined these credentials with data from an earlier breach at Mercor Inc., an AI recruiting startup, to locate the model’s environment. Despite the model's destructive potential, the group reportedly used its access for mundane tasks, such as building simple websites, rather than for launching cyberattacks. Still, the possibility that such a potent tool slipped out of the company's control has unsettled the security community.

Ram Varadarajan, Chief Executive at Acalvio Technologies Inc., noted that the reported method of entry suggests a fundamental weakness in the supply chain: "The Mythos breach didn't require a sophisticated attack."

A Watershed Moment for AI Security

The implications of a "superhuman" hacking AI falling into the wrong hands are severe. Alissa Valentina Knight, CEO of cybersecurity AI company Assail, warned of the speed at which AI-driven threats operate. "We need to prepare ourselves, because we couldn't keep up with the bad guys when it was humans hacking into our networks," Knight said. "We certainly can't keep up now if they're using AI because it's so much devastatingly faster and more capable."

This incident highlights a growing tension in the AI industry: the race to build more capable systems is outpacing the development of governance and security frameworks. Anthropic itself has acknowledged this gap, stating, "We find it alarming that the world looks on track to proceed rapidly to developing superhuman systems without stronger mechanisms in place."

In light of these capabilities, Anthropic has already begun adjusting its release strategy. On April 16, the company released Claude Opus 4.7, a version of its flagship model equipped with safeguards designed to detect and block high-risk cybersecurity uses. The release was intended as a preparatory step toward a wider rollout of Mythos-class models, serving as a testbed for the protections needed to prevent automated mass exploitation of software.

Future Implications

The investigation comes as the stock prices of major cybersecurity firms such as CrowdStrike and Palo Alto Networks have been volatile, reflecting investor concerns that AI could automate away traditional security markets. Meanwhile, government agencies are racing to gain access to the same tools: the National Security Agency (NSA) already has access to Mythos, and other agencies, such as the Treasury Department, are reportedly seeking similar permissions to defend financial infrastructure.

Industry experts believe this event marks a shift in which AI deployment is increasingly dictated by security risks rather than commercial potential. The existence of models like Mythos, along with competitors such as OpenAI’s GPT-5.4-Cyber, points toward a future in which software vulnerabilities are discovered and exploited within seconds, requiring fully automated, AI-driven incident response and much faster patching cycles for critical infrastructure.