Anthropic Bridges the Memory Gap: Claude Managed Agents Gain Persistent Context

Anthropic launches public beta for persistent memory on Claude Managed Agents, enabling cross-session context and learning for agentic AI tasks.

Anthropic launched a public beta for Memory on Claude Managed Agents on April 24, 2026, allowing agents to retain information and learn across multiple sessions. The update addresses the long-standing 'goldfish memory' problem, in which AI models treat every interaction as a blank slate and force users to repeatedly supply background context and project details.

A Filesystem Approach to Intelligence

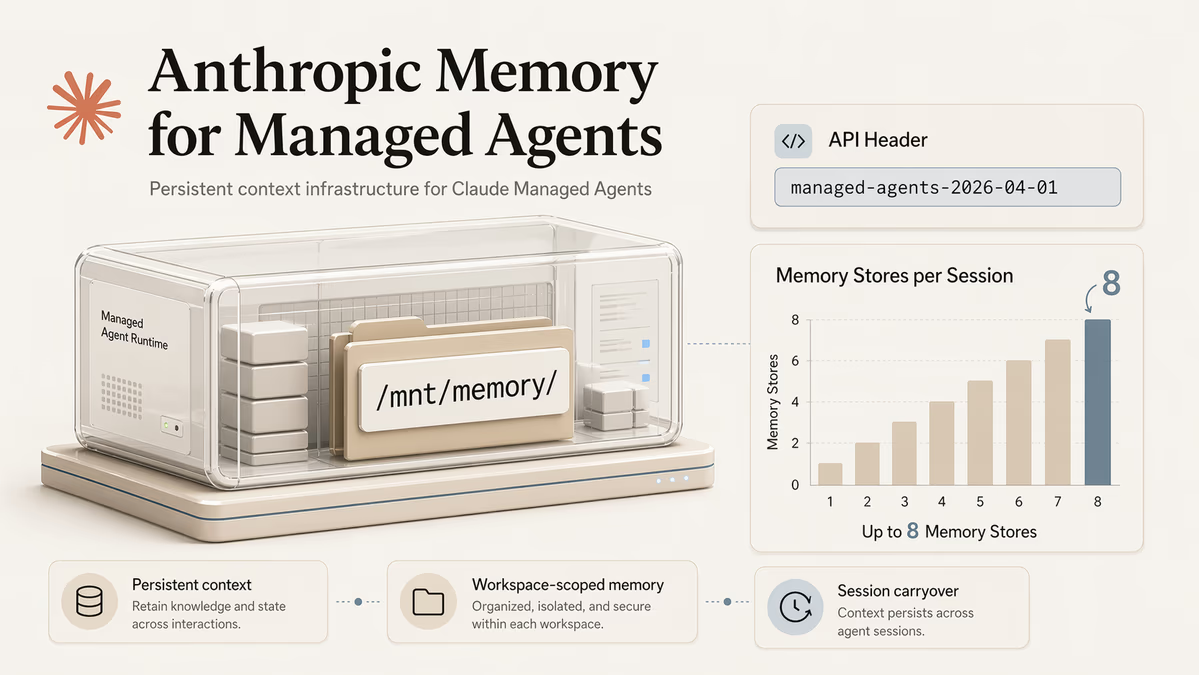

Unlike simple session-based caching, Anthropic's new system implements memory by mounting memory stores directly onto a filesystem. Claude agents can utilize existing bash and code execution capabilities to read from and write to these stores, which are organized as directories under `/mnt/memory/` within the session container. Developers can attach up to eight memory stores per session, providing a modular approach to context management.
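To make the filesystem idea concrete, here is a minimal Python sketch of how notes might be written to and searched within a store directory. It is an illustration only, not Anthropic's implementation: the store name `project-notes`, the `.md` file names, and the helper functions `remember` and `recall` are all hypothetical, and a temporary directory stands in for `/mnt/memory/` so the code runs outside a session container.

```python
from pathlib import Path
import tempfile

# Assumption: inside a real session the root would be /mnt/memory/.
# A temporary directory stands in for it so this sketch runs anywhere.
MEMORY_ROOT = Path(tempfile.mkdtemp(prefix="memory-"))


def remember(store: str, name: str, text: str, root: Path = MEMORY_ROOT) -> Path:
    """Write a note into a store directory (hypothetical layout)."""
    store_dir = root / store
    store_dir.mkdir(parents=True, exist_ok=True)
    note = store_dir / name
    note.write_text(text, encoding="utf-8")
    return note


def recall(store: str, keyword: str, root: Path = MEMORY_ROOT) -> list[str]:
    """Return the names of notes in a store whose text mentions the keyword."""
    store_dir = root / store
    if not store_dir.is_dir():
        return []
    return sorted(
        path.name
        for path in store_dir.glob("*.md")
        if keyword.lower() in path.read_text(encoding="utf-8").lower()
    )


# Hypothetical store and note names, used only for illustration.
remember("project-notes", "build.md", "The build uses Python 3.12 and pytest.")
remember("project-notes", "style.md", "Prefer short functions.")
print(recall("project-notes", "pytest"))
```

Because the memories are ordinary files, the same effect could be had with `cat`, `grep`, or any other tool an agent already knows how to run.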

In a blog announcement, Anthropic stated that agents are most effective when memory builds on the tools they already use. The company noted that with filesystem-based memory, their latest models save more comprehensive, well-organized memories and are more discerning about what to remember for a given task. This allows Claude to rely on the same bash and code execution capabilities that make it effective at agentic tasks.

Security and Developer Control

Recognizing the privacy and security implications of persistent AI memory, Anthropic has built in granular controls. Memories are stored as files, allowing developers to export or delete data via the API. The system uses scoped permissions: a memory store can be designated read-only for a broad, enterprise-wide knowledge base or read-write for user-specific personalization.
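The scoping model can be pictured with a small Python sketch. Everything here is an assumption made for illustration: the `StoreAttachment` type, the `read_only` and `read_write` strings, and the validation helper are hypothetical and do not reflect the actual Managed Agents API schema. Only the eight-store limit comes from the announcement as reported in this article.

```python
from dataclasses import dataclass

MAX_STORES_PER_SESSION = 8  # per-session limit reported in this article
VALID_ACCESS = {"read_only", "read_write"}  # hypothetical mode names


@dataclass(frozen=True)
class StoreAttachment:
    """A memory store attached to a session (hypothetical shape)."""
    name: str
    access: str


def validate_attachments(stores: list[StoreAttachment]) -> None:
    """Reject configurations with too many stores or unknown access modes."""
    if len(stores) > MAX_STORES_PER_SESSION:
        raise ValueError(f"at most {MAX_STORES_PER_SESSION} stores per session")
    for store in stores:
        if store.access not in VALID_ACCESS:
            raise ValueError(f"unknown access mode for {store.name}: {store.access}")


def can_write(store: StoreAttachment) -> bool:
    """Only read-write stores accept new memories."""
    return store.access == "read_write"


# Shared knowledge base is read-only; the per-user store is read-write.
attachments = [
    StoreAttachment("company-handbook", "read_only"),
    StoreAttachment("user-preferences", "read_write"),
]
validate_attachments(attachments)
print([s.name for s in attachments if can_write(s)])
```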

Transparency is a core component of this beta. Every write operation is recorded as a session event in the Claude console. This creates a detailed audit trail, enabling developers to trace exactly when and why an agent learned a specific piece of information, while also providing the ability to roll back or redact memories if necessary. To access these features, Managed Agents API requests must include the `managed-agents-2026-04-01` beta header.
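As a rough sketch, the beta flag could be attached to a request like this in Python. The beta value is the one quoted above. The `anthropic-beta`, `anthropic-version`, and `x-api-key` header names follow the conventions of Anthropic's existing API, and it is an assumption that Managed Agents requests use them the same way. The helper `build_headers` is hypothetical, and no endpoint is shown because the article does not name one.

```python
BETA_FLAG = "managed-agents-2026-04-01"  # value quoted in the article


def build_headers(api_key: str) -> dict[str, str]:
    """Assemble request headers carrying the Managed Agents beta flag.

    Assumption: header names mirror Anthropic's existing API conventions.
    """
    return {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "anthropic-beta": BETA_FLAG,
        "content-type": "application/json",
    }


headers = build_headers("YOUR_API_KEY")
print(headers["anthropic-beta"])
```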

The Path to Persistent Agents

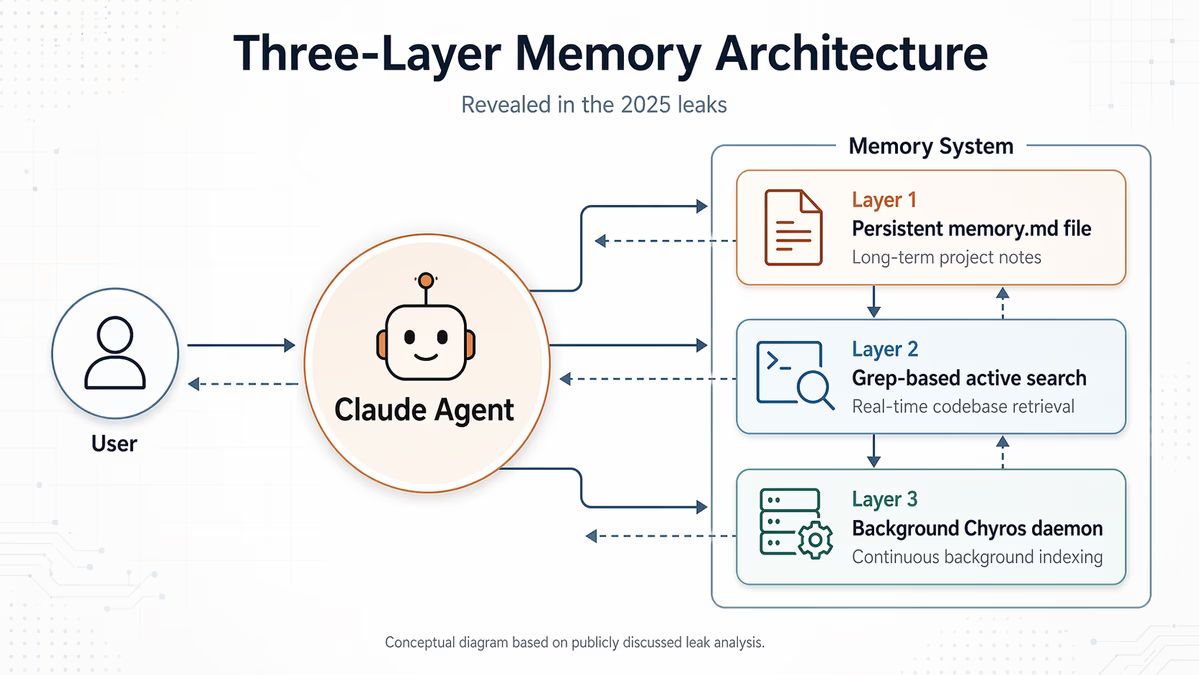

The move to public beta follows a series of incremental developments and leaks. According to unconfirmed reports from mid-2025, fragments of the Claude Code source code first hinted at a three-layer memory system. This architecture allegedly included a persistent `memory.md` file, a grep-based active search layer, and an unshipped background process referred to as the 'Chyros daemon.' Anthropic later acknowledged these leaks, which signaled an early internal focus on structured memory management.

Since then, the company has steadily expanded these capabilities. In late 2025, Anthropic introduced a general memory tool for the Claude Developer Platform and later expanded automatic recall to Pro and Max subscribers. The release of Claude Opus 4.7 on April 16, 2026, further refined the model's ability to handle filesystem-based notes across multi-session workflows, setting the stage for the current Managed Agents integration.

Competitive Stakes and Market Reaction

The integration of persistent memory is a direct response to a highly competitive landscape. OpenAI's ChatGPT already offers persistent memory features that tailor responses based on user history, and other industry players such as Google DeepMind and xAI are racing to refine their own agentic memory layers. Anthropic CEO Dario Amodei has suggested that continual learning in AI models will ultimately not be as difficult as it might currently appear. The sentiment is echoed across the industry: OpenAI CEO Sam Altman has indicated that the real breakthrough in AI lies not in better reasoning alone, but in the implementation of persistent memory.

Market observers are already pricing in the impact of this update. A Polymarket contract predicting whether Anthropic would have the best-performing AI model by the end of April 2026 saw a 15% upward shift in its projection immediately following the announcement. The feature is expected to bolster Claude's standing on benchmarks like the LMSYS Chatbot Arena leaderboard, which increasingly reflects real-world multi-turn interaction quality.

Future Implications

As the industry moves toward 'agentic' AI, meaning models that can plan and execute complex tasks over days or weeks, memory is no longer an optional feature; it is a foundational requirement. The ability of agents to share learned information with other agents within an organization could transform enterprise workflows, moving AI from a simple chatbot to a collaborative, long-term digital coworker. With this public beta, Anthropic is signaling that the era of the forgetful AI is rapidly coming to an end, replaced by systems that grow more capable with every interaction.