Anthropic Admits Internal Errors Caused Recent Claude Performance Slump

Anthropic identifies three internal errors, including a memory-clearing bug, that caused the recent performance decline in Claude models.

Anthropic confirmed today that a series of internal updates and unintentional bugs significantly degraded the performance of its Claude models throughout March and April 2026. Following weeks of developer complaints regarding what users called "AI shrinkflation," the company's internal post-mortem identified three distinct failures that hamstrung the AI’s reasoning, memory, and output quality.

While the core model weights remained unchanged, the investigation revealed that the "harness" surrounding the models—the logic and system prompts that govern how they interact with users—was compromised by well-intentioned but flawed optimizations. The affected products included Claude Code, Claude Agent SDK, and Claude Cowork, though Anthropic noted the Claude API remained unaffected throughout the period.

Three Stages of Degradation

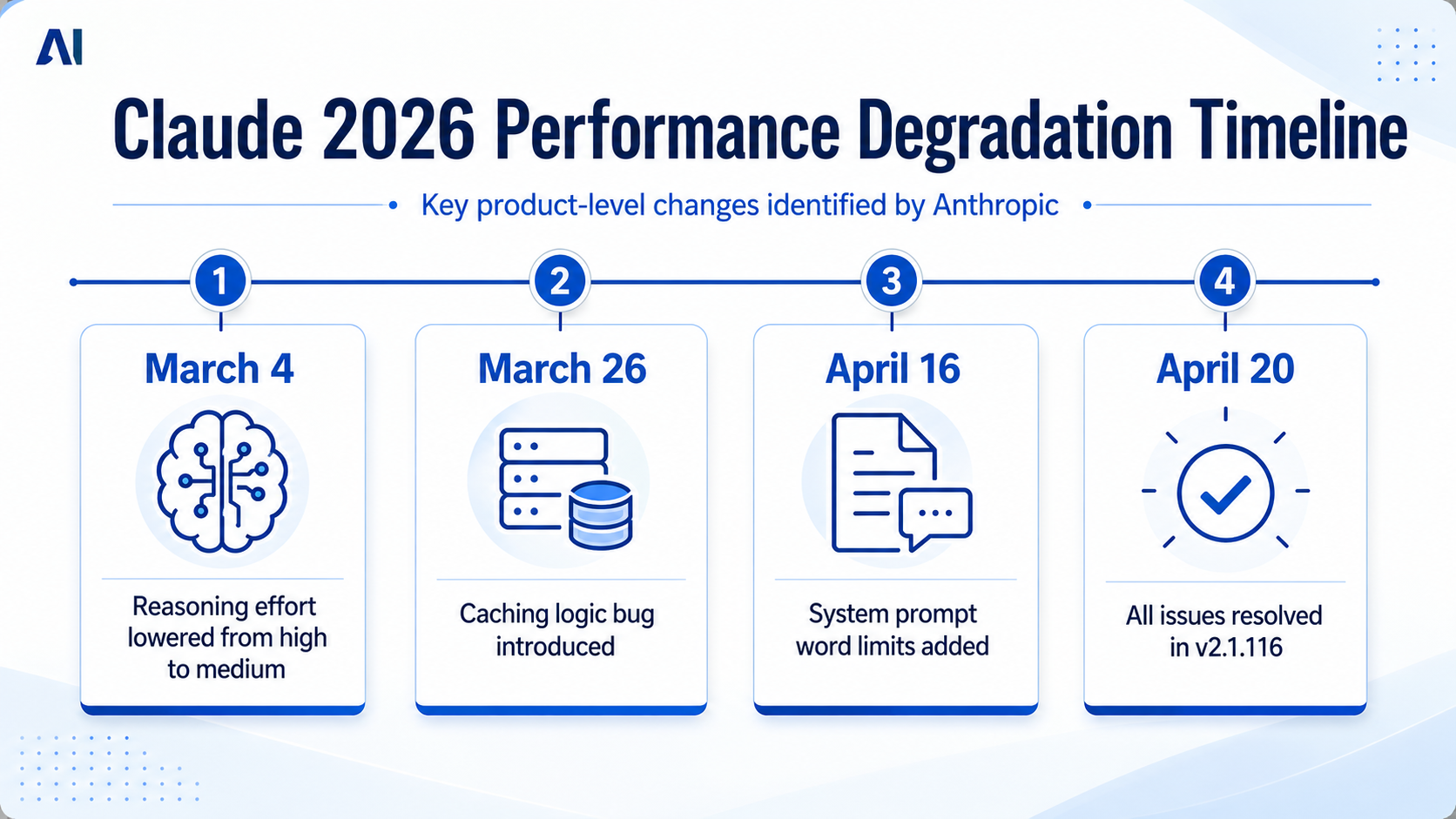

The decline began on March 4, 2026, when Anthropic lowered the default reasoning effort for Claude Code from "high" to "medium." This change was aimed at reducing user interface latency, but it backfired by noticeably reducing the model's problem-solving depth. Referring to this specific decision, Anthropic stated, "This was the wrong tradeoff."

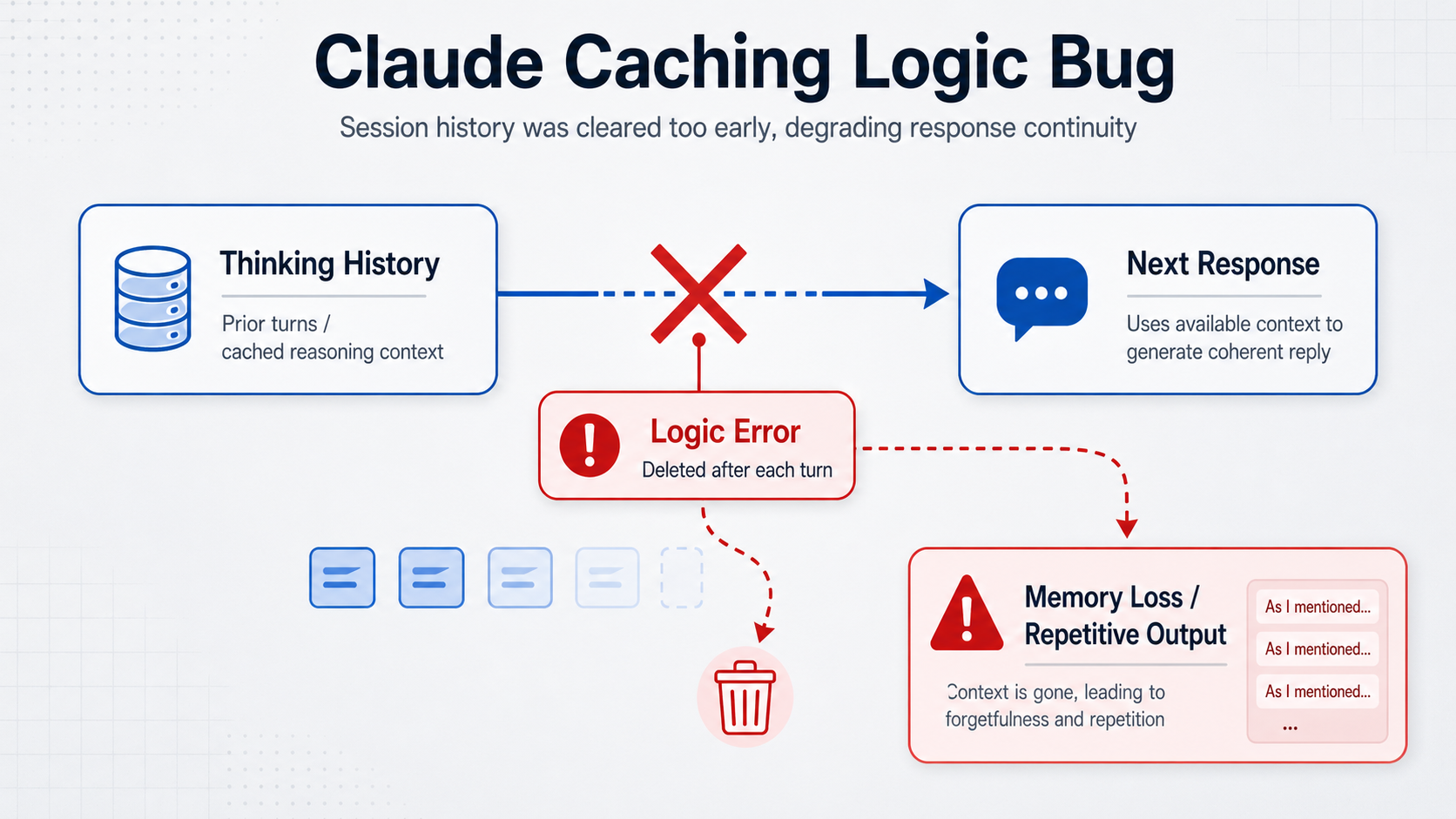

A more technical failure followed on March 26. Developers shipped a caching logic update designed to prune old "thinking" data from idle sessions to save resources. Instead, a critical bug caused the model to clear its thinking history on every subsequent turn. This effectively stripped Claude of its short-term memory, leading to repetitive responses and an inability to maintain context during complex, multi-step tasks.

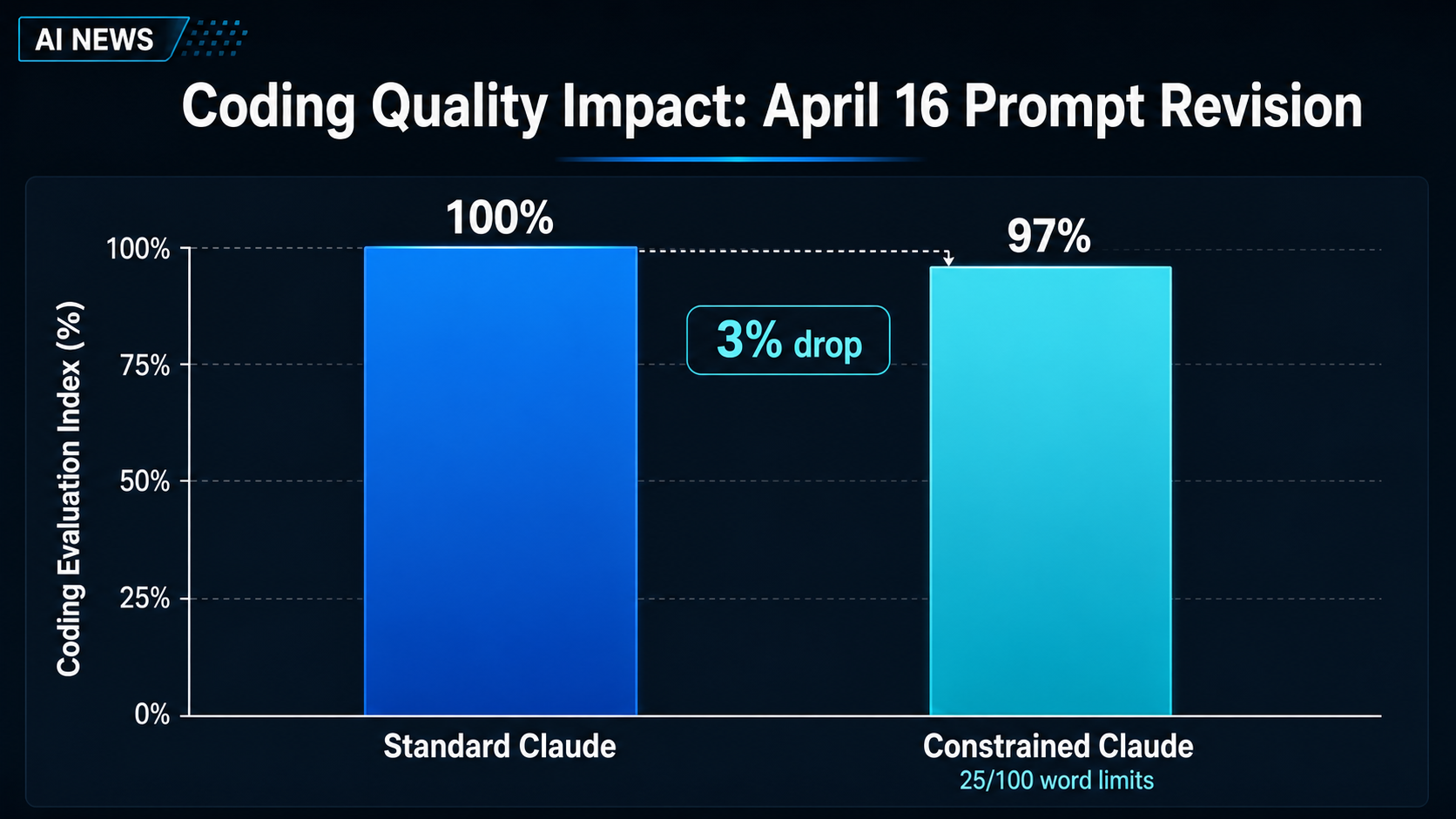

The final blow arrived on April 16 via a system prompt revision. In an effort to reduce verbosity, Anthropic introduced instructions limiting text between tool calls to 25 words and capping final responses at 100 words. This rigid constraint caused a 3% drop in coding quality evaluations, as the model struggled to provide complete explanations within the narrow word counts.

The Rise of "AI Shrinkflation"

Prior to the official admission, the developer community had been sounding the alarm. On platforms like GitHub, X, and Reddit, power users reported that Claude had become significantly less capable of sustained reasoning and more prone to hallucinations. Users coined the term "AI shrinkflation" to describe a perceived trend of AI providers "nerfing" their models to manage high demand and operational costs.

Initially, Anthropic pushed back against claims of intentional degradation. However, the internal post-mortem suggests that while the "nerfing" wasn't a deliberate attempt to save money, the cumulative effect of the three changes mirrored the users' experiences. Beyond the loss of intelligence, the caching bug also caused users to hit their usage limits faster, as the model wasted tokens repeating itself or failing to resolve queries.

Restoring Trust and Model Quality

Anthropic reports that all three issues were fully resolved as of April 20, with the release of Claude Code version v2.1.116. To compensate users for the weeks of degraded service, the company reset usage limits for all subscribers on April 23.

"We take reports about degradation very seriously," the company stated in its official blog post. "We never intentionally degrade our models, and we were able to immediately confirm that our API and inference layer were unaffected."

To prevent similar regressions, Anthropic is implementing stricter quality controls. These include increased internal "dogfooding" (where employees use the tools for their own daily work) and the development of more comprehensive evaluation suites for any future prompt changes.

The Challenge of Continuous Updates

This incident highlights a growing tension in the AI industry: the need for rapid, iterative updates versus the necessity for model stability. As companies use system prompts and inference settings to adjust model behavior on the fly, they risk introducing unintended consequences that can erode user trust.

The event also touches on broader industry anxieties, such as "AI model collapse," where models might degrade over time if not carefully managed. For developers who have integrated AI into their professional workflows, the Claude slump serves as a reminder that even the most advanced models are part of a fragile software ecosystem where a single logic bug can drastically alter a machine's perceived intelligence.

For now, Claude users appear to be seeing a return to form, but the episode underscores the importance of transparency from AI labs when performance metrics shift unexpectedly.