Amazon's Custom AI Chips Pose Challenge to Nvidia's Dominance

AWS accelerates its custom AI chip strategy with Trainium3 and 'Project Rainier,' a cluster of nearly 500,000 chips that challenges Nvidia’s hardware dominance.

Amazon Web Services (AWS) has turned a 1,200-acre site in Indiana into the front line of its fight against Nvidia’s hardware dominance. In October 2025, the cloud giant activated 'Project Rainier,' a massive AI training cluster that deploys nearly 500,000 of its custom Trainium2 chips to train Anthropic’s Claude models. The activation, followed by the December 2025 unveiling of the more powerful Trainium3 chip at AWS re:Invent, underscores Amazon's aggressive vertical integration strategy to reduce its reliance on third-party silicon.

The Next Generation: Trainium3

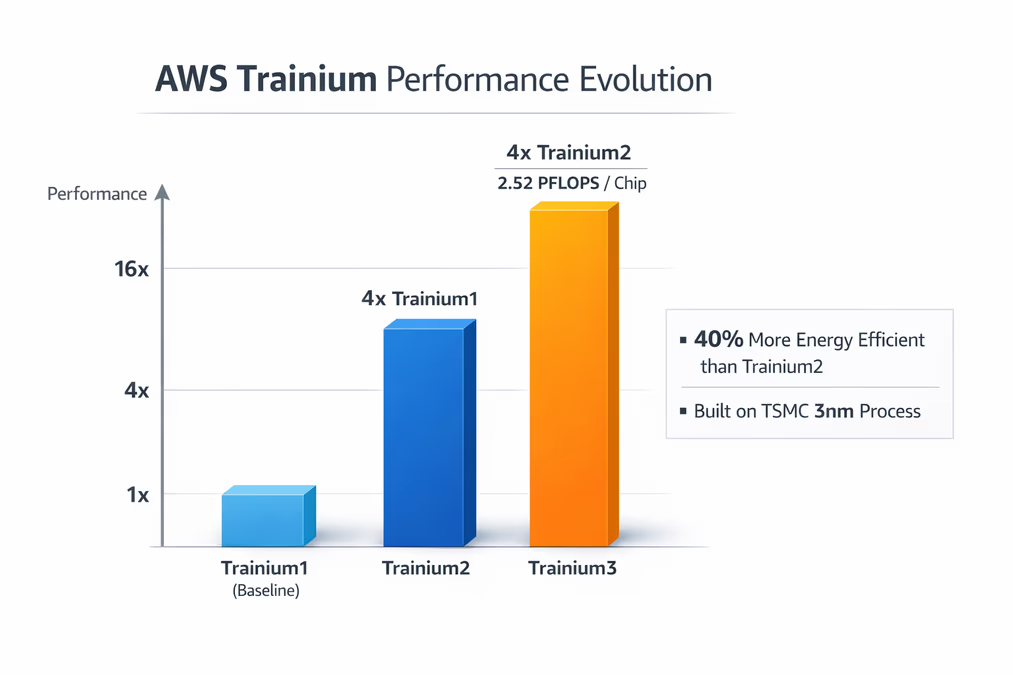

At the heart of Amazon’s recent push is the Trainium3 processor. Manufactured on a cutting-edge TSMC 3nm process, the new chip delivers 2.52 PFLOPS of FP8 performance. According to Amazon, this represents a fourfold performance increase and a 40% improvement in energy efficiency compared to the second-generation Trainium2. AWS CEO Matt Garman stated that "Trainium3 offers the industry's best cost efficiency in AI training and inference," positioning the hardware as a direct alternative to Nvidia’s high-margin GPUs.

AWS claims that Trainium2 already provides 30% better price-performance than standard GPUs, and it expects Trainium3 to extend that advantage to a range of 30% to 40%. The company attributes this efficiency to a strategy of developing its AI accelerators as one unified product line. Rahul Kulkarni, AWS’s Director of Compute, noted that the company can achieve significant optimization and cost benefits by "doubling down on Trainium as a single, unified product."
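To put the price-performance percentages in cost terms, here is a minimal sketch. It assumes that "price-performance" means work delivered per dollar, a definition the article does not spell out, and the function name is hypothetical. Under that assumption, a 30% gain does not mean a 30% lower bill; the same workload costs 1 / 1.30 of what it did before.

```python
def cost_reduction_for_same_work(price_performance_gain: float) -> float:
    """Fractional cost saving for a fixed workload.

    Assumption (not from AWS): a gain of 0.30 means 30% more work per dollar,
    so the same work costs 1 / (1 + 0.30) of the baseline.
    """
    if price_performance_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 - 1.0 / (1.0 + price_performance_gain)


if __name__ == "__main__":
    # The two ends of the range quoted in the article.
    for gain in (0.30, 0.40):
        saving = cost_reduction_for_same_work(gain)
        print(f"{gain:.0%} better price-performance -> "
              f"{saving:.1%} lower cost for the same work")
```

Read this way, the quoted 30% to 40% range corresponds to roughly 23% to 29% lower spend on an unchanged workload. If AWS defines the metric differently, the conversion would change.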

A $20 Billion Silicon Business

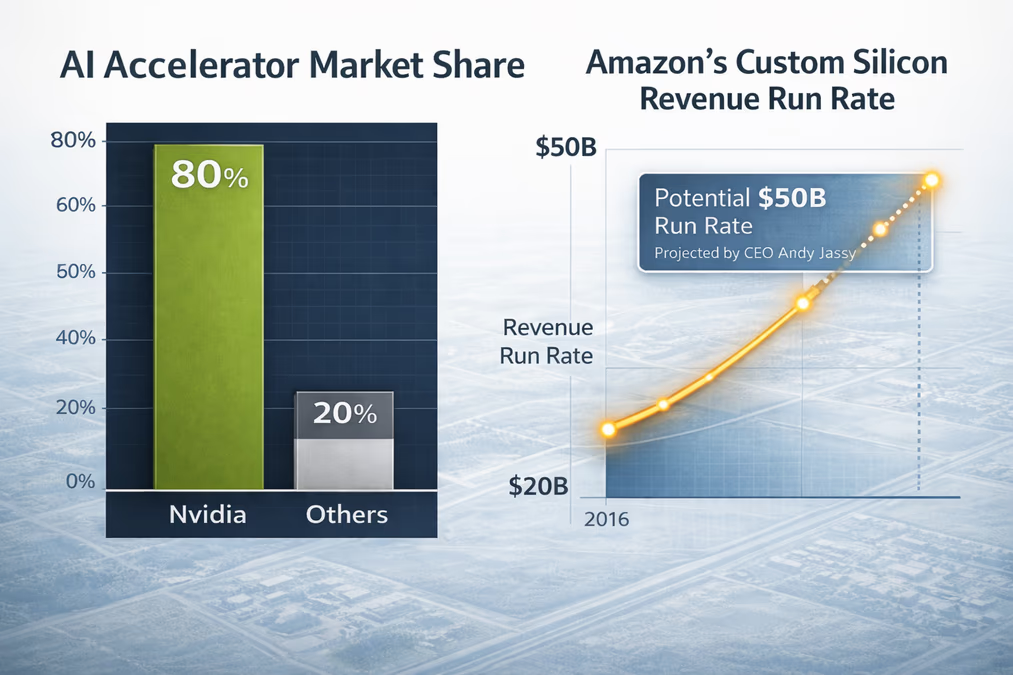

Amazon’s custom silicon journey began in earnest with the $350 million acquisition of Annapurna Labs in 2015. Today, that investment has evolved into a powerhouse business unit encompassing Graviton CPUs, Trainium AI accelerators, Inferentia inference chips, and Nitro networking components. This silicon portfolio currently operates at an annual revenue run rate exceeding $20 billion.

Amazon CEO Andy Jassy highlighted the scale of this division, suggesting that if it were a standalone company selling to third parties, its annual run rate could reach approximately $50 billion. "There's so much demand for our chips that it's quite possible we'll sell racks of them to third parties in the future," Jassy said, indicating that Amazon may eventually expand from providing compute through its cloud to selling silicon directly as a merchant vendor.

The Shadow of Nvidia

Despite Amazon's rapid scaling, Nvidia remains the undisputed leader with roughly 80% of the AI accelerator market. Nvidia’s dominance is built not just on raw hardware performance, such as that of its H100 GPUs and Blackwell architecture, but also on its CUDA software ecosystem, which has become the industry standard for AI development.

While Amazon’s vertical integration aims to offer lower operational costs, unverified reports based on internal startup documents suggest that the transition isn't seamless for everyone. According to these reports, startups such as Cohere, Stability AI, and Typhoon have found Trainium and Inferentia to be less competitive for specific tasks. One startup's unconfirmed internal findings reportedly showed Nvidia's A100 GPUs to be up to three times more cost-efficient than Inferentia2 for certain inference workloads. Similarly, Stability AI reportedly found that Trainium2 struggled to match the latency benchmarks of Nvidia’s H100 GPUs.

Amazon acknowledges that while its chips offer compelling price-performance, some customers still prefer Nvidia's established ecosystem. Consequently, AWS continues to support Nvidia's latest hardware alongside its own offerings, maintaining a multi-vendor cloud environment.

Strategic Importance and Industry Impact

For many in the industry, the development of proprietary silicon is no longer optional for hyperscalers. "Owning that AI hardware is just so important for a variety of reasons and only someone like AWS can pull it off," said Ethan Simmons, Managing Partner of PTP. Jimmy Chui, CEO of ClearScale, added that AWS’s own chips will be "extremely beneficial... especially as these AI workloads increase."

By controlling the entire stack from silicon to cloud services, Amazon mitigates supply chain risks and gains the ability to tailor hardware to specific large language model architectures. Other tech giants are making the same move: Google has its Tensor Processing Units (TPUs), and Meta is developing its Meta Training and Inference Accelerator (MTIA).

Looking Ahead

With Trainium3 scheduled to enter active use in early 2026 and Trainium4 already projected for late 2027, Amazon is maintaining a rapid cadence of hardware releases. With the broader AI chip market projected to exceed $100 billion, the success of Amazon’s custom silicon will depend on its ability to convince developers to move beyond the CUDA ecosystem and adopt the AWS Neuron SDK. If successful, Amazon could fundamentally shift the economics of AI, turning a massive internal infrastructure cost into one of the most profitable silicon businesses in history.