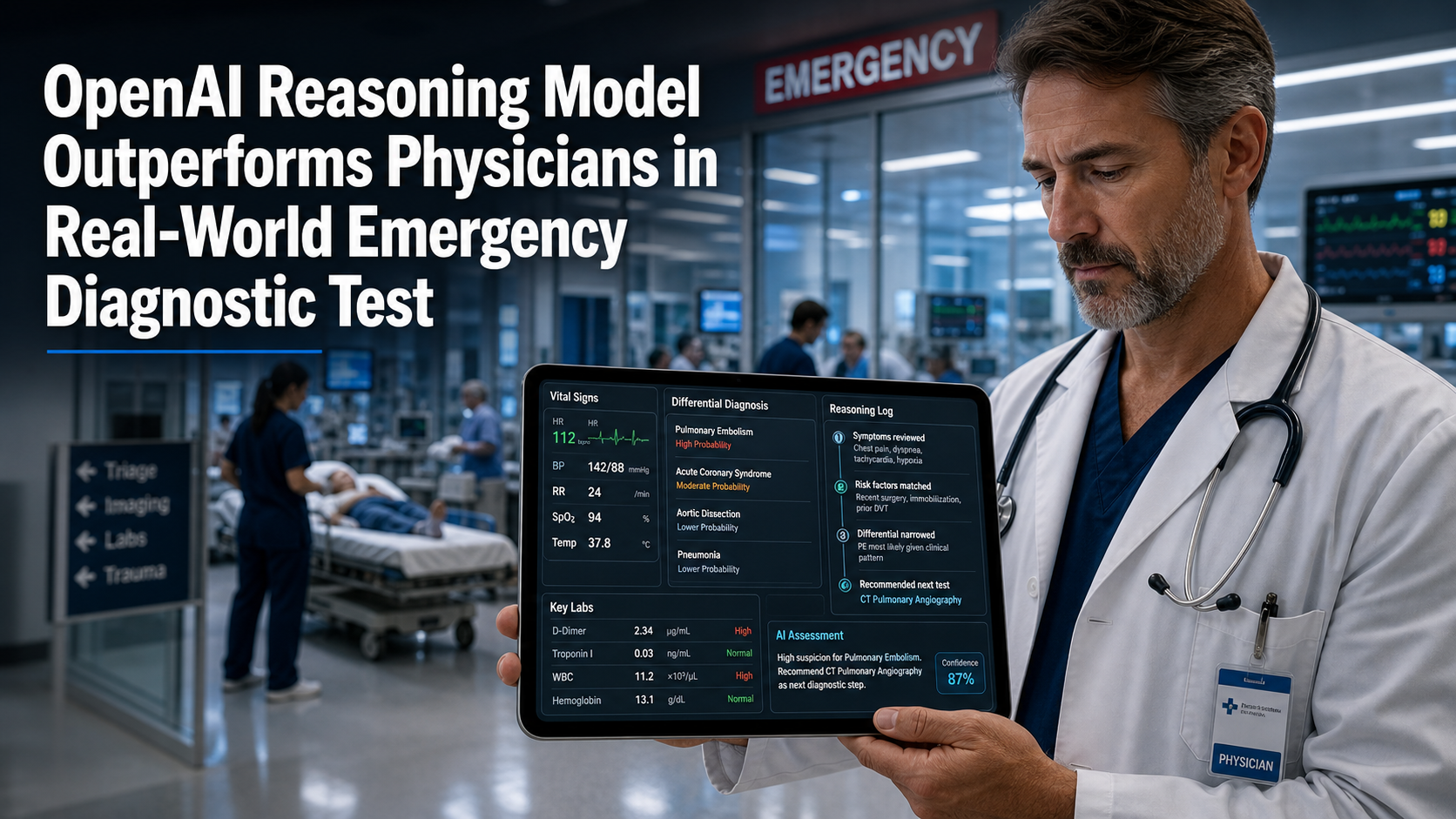

OpenAI Reasoning Model Outperforms Physicians in Real-World Emergency Diagnostic Test

A landmark study in Science reveals OpenAI’s o1 model significantly outperformed expert physicians in real-world emergency department diagnostic accuracy.

A research team from Harvard Medical School and Beth Israel Deaconess Medical Center has found that a new class of "reasoning" artificial intelligence can diagnose patients more accurately than human physicians in a real-world clinical environment. The study, published on April 30, 2026, in the journal Science, highlights a significant shift in medical technology, as the AI model demonstrated a superior ability to navigate the "messy" data typical of emergency department settings.

The research utilized OpenAI’s "o1-preview" model, the company’s first large language model specifically designed for complex reasoning. Unlike previous iterations like GPT-4, which primarily relied on pattern recognition, the o1 series is capable of working through problems in a step-by-step, logical manner. This capability was put to the test against 76 actual cases from a Boston emergency department, where the AI's performance was compared directly against two expert attending physicians.

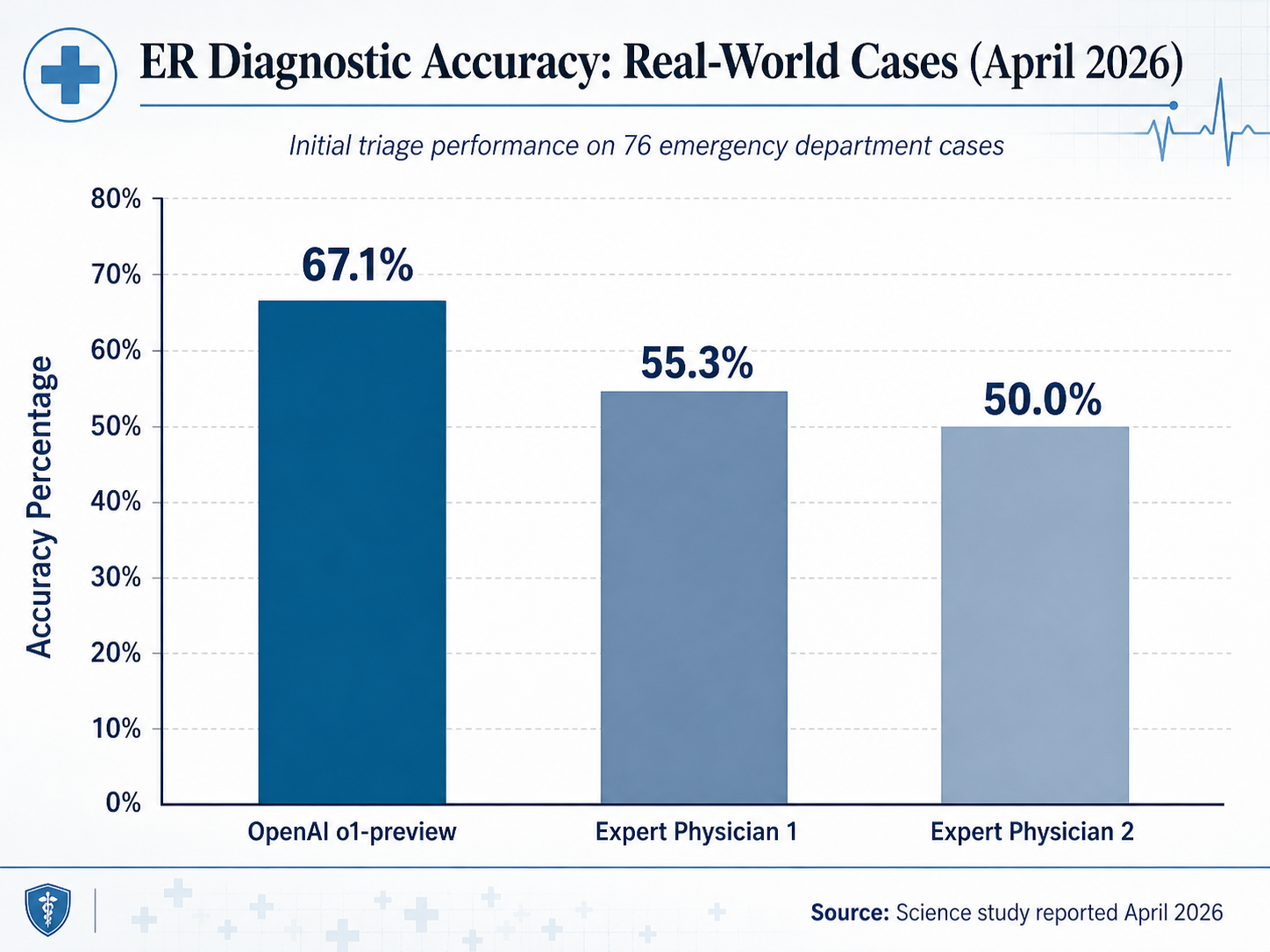

Surpassing Human Benchmarks

In the evaluation of 76 real-world ER cases, the o1-preview model achieved an exact or very close diagnostic accuracy of 67.1% during the initial triage stage. In contrast, the two human physicians scored 55.3% and 50.0%, respectively. The researchers also tested the model against 143 clinicopathological conferences published in The New England Journal of Medicine (NEJM). In those complex cases, the AI included the correct diagnosis in its differential in 78.3% of instances and provided a "helpful" diagnosis 97.9% of the time.

Arjun Manrai, Assistant Professor of Biomedical Informatics at Harvard Medical School and co-senior author of the study, noted the scale of the achievement. "We tested the AI model against virtually every benchmark, and it eclipsed both prior models and our physician baselines," Manrai said. He characterized the findings as a sign of a "profound change" in technology that is poised to reshape the landscape of modern medicine.

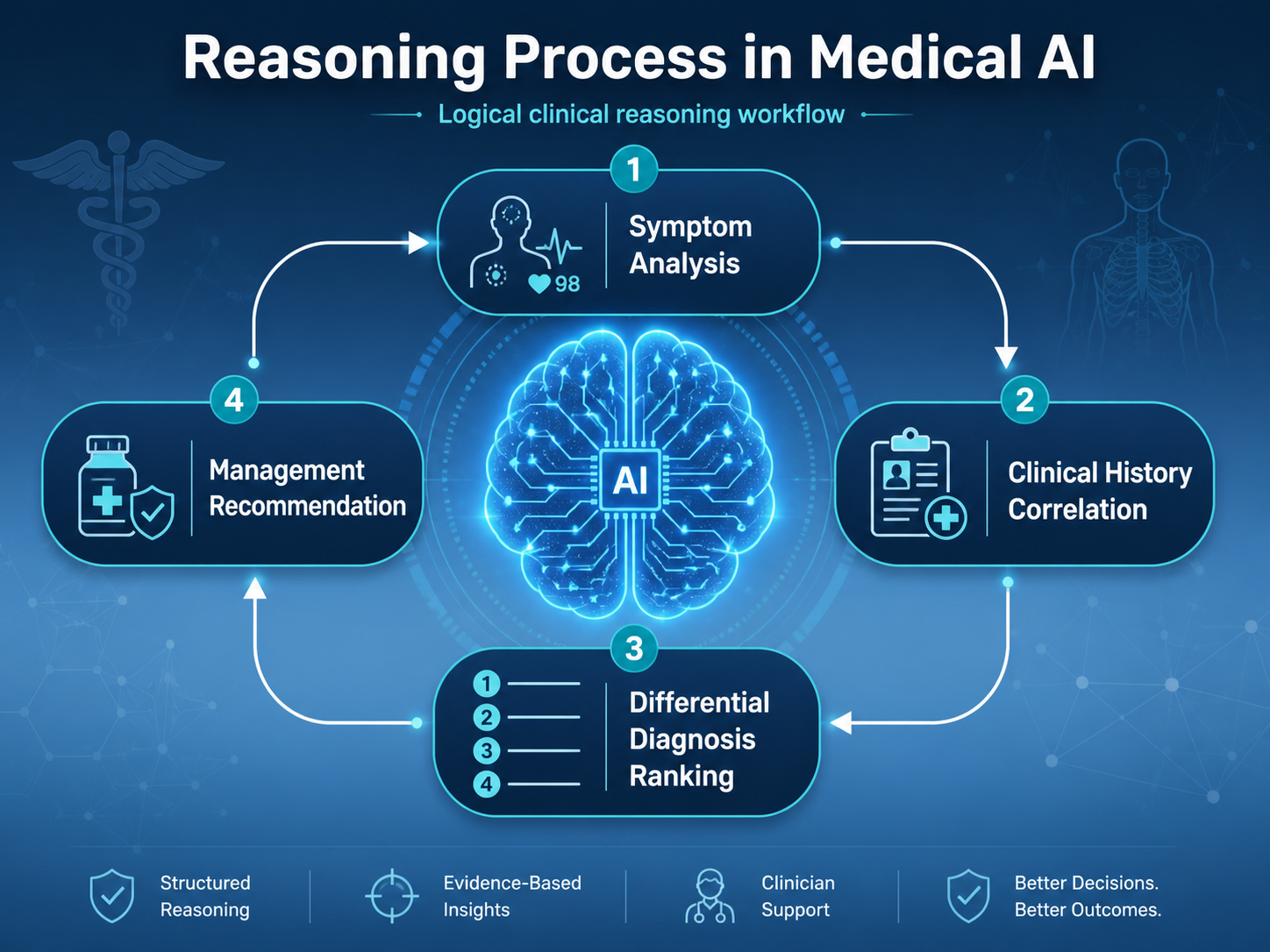

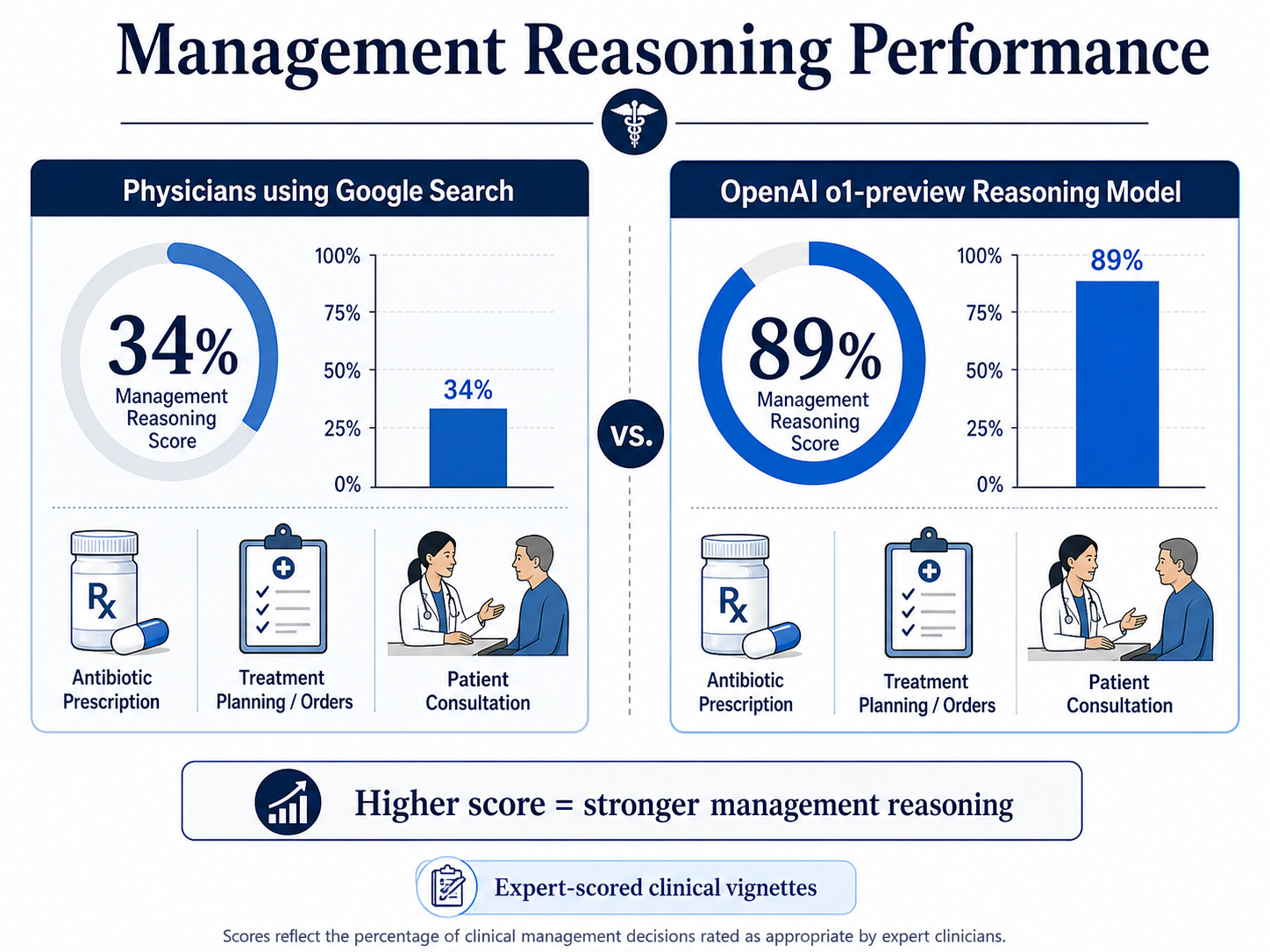

The Leap in Management Reasoning

Perhaps more striking than the diagnostic numbers was the AI’s performance in "management reasoning." This metric involves making critical care decisions, such as recommending specific antibiotic treatments or navigating sensitive end-of-life conversations. In these tasks, the o1-preview model scored a median of 89%, significantly outperforming physicians who used conventional aids like Google search, who scored a median of 34%.

Dr. Adam Rodman, a hospitalist and clinical researcher at Beth Israel Deaconess Medical Center, admitted he was initially skeptical of the experiment's potential. "I thought it was going to be a fun experiment but that it wouldn't work that well," Rodman said. "That was not at all what happened. This is the big conclusion for me—it works with the messy real-world data of the emergency department."

Despite the impressive results, the researchers cautioned that the study had clear limitations. The tests were entirely text-based, utilizing data from electronic health records. This means the AI did not have access to the sensory information—visual cues, physical examinations, or the tone of a patient's voice—that remains a cornerstone of human medical practice.

Redefining the Role of the Physician

The authors were careful to emphasize that these results do not suggest AI should replace doctors. Instead, they view the technology as a powerful assistive tool that requires human oversight to ensure patient safety. Peter Brodeur, an HMS Clinical Fellow and co-first author, warned that a model might correctly identify a top diagnosis while simultaneously suggesting unnecessary or harmful testing. "Humans should be the ultimate baseline when it comes to evaluating performance and safety," Brodeur noted.

As AI models increasingly "hit the ceiling" of traditional multiple-choice medical exams, this study advocates for a new era of evaluation. Researchers are calling for rigorous, prospective clinical trials in real-world healthcare environments to determine how these tools can be safely integrated into patient care.

Future Implications

The findings arrive at a time of rapid AI adoption in healthcare. As of 2025, approximately 20% of doctors and nurses globally were already using AI for second opinions. Other recent developments have shown AI identifying hidden disease patterns in sleep data with 89% accuracy and discovering biomarkers for Alzheimer’s disease a decade before symptoms appear.

Arjun Manrai stressed that the medical community must act now to evaluate this technology through formal trials. While the o1-preview model demonstrates a leap in reasoning, the challenge ahead lies in establishing legal and regulatory frameworks that can keep pace with a technology that is no longer just answering questions, but actively thinking through the complexities of human health.